Stop Paying the Zero-Initiative Tax and Switch to Proactive AI

You sit down with your coffee. You open your product backlog. There are 140 untriaged feature requests, bug reports, and sales notes from the past week. You open Microsoft Copilot. The cursor blinks.

It waits for you. You have to write the perfect prompt to get any value out of it. You have to tell it exactly which documents to read, which Jira epics to reference, and what format you want. You have to recall the exact name of the feedback file from yesterday.

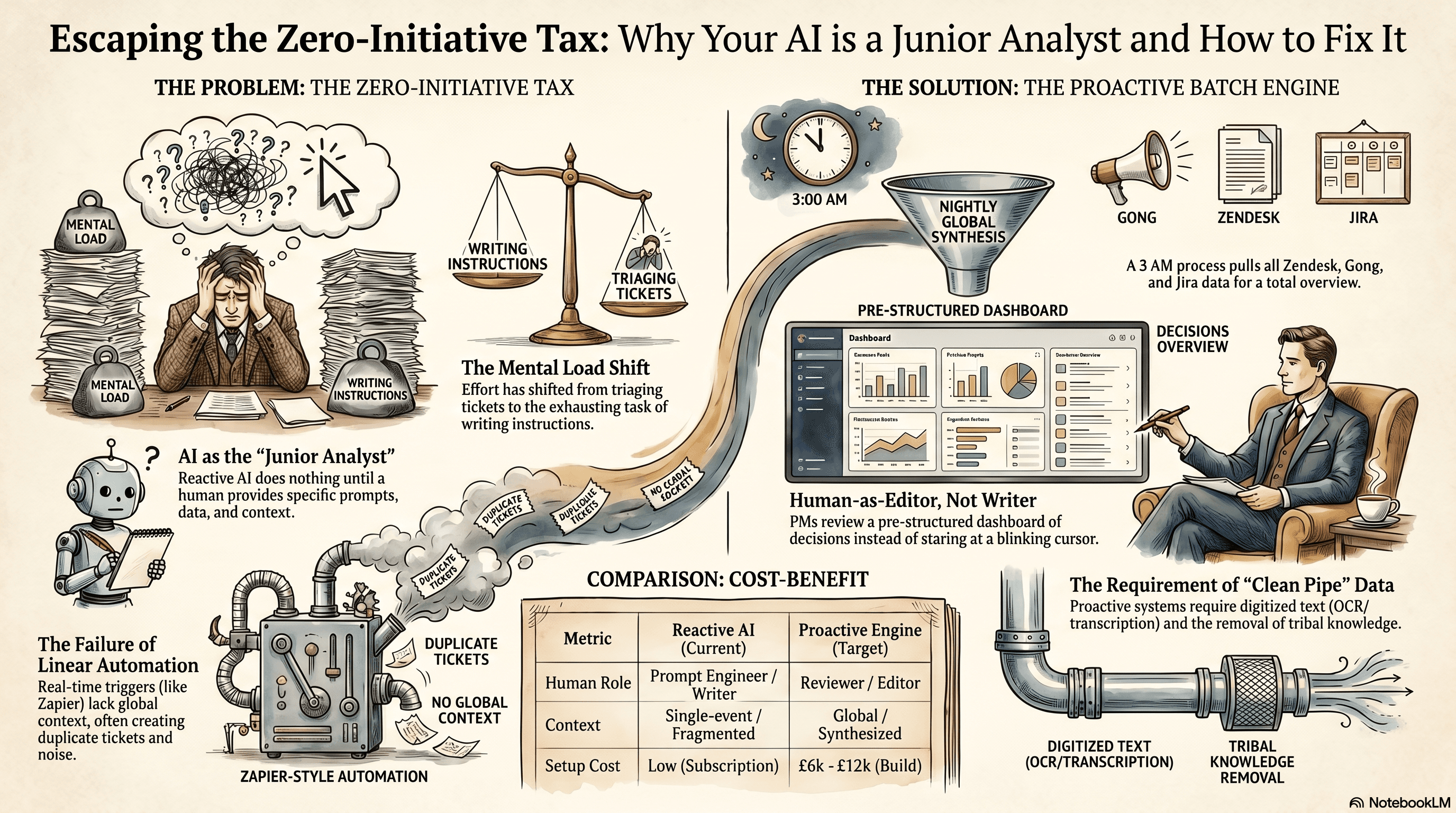

That's the reality of product management right now. We bought AI to do the heavy lifting. We ended up as full-time prompt engineers instead. The mental load hasn't decreased. It just shifted from reading tickets to writing instructions. It's exhausting.

The zero-initiative tax

The zero-initiative tax forces humans to spend significant time manually gathering data and context to compensate for the AI's lack of agency.

The zero-initiative tax is the hidden cost of paying for AI tools that do absolutely nothing until a human explicitly tells them what to do. You pay for the software subscription, but you also pay with the mental load of driving every single interaction.

Most SMEs roll out Microsoft Copilot or standard ChatGPT subscriptions and expect magic. They think the AI will act like a senior product manager. They expect it to spot trends in customer feedback or highlight a critical bug before it blows up.

But reactive AI doesn't work like that. It sits there like a junior analyst on their first day. If you don't hand them a highly specific, perfectly scoped task, they just stare at the wall. They have no agency. They don't proactively check the systems.

This tax hits product teams the hardest. Product management is entirely about synthesising chaos. You have inputs coming from Zendesk, Gong transcripts, Slack channels, and direct emails. The volume of unstructured data is staggering.

When your AI is reactive, the burden of gathering all that context falls entirely on you. You have to find the data, copy it, paste it into the chat box, and ask the right question. You have to know what you're looking for before you even start typing.

By the time you do all that, you could've just triaged the backlog yourself. The tool is supposed to save you time, but the orchestration effort cancels out the efficiency gains. You're just swapping backlog management for prompt management. End of.

Why the obvious fix fails

The obvious fix fails because it forces reactive AI to process data one event at a time, with no global context.

When founders realise the zero-initiative tax is bleeding their product team dry, they usually try to automate their way out. They string together Zapier flows. They connect Zendesk to OpenAI, and OpenAI to Jira.

The logic seems sound. A new customer ticket comes in. Zapier triggers an OpenAI prompt to summarise it. Zapier then creates a new Jira ticket with the summary. I see this exact setup constantly.

Here's what actually happens. Zapier processes data linearly, one trigger at a time. It has no global context. It can't step back and look at the whole board. It just blindly reacts to the webhook in front of it.

If five customers report the same checkout bug in one hour, Zapier runs five separate times. It creates five duplicate Jira tickets. It doesn't know they're related. The AI silently executes its isolated task and clogs your backlog with noise. It turns a minor reporting spike into an administrative nightmare.
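To make the duplicate-ticket problem concrete, here is a minimal Python sketch that contrasts one-ticket-per-trigger handling with looking at the whole batch first. The tickets are made up, and `normalise` is a deliberately crude stand-in for the semantic clustering an LLM would do. Real reports of the same bug rarely share identical wording.

```python
from collections import defaultdict

# Made-up feedback: five reports of one checkout bug, plus one unrelated request.
incoming = [
    {"id": "ZD-101", "subject": "Checkout fails at payment step"},
    {"id": "ZD-102", "subject": "checkout fails at payment step"},
    {"id": "ZD-103", "subject": "Checkout fails at payment step!"},
    {"id": "ZD-104", "subject": "CHECKOUT FAILS AT PAYMENT STEP"},
    {"id": "ZD-105", "subject": "Checkout fails at payment step "},
    {"id": "ZD-106", "subject": "Please add dark mode"},
]


def per_event(items):
    """Zapier-style: each trigger creates its own ticket, with no memory of earlier events."""
    return [[item["id"]] for item in items]


def normalise(subject):
    """Crude stand-in for semantic clustering: lowercase, drop punctuation, trim spaces."""
    return "".join(ch for ch in subject.lower() if ch.isalnum() or ch == " ").strip()


def batched(items):
    """Batch-style: see the whole set first, then propose one ticket per cluster."""
    clusters = defaultdict(list)
    for item in items:
        clusters[normalise(item["subject"])].append(item["id"])
    return list(clusters.values())


print(len(per_event(incoming)))  # 6 tickets
print(len(batched(incoming)))    # 2 tickets
```

The point is not the grouping rule; it's that grouping is only possible when the system can see all five reports at once.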

Then there's the Copilot approach. Teams try to use Copilot in Microsoft 365 to summarise long threads. But Copilot is heavily constrained by the immediate context window. You ask it to find themes across 50 emails, and it often just gives you generic bullet points that miss the technical nuance. It skips the edge cases.

The contrarian truth is that throwing more reactive AI at a context problem breaks your systems faster. You don't need a smarter model. You need a different trigger mechanism.

You need the system to stop reacting to single events. You need it to read the whole landscape asynchronously, while you sleep. Relying on real-time, single-event triggers is exactly how you ruin a perfectly good product backlog.

The approach that actually works

This n8n architecture uses Claude 3.5 Sonnet to batch-process feedback, turning unstructured data from multiple sources into actionable product decisions by morning.

The approach that actually works replaces real-time reactive prompts with an asynchronous, batch-processing engine that runs overnight. To fix this, you have to shift from reactive tools to a proactive system. You build an engine that presents you with decisions in the morning. This mirrors what OpenAI is doing with ChatGPT Pulse for personal use, but wired directly into your company operations.

Here's the exact mechanism. You stop triggering automations on every single email. Instead, you run a batch process.

At 3 AM, an n8n cron job fires. Via their APIs, it pulls all new Zendesk tickets, the last 24 hours of Gong call transcripts, and your current active Jira backlog. It assembles a complete snapshot of your product's reality in one place, so nothing from the past day is left out.
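A minimal sketch of the overnight gather step. It assumes each source has already been fetched into a list of dicts with a timezone-aware `created_at` field (a hypothetical shape; Zendesk, Gong and Jira each return their own formats). For simplicity it applies the same 24-hour window to tickets and transcripts and leaves the backlog unfiltered. The cron string is standard five-field syntax for 03:00 daily; n8n's Schedule Trigger node accepts a custom cron expression, but check which timezone your instance runs in.

```python
from datetime import datetime, timedelta, timezone

# Standard cron syntax for "03:00 every day" (minute 0, hour 3, any day/month/weekday).
NIGHTLY_CRON = "0 3 * * *"


def last_24_hours(records, run_at):
    """Keep records from the 24 hours before this run.

    The window is half-open, so runs exactly 24 hours apart never count a record twice.
    """
    start = run_at - timedelta(hours=24)
    return [r for r in records if start <= r["created_at"] < run_at]


def build_snapshot(zendesk_tickets, gong_transcripts, jira_backlog, run_at):
    """Assemble one payload holding everything the model needs to see together."""
    return {
        "window_end": run_at.isoformat(),
        "tickets": last_24_hours(zendesk_tickets, run_at),
        "transcripts": last_24_hours(gong_transcripts, run_at),
        # The backlog is the current open state, so it is not filtered by date.
        "backlog": jira_backlog,
    }


# Example run with made-up records.
run_at = datetime(2025, 1, 15, 3, 0, tzinfo=timezone.utc)
tickets = [
    {"id": "ZD-1", "created_at": run_at - timedelta(hours=2)},
    {"id": "ZD-2", "created_at": run_at - timedelta(hours=30)},  # belongs to the previous run
]
calls = [{"id": "GONG-9", "created_at": run_at - timedelta(hours=20)}]
backlog = [{"key": "SHOP-402", "priority": "P3"}]

snapshot = build_snapshot(tickets, calls, backlog, run_at)
print([t["id"] for t in snapshot["tickets"]])  # ['ZD-1']
```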

The n8n workflow then makes a single, large API call to Claude 3.5 Sonnet. You use Claude because its 200,000-token context window can take a large text dump in one request, and it holds on to details well, even ones buried in the middle of long inputs. You pass it a strict JSON schema for its output.

You instruct Claude to cluster the new feedback, compare it against the existing Jira epics, and output a structured JSON array. The output must contain proposed new tickets for novel issues, or flag existing Jira ticket IDs that need their priority bumped based on the new data.
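What that strict JSON schema might look like, sketched in Python. The field names (`action`, `summary`, `evidence`, `jira_id`, `proposed_priority`) are hypothetical, not a published format. The schema is written in JSON Schema style so it can be pasted into the prompt verbatim, and the parser re-checks the reply with the standard library, because a model can still return output that breaks the contract.

```python
import json

# Hypothetical output contract; field names are illustrative.
OUTPUT_SCHEMA = {
    "type": "array",
    "items": {
        "type": "object",
        "required": ["action", "summary", "evidence"],
        "properties": {
            "action": {"type": "string", "enum": ["create_ticket", "bump_priority"]},
            "summary": {"type": "string"},
            # IDs of the tickets/transcripts that support this proposal.
            "evidence": {"type": "array", "items": {"type": "string"}},
            # Only for bump_priority: the existing Jira issue key.
            "jira_id": {"type": "string"},
            "proposed_priority": {"type": "string", "enum": ["P1", "P2", "P3"]},
        },
    },
}

ACTIONS = OUTPUT_SCHEMA["items"]["properties"]["action"]["enum"]
REQUIRED = set(OUTPUT_SCHEMA["items"]["required"])


def parse_proposals(raw_reply):
    """Parse the model's reply; raise instead of passing malformed output downstream."""
    proposals = json.loads(raw_reply)
    if not isinstance(proposals, list):
        raise ValueError("expected a JSON array")
    for p in proposals:
        if not isinstance(p, dict):
            raise ValueError("each proposal must be a JSON object")
        missing = REQUIRED - set(p)
        if missing:
            raise ValueError(f"missing fields: {sorted(missing)}")
        if p["action"] not in ACTIONS:
            raise ValueError(f"unknown action: {p['action']!r}")
        if p["action"] == "bump_priority" and "jira_id" not in p:
            raise ValueError("bump_priority proposals need a jira_id")
    return proposals


reply = (
    '[{"action": "bump_priority", "summary": "Checkout fails at payment", '
    '"evidence": ["ZD-101", "ZD-102", "ZD-103"], "jira_id": "SHOP-402", '
    '"proposed_priority": "P1"}]'
)
print(len(parse_proposals(reply)))  # 1
```

Failing loudly here means a malformed reply stops the run instead of quietly producing a half-filled dashboard.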

Finally, n8n parses that JSON. It doesn't write straight to Jira. Instead, it creates a clean review dashboard in Notion or Airtable.

When your product manager logs in at 8 AM, they don't face a blinking cursor. They see a dashboard. "Three enterprise clients reported the checkout bug. I suggest bumping Jira Epic 402 to P1. Approve?"

They click a checkbox, and a secondary n8n webhook executes the final Jira update. The human remains in the loop as an editor, not a writer.
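A sketch of that review step, assuming proposals shaped like the hypothetical examples below. Each proposal becomes a dashboard row that starts unapproved, and only ticked rows are handed to whatever performs the Jira update. Writing the rows to Notion or Airtable, and the approval webhook itself, are left out.

```python
def to_review_rows(proposals):
    """Turn parsed proposals into dashboard rows a product manager can approve or ignore."""
    rows = []
    for p in proposals:
        n = len(p["evidence"])
        label = "report" if n == 1 else "reports"
        if p["action"] == "bump_priority":
            priority = p.get("proposed_priority", "a higher priority")
            text = f"{n} {label}: {p['summary']}. Suggest bumping {p['jira_id']} to {priority}. Approve?"
        else:
            text = f"{n} {label}: {p['summary']}. Suggest creating a new ticket. Approve?"
        # Every row starts unapproved; nothing reaches Jira until a human ticks it.
        rows.append({"text": text, "proposal": p, "approved": False})
    return rows


def approved_updates(rows):
    """What the secondary approval webhook would act on: only the ticked rows."""
    return [row["proposal"] for row in rows if row["approved"]]


# Made-up proposals for illustration.
proposals = [
    {"action": "bump_priority", "summary": "Checkout fails at payment",
     "evidence": ["ZD-101", "ZD-102", "ZD-103"], "jira_id": "SHOP-402", "proposed_priority": "P1"},
    {"action": "create_ticket", "summary": "Dark mode request", "evidence": ["ZD-106"]},
]
rows = to_review_rows(proposals)
print(rows[0]["text"])  # 3 reports: Checkout fails at payment. Suggest bumping SHOP-402 to P1. Approve?
rows[0]["approved"] = True  # the product manager ticks the first checkbox
print(len(approved_updates(rows)))  # 1
```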

This build takes about two to three weeks. It costs roughly £6k to £12k depending on how messy your current API permissions are. It pays for itself in the first month.

The main failure mode is hallucination. LLMs love to invent Jira ticket IDs that don't exist. You catch this by forcing the n8n workflow to validate every ticket ID against the Jira API before it writes to Notion. If the ID bounces, the workflow flags it for human review.
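A minimal sketch of that ID check. The lookup is injected as a function so the logic can be tested offline; in a real workflow it might call Jira Cloud's `GET /rest/api/3/issue/{issueIdOrKey}` endpoint and treat a 404 as "does not exist". The key pattern is an assumption based on Jira's usual `PROJECT-123` format, and the sample keys are made up.

```python
import re

# Assumed issue-key shape: a project key starting with a letter, a hyphen, then a number.
JIRA_KEY = re.compile(r"^[A-Z][A-Z0-9_]+-\d+$")


def split_by_validity(proposals, issue_exists):
    """Separate proposals whose Jira IDs check out from ones that need a human.

    issue_exists: any callable returning True if Jira knows the key.
    """
    ok, flagged = [], []
    for p in proposals:
        key = p.get("jira_id")
        if key is None:
            ok.append(p)  # new-ticket proposals have no ID to verify
        elif JIRA_KEY.match(key) and issue_exists(key):
            ok.append(p)
        else:
            flagged.append({**p, "flag": "Jira ID not found: needs human review"})
    return ok, flagged


# Offline stand-in for the Jira lookup (hypothetical project keys).
known_keys = {"SHOP-402", "SHOP-17"}
ok, flagged = split_by_validity(
    [
        {"action": "bump_priority", "jira_id": "SHOP-402"},
        {"action": "bump_priority", "jira_id": "SHOP-9999"},  # invented by the model
        {"action": "create_ticket"},
    ],
    issue_exists=lambda key: key in known_keys,
)
print(len(ok), len(flagged))  # 2 1
```

Flagged items still reach the dashboard, just marked for review, which matches the "flag it for human review" behaviour described above.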

Where this breaks down

This proactive architecture breaks down if your company relies on undocumented tribal knowledge or unstructured, non-text data. The approach is powerful, but it needs clean, digitised text. If your inputs are messy, the system chokes, and you can't automate a process whose key facts live only in people's heads.

If your sales team refuses to use Gong and just leaves vague voice notes in Pipedrive, the AI has nothing to read. If your user feedback arrives as scanned PDFs, or as screenshots of error messages with no text version, the error rate can jump from around 1% to roughly 15%.

You need OCR for images and transcription for audio before you even attempt this build. If you try to feed raw, unstructured files into a batch prompt, the token cost spikes and the output quality drops. The AI will spend all its compute trying to parse the mess instead of analysing the product themes.
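One way to enforce that is a pre-processing gate that sorts incoming files before anything reaches the batch prompt. This is a sketch: the extension lists are assumptions, and treating every PDF as needing OCR is a simplification (PDFs with a real text layer can be extracted directly). The OCR and transcription services themselves are out of scope.

```python
from pathlib import PurePath

# Hypothetical routing table: adjust to the formats your channels actually produce.
READY_AS_TEXT = {".txt", ".csv", ".json", ".eml", ".md"}
NEEDS_OCR = {".png", ".jpg", ".jpeg", ".pdf"}  # assumes PDFs are scans
NEEDS_TRANSCRIPTION = {".mp3", ".m4a", ".wav"}


def route(filename):
    """Decide what has to happen to a file before it may enter the overnight batch."""
    ext = PurePath(filename).suffix.lower()
    if ext in READY_AS_TEXT:
        return "batch"
    if ext in NEEDS_OCR:
        return "ocr"
    if ext in NEEDS_TRANSCRIPTION:
        return "transcribe"
    # Anything unrecognised goes to a person rather than being fed raw to the model.
    return "human_review"


for name in ["tickets.csv", "Screenshot 2025-01-14.PNG", "sales-note.m4a", "rig-layout.dwg"]:
    print(name, "->", route(name))
```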

You also can't use this for highly technical hardware debugging. If a bug requires reading a stack trace, understanding a physical manufacturing tolerance, or interpreting a proprietary log file, an LLM is likely to guess confidently and get it wrong.

Check your data formats before you spend a single pound on API calls. If your team communicates primarily through undocumented phone calls and whiteboard sketches, fix your culture first. AI can't read your mind.

What to do now

You don't need to spend £10k tomorrow to test this concept. You can start shifting your product team from reactive to proactive this week with tools you already pay for.

- Audit your feedback channels: Open your Zendesk or shared support inbox. Count how many distinct places customer feedback lands. If it's more than three, consolidate them first. AI needs a single, clean pipe to read from.

- Test batch prompting manually: Before building an n8n workflow, do it by hand. At the end of your sprint, export your weekly support tickets to a CSV. Upload it to ChatGPT or Claude. Ask it to cluster the issues and map them to your current sprint goals. See if the output is actually useful.

- Restrict your Zapier triggers: If you currently have Zapier creating Jira tickets automatically from emails, turn it off. Change the action step to drop the summaries into a single Slack channel or a Notion page instead. Force a human to review the batch before it pollutes your backlog.

- Explore proactive settings: If you have access to ChatGPT Pulse on mobile, turn it on and connect your Google Workspace. Watch how it changes your morning routine. Experience what it feels like when the AI brings you answers before you ask the question. That's the standard you want for your company operations.
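For the manual batch test, a small script can turn the CSV export into one clustering prompt to paste into ChatGPT or Claude. This sketch assumes the export has `subject` and `description` columns (hypothetical names; Zendesk and other tools label their columns differently), and it truncates long descriptions to keep the prompt manageable.

```python
import csv
import io


def build_clustering_prompt(csv_text, sprint_goals, max_chars=500):
    """Number each ticket and wrap the list in a clustering instruction."""
    reader = csv.DictReader(io.StringIO(csv_text))
    lines = []
    for i, row in enumerate(reader, start=1):
        subject = (row.get("subject") or "").strip()
        # Flatten newlines and cap length so one long ticket can't dominate the prompt.
        description = (row.get("description") or "").replace("\n", " ")[:max_chars]
        lines.append(f"{i}. {subject}: {description}")
    goals = "\n".join(f"- {goal}" for goal in sprint_goals)
    return (
        "Cluster the support tickets below into themes. For each theme, list the ticket "
        "numbers, give a one-line summary, and say which sprint goal it maps to (or 'none').\n\n"
        f"Sprint goals:\n{goals}\n\nTickets:\n" + "\n".join(lines)
    )


# In practice: csv_text = open("tickets.csv", newline="", encoding="utf-8").read()
sample = "subject,description\nCheckout fails,Card declined at payment\nDark mode,Please add it\n"
print(build_clustering_prompt(sample, ["Improve checkout conversion"]))
```

If the clusters that come back are vague or wrong by hand, automating the same prompt overnight won't fix them.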
