Closing the Prompt-to-Production Gap in AI Video Marketing

You sit down with your morning coffee, open LinkedIn, and see the feed flooded with hyper-realistic AI videos. A golden retriever walking through a neon-lit Tokyo street. A cinematic drone shot of a rugged coastline. OpenAI has just made Sora available to ChatGPT Plus users [[source](https://openai.com/blog/sora-availability)].

Your marketing director forwards you the link with a quick message. She asks if you can finally cancel that £3,000 monthly retainer with your freelance videographer. It looks like the end of expensive shoots, location scouting, and endless post-production delays.

You type a quick reply, feeling like you just found a massive shortcut for your Q3 campaigns. You imagine slashing your content creation costs overnight. The reality is much messier.

The prompt-to-production gap

The prompt-to-production gap is the hidden expanse of human labour required to turn a raw AI video clip into a finished marketing asset that actually sells your product. You type a prompt into the interface. You generate a stunning clip of a coffee cup steaming on a wooden table. It looks perfect at first glance.

Then you look closer and realise the cup is the wrong shape. The steam moves backwards. There is no logo on the ceramic. You cannot just drop this raw file into a Facebook ad campaign and expect it to convert. Raw footage is only the first ten percent of the work.

Marketing teams assume the AI replaces the entire creative supply chain. It does not. It only replaces the camera and the physical location. You still need an editor to cut the clips, a colourist to match the grades, a sound designer to add audio, and a copywriter to script the voiceover.

The hidden costs of generative AI in production are staggering when you factor in this extra labour [[source](https://www.marketingweek.com/generative-ai-video-production-costs-2025/)]. SMEs expect their marketing budget to drop by half overnight. Instead, they end up paying the exact same amount.

The budget simply gets reallocated. You stop paying camera operators and start paying prompt engineers and post-production editors to fix AI hallucinations. The gap swallows the savings entirely. You trade the cost of a physical shoot for the cost of endless digital revisions.

Why the ChatGPT subscription misses the mark

Handing a ChatGPT Plus subscription to a junior marketing executive fails because generative AI lacks the deterministic control required for brand consistency. Most SME owners try this obvious route first. You buy the subscription, hand it over, and ask for a 30-second promotional video. You expect a polished asset by the end of the week.

The root problem is that models like Sora do not reliably carry a character, a prop, or a lighting setup from one generation to the next. You ask for a shot of a woman using your software on a laptop in a modern office. It gives you a beautiful, cinematic clip. Then you need a second shot of the same woman smiling directly at the camera.

The model generates a completely different person. The lighting has changed from warm morning sun to harsh office fluorescent. The laptop is now a bizarre hybrid of a MacBook and a toaster.

You cannot build a cohesive brand narrative when your main character morphs into a new human every three seconds. The pattern I keep seeing is clear. Trying to force an AI video model to act like a traditional film set is a massive waste of time.

In my experience, your junior executive will spend forty hours tweaking prompts trying to get a matching shot. They will add negative prompts, adjust seed numbers, and read endless Reddit threads looking for a workaround. They will try to force the tool to do something it hates doing.

That is forty hours of salary burned on a shot that a human videographer could have captured in ten minutes. The tool is designed for infinite variation, not strict continuity. When you fight the model's core architecture, you lose.

You end up with a folder full of disconnected, slightly uncanny clips. Your team then tries to stitch them together in Premiere Pro. They try masking the errors with heavy text overlays and fast cuts. The final result looks cheap, disjointed, and entirely unprofessional.

Building a hybrid video pipeline

A hybrid pipeline uses AI for atmospheric background elements while retaining human-shot footage for the core product message and brand identity.

The only AI video approach that actually works for SMEs treats generative models as a stock footage engine rather than a human director. You do not ask the AI to generate your entire advert. You use it to fill the visual gaps between your core, human-shot assets.

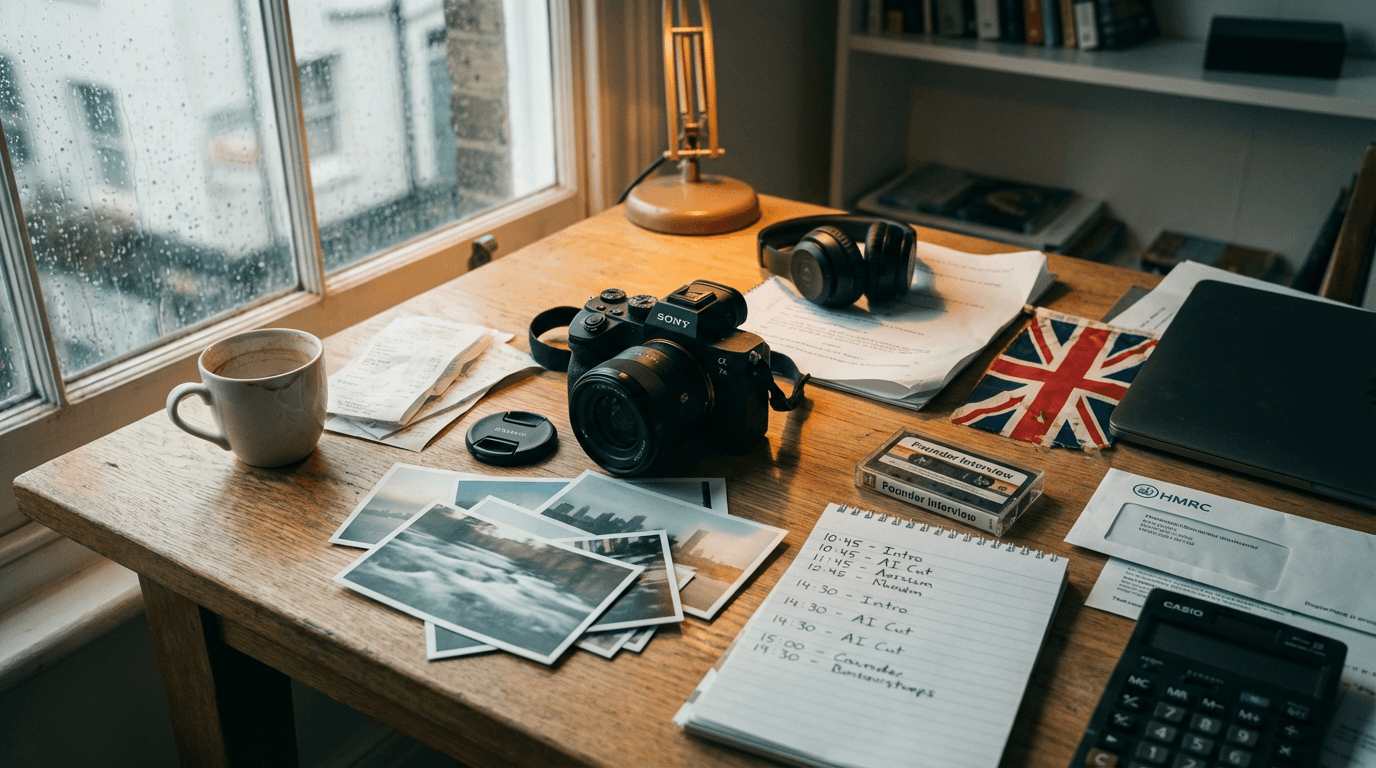

Here is what a functional pipeline looks like in practice. You start by filming your founder or product on a high-quality camera. This is your hero footage. It guarantees absolute brand accuracy, correct lighting, and genuine human empathy.

Next, you map out the b-roll requirements. You need a shot of a busy London street, a close-up of a clock ticking, and an abstract data visualisation. Instead of buying expensive stock footage subscriptions, you generate these specific, isolated clips using OpenAI Sora.

You feed the raw Sora clips directly into Adobe Premiere Pro. Because these are background elements, the lack of strict character consistency does not matter at all. The viewer only sees them for two seconds before the cut.

You can even automate the b-roll prompting. An n8n webhook watches your Notion storyboard database. When a scene is marked as ready, it triggers a Claude API call with a strict JSON schema to write an optimised Sora prompt. That prompt drops right back into the Notion card.
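The validation step in that automation can be sketched as follows. This is a minimal illustration, assuming the language model is asked to reply with a JSON object holding three hypothetical fields (`prompt`, `duration_seconds`, `aspect_ratio`). The field names, the limits, and both function names are assumptions made for this example. They are not part of n8n, Notion, Claude, or Sora, and the API call itself is left out.

```python
import json

# Hypothetical schema for the reply we ask the model to produce.
# Field names and limits are assumptions for illustration only.
REQUIRED_FIELDS = {"prompt": str, "duration_seconds": int, "aspect_ratio": str}
ALLOWED_ASPECT_RATIOS = {"16:9", "9:16", "1:1"}
MAX_DURATION_SECONDS = 10


def build_instruction(scene_description: str) -> str:
    """Build the text instruction sent to the model for one storyboard scene."""
    return (
        "Write a video-generation prompt for this b-roll scene. "
        f"Reply with JSON only, using the keys {sorted(REQUIRED_FIELDS)}.\n"
        f"Scene: {scene_description.strip()}"
    )


def parse_prompt_reply(raw_reply: str) -> dict:
    """Parse and validate the model's reply; raise ValueError on any schema breach."""
    try:
        data = json.loads(raw_reply)
    except json.JSONDecodeError as exc:
        raise ValueError(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("reply must be a JSON object")

    # Reject unexpected keys so stray fields never reach the storyboard card.
    extra = set(data) - set(REQUIRED_FIELDS)
    if extra:
        raise ValueError(f"unexpected fields: {sorted(extra)}")

    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in data:
            raise ValueError(f"missing field: {field}")
        value = data[field]
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected_type):
            raise ValueError(f"{field} must be of type {expected_type.__name__}")

    if not data["prompt"].strip():
        raise ValueError("prompt must not be empty")
    if not 1 <= data["duration_seconds"] <= MAX_DURATION_SECONDS:
        raise ValueError("duration_seconds is out of range")
    if data["aspect_ratio"] not in ALLOWED_ASPECT_RATIOS:
        raise ValueError("aspect_ratio is not allowed")
    return data
```

Rejecting a malformed reply before it is written back to the storyboard keeps a bad generation from silently becoming the prompt your editor relies on.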

For the audio layer, you route your script through ElevenLabs to generate a scratch voiceover. This allows your editor to pace the video perfectly against the visuals. You only pay a human voice actor for the final recording once the edit is locked.

The operational flow is tight and predictable. Your editor pulls the hero footage from Google Workspace, drops the Sora b-roll onto the timeline, and uses Frame.io to share the draft with the marketing director. The review cycle focuses on pacing, not fixing AI mistakes.

Setting up this hybrid workflow takes about two weeks of process mapping and training. Expect it to cost between £3,000 and £5,000 in software licences, API usage, and initial editor training, depending on your existing tech stack.

The most common failure mode here is over-generation. Your team gets distracted by the novelty of the AI and generates five hundred clips they do not actually need. You catch this by enforcing strict, written storyboards before anyone is allowed to open the prompt interface. If a shot is not on the storyboard, it does not get generated.
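If your generation requests pass through a script, the storyboard rule can be enforced in code as well as on paper. The sketch below is one possible gate, assuming each request carries a storyboard shot ID. The `ShotRequest` type, the `filter_to_storyboard` function, and the per-shot take limit are all hypothetical and exist only for this example.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ShotRequest:
    shot_id: str  # hypothetical storyboard identifier, e.g. "S03"
    prompt: str


def filter_to_storyboard(requests, approved_shot_ids, max_takes_per_shot=3):
    """Split requests into (approved, rejected).

    A request is approved only if its shot is on the storyboard and the
    shot has not already used up its allowance of takes.
    """
    approved, rejected = [], []
    takes = Counter()
    for request in requests:
        on_storyboard = request.shot_id in approved_shot_ids
        if on_storyboard and takes[request.shot_id] < max_takes_per_shot:
            takes[request.shot_id] += 1
            approved.append(request)
        else:
            rejected.append(request)
    return approved, rejected
```

Capping the number of takes per shot is a design choice rather than a requirement. It puts a hard ceiling on how many clips a single storyboard entry can consume.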

When to keep the cameras rolling

Generative video breaks down completely if your product category requires exact visual representation to prevent customer returns. This hybrid approach is not universal. You need to check your product category before committing to any AI video workflow.

Look at a fashion retailer selling a specific cut of a waterproof jacket. The AI does not know the exact seam placement. It does not know the precise shade of navy blue. It cannot predict how the proprietary fabric folds when the model walks.

It will hallucinate these details entirely. The jacket will look slightly different in every single frame. When a customer buys the item based on the video and receives a product that looks different, your return rate will spike.

The same applies to complex machinery, software interfaces, or architectural plans. If the visual truth of the product is the primary selling point, you must use a real camera. Generative tools are for mood, context, and background texture. They are not product photographers.

Before you cancel your next shoot, look at your storyboard. If the core message relies on showing exactly what the customer will receive in the post, keep the cameras rolling.

The rush to cut costs with generative video is based on a fundamental misunderstanding of how marketing assets are built. You are not paying your production agency just to point a lens at a subject. You are paying them for narrative control, brand consistency, and the sheer operational friction of turning raw media into a persuasive argument. Handing a powerful AI tool to an untrained team does not eliminate that friction. It just shifts the bottleneck from the film set to the editing suite.

The prompt-to-production gap remains the true cost of doing business. The question is not whether AI can generate a photorealistic video of a dog in Tokyo. It is whether you understand exactly which parts of your creative pipeline actually require human taste, because that is the only thing stopping your brand from looking like everyone else.
