Scaling E-commerce: How to Localise Product Catalogues Without Data Errors

You just signed a logistics deal to ship your products to France and Germany. Your shipping rates are locked. Your payment gateways are ready. Now your ops manager is staring at an export file of 4,000 Shopify SKUs, wondering how to translate the entire catalogue by the end of the week.

The immediate instinct is to copy and paste everything into a free web translator. Then someone points out the thousands of bullet points, bold tags, and hyperlink structures embedded in your product descriptions. You realise this is not a language problem. It is a data pipeline problem.

You need a programmatic way to turn English e-commerce data into German and French e-commerce data without destroying the layout. The two heavyweights for this job are DeepL Pro and Google Cloud Translation API. But picking the right engine is only half the battle.

The markup translation tax

The markup translation tax is the hidden operational cost of manually fixing broken HTML tags and formatting errors after a basic AI tool translates your product catalogue.

Every e-commerce platform stores product descriptions as HTML. When your copywriter bolded a feature or added a bulleted list in Shopify, the database saved <strong> and <ul> tags. If you extract that raw data and feed it into a standard consumer translation tool, the tool does not know what those tags mean.

It either translates the English tags into literal German words, or it strips them out entirely. The result is a mess. Your beautifully formatted product page arrives in the German storefront as a single, unreadable block of text.

Your accounts assistant then spends three weeks manually re-adding the bold text, bullet points, and hyperlink structures. They are guessing where the formatting should go because they do not speak German. They cross-reference the English site, find the third bullet point, and try to match it to the third sentence in the German text block. This is the markup translation tax.

It affects every SME that tries to localise their website on the cheap. It persists because founders view translation as a straightforward text-in, text-out process. They ignore the structural layer.

You are paying a human a £30k salary to do data entry that a properly configured machine translation API handles natively. When you expand into Europe, the volume of text multiplies.

A catalogue of 4,000 SKUs in two new languages means 8,000 translated descriptions. You cannot brute-force that with manual formatting checks. You need a system that respects the code while translating the words.

Why the obvious fix fails

Most SMEs try to fix this by wiring Zapier directly into a standard ChatGPT API prompt, or they install a generic £15-a-month Shopify translation app.

The logic seems sound. You have a ChatGPT Plus subscription, and it speaks perfect French. Why not just ask it to translate the product descriptions?

Here is what actually happens. Large Language Models are generative. They are trained to predict the next plausible word, not to stay faithful to a source text. If you ask an LLM to translate a technical description for a waterproof jacket, it will often try to improve the copy.

It will invent new adjectives. It will hallucinate features that your jacket does not have. You do not want creativity in your product database. You want exact, high-fidelity machine translation.

In my experience, relying on a basic Zapier flow to push text to OpenAI for bulk translation introduces a 5% to 10% hallucination rate. When you are translating 4,000 products, that means 200 to 400 of your listings now contain fabricated information.

If a customer in Berlin buys a jacket because the AI hallucinated the word "Gore-Tex", you are paying for that return. You also risk damaging your brand reputation in a new market before you even establish a foothold.

The generic Shopify apps are equally flawed. They use the cheapest possible routing under the hood. They do not let you set custom brand glossaries.

If your brand uses the term "Drop Stitch" for paddleboards, a generic app will translate it literally into French. Your highly technical sporting equipment suddenly sounds like a sewing kit, entirely confusing your buyers.

You need a dedicated machine translation engine. DeepL Pro and Google Cloud Translation API are built specifically for this. They are designed to render the source text faithfully rather than generate new copy, so they are far less likely to invent facts.

But simply buying access to these APIs is not enough. If you just dump your raw text into their standard endpoints without configuring the payload, you will hit the exact same formatting walls. You have to use their advanced features.

The approach that actually works

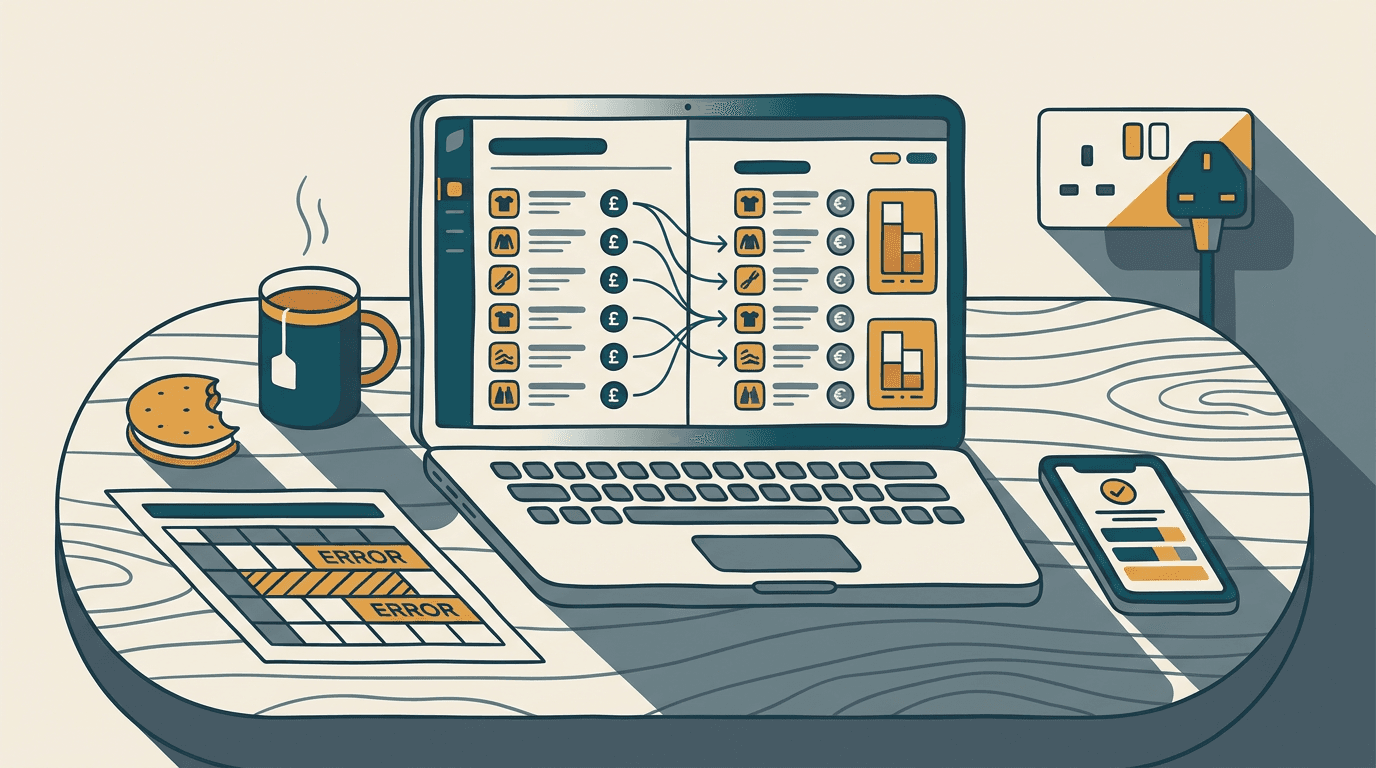

A technical architecture showing Shopify webhooks passing through Make to the DeepL API, using specific HTML tag handling parameters.

The correct way to localise your catalogue is to build a middleware pipeline that extracts the data, protects the HTML, applies a brand glossary, and syncs it back.

I recommend using Make to orchestrate this. Make pulls the product JSON from your Shopify store. The payload includes the title, the HTML description, and the metadata. Make then routes this data to DeepL API Pro.

DeepL Pro is the premier engine for European languages. It costs a base of $5.49 a month plus $25 per million characters. For an SME catalogue of this size, your API cost will be under £100.
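To sanity-check that figure against your own catalogue, a rough calculation helps. The sketch below uses the prices quoted above. The average of 400 billed characters per description is purely an assumption, so swap in the real number from your export, and expect longer descriptions to push the total up.

```python
# Rough DeepL API Pro cost estimate.
# Prices are the ones quoted in the article; the average description
# length used in the example call is an assumption, not a measured value.
BASE_FEE_USD = 5.49                 # monthly base fee
PRICE_PER_MILLION_CHARS_USD = 25.0  # usage fee


def estimate_cost_usd(skus: int, avg_chars: int, target_languages: int) -> float:
    """Cost of translating every SKU once into every target language,
    including one month's base fee."""
    total_chars = skus * avg_chars * target_languages
    return BASE_FEE_USD + total_chars / 1_000_000 * PRICE_PER_MILLION_CHARS_USD


# Assumed: 4,000 SKUs, about 400 characters each, French and German.
print(f"${estimate_cost_usd(4000, 400, 2):.2f}")  # prints $85.49
```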

DeepL has a specific parameter for handling markup. In your API call, you set tag_handling="html". This tells the engine to translate the text but leave the HTML tags exactly where they belong. The <strong> tags wrap around the correct translated words.

You also deploy a custom glossary. You upload a CSV file to DeepL containing your locked brand terms. If you sell "Navy Blue" boots, the glossary forces the API to use the translation your industry actually uses for that term, rather than a literal word-for-word rendering.
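As a sketch of what that upload can look like outside Make, the helper below builds the request body for DeepL's glossary creation endpoint (`POST /v2/glossaries`) using tab-separated entries. The function name and the glossary name are hypothetical, and the example target term is a placeholder that a native speaker should confirm.

```python
def build_glossary_payload(name: str, source_lang: str, target_lang: str,
                           terms: dict) -> dict:
    """Build the JSON body for creating a DeepL glossary.

    `terms` maps each source-language term to the exact target term
    the engine must use.
    """
    lines = []
    for source, target in terms.items():
        for term in (source, target):
            # Tabs and newlines are the separators in the TSV format,
            # so they cannot appear inside a term.
            if "\t" in term or "\n" in term:
                raise ValueError(f"term contains a tab or newline: {term!r}")
        lines.append(f"{source}\t{target}")
    return {
        "name": name,
        "source_lang": source_lang,
        "target_lang": target_lang,
        "entries": "\n".join(lines),
        "entries_format": "tsv",
    }


# Placeholder entry: keep the brand term untranslated in German.
payload = build_glossary_payload(
    "brand-terms-en-de", "en", "de", {"Drop Stitch": "Drop Stitch"}
)
```

The response to that request includes a glossary ID, which is the value you pass along with each translation call.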

Make sends the glossary ID alongside the HTML payload. It also specifies the source and target languages to prevent the API from guessing. DeepL processes the request and returns perfectly formatted, accurately translated HTML.
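If you want to see what Make is sending under the hood, here is a minimal sketch of the same request built with Python's standard library. It targets DeepL's `/v2/translate` endpoint with `tag_handling` set to `html`. The function name, the auth key, and the glossary ID are placeholders, and the request is only constructed here, not sent.

```python
import json
import urllib.request

DEEPL_TRANSLATE_URL = "https://api.deepl.com/v2/translate"


def build_translate_request(auth_key, html_fragments, source_lang,
                            target_lang, glossary_id=None):
    """Build (but do not send) a DeepL translate request for HTML text."""
    body = {
        "text": list(html_fragments),
        "source_lang": source_lang,   # set explicitly so the API does not guess
        "target_lang": target_lang,
        "tag_handling": "html",       # translate the words, keep the tags
    }
    if glossary_id:
        body["glossary_id"] = glossary_id
    return urllib.request.Request(
        DEEPL_TRANSLATE_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"DeepL-Auth-Key {auth_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_translate_request(
    "YOUR_AUTH_KEY",  # placeholder
    ["<p><strong>Waterproof</strong> shell with taped seams</p>"],
    "EN", "DE",
    glossary_id="YOUR_GLOSSARY_ID",  # placeholder
)
# urllib.request.urlopen(request) would send it; the JSON response
# carries the translated HTML under translations[n]["text"].
```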

Make then sends an HTTP POST request to Shopify's GraphQL Admin API to register the translation against the product, dropping the new language directly into your European storefront.
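For reference, GraphQL calls are sent as POST requests with a JSON body. The sketch below shows roughly what that body can look like, assuming Shopify's `translationsRegister` mutation and the `body_html` key for the product description. The helper name and the example IDs are hypothetical, and the digest has to be fetched from Shopify first, so check the field names against the current Admin API reference before relying on this.

```python
# Assumed mutation shape; verify against Shopify's Admin GraphQL reference.
TRANSLATIONS_REGISTER = """
mutation RegisterTranslation($resourceId: ID!, $translations: [TranslationInput!]!) {
  translationsRegister(resourceId: $resourceId, translations: $translations) {
    userErrors { field message }
    translations { key locale value }
  }
}
"""


def build_translation_payload(product_gid: str, locale: str,
                              translated_html: str, digest: str) -> dict:
    """Build the JSON body that registers one translated description."""
    return {
        "query": TRANSLATIONS_REGISTER,
        "variables": {
            "resourceId": product_gid,
            "translations": [{
                "key": "body_html",      # the product description field
                "locale": locale,
                "value": translated_html,
                # Digest of the English source content, read from
                # Shopify beforehand (placeholder in the call below).
                "translatableContentDigest": digest,
            }],
        },
    }


payload = build_translation_payload(
    "gid://shopify/Product/1234567890",  # hypothetical product ID
    "de",
    "<p><strong>Wasserdicht</strong></p>",
    "DIGEST_FROM_SHOPIFY",               # placeholder
)
```

Whatever tool sends this, always read the `userErrors` list in the response. A 200 status alone does not mean the translation was saved.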

The economics make sense. DeepL's own Forrester study shows a 345% ROI for global enterprises using their advanced API [source](https://www.deepl.com/en/blog/forrester-tei-study-roi). For an SME, the setup takes 1-2 weeks of build time. Expect to spend £4k-£8k on the automation build, depending on how messy your current Shopify data is.

The main failure mode here is dirty source data. If your English descriptions have broken HTML tags to begin with, perhaps a missing closing </div> from a careless copy-paste job, the API may reject the request or return a translation with the tags in the wrong place.
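You can catch most of these before any text leaves your store. The following is a minimal sketch of a pre-flight check using Python's built-in HTML parser. It is deliberately strict and only looks at tag balance, so it will also flag HTML that browsers tolerate, such as list items without a closing tag. The class and function names are my own.

```python
from html.parser import HTMLParser

# Elements that never take a closing tag.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img",
             "input", "link", "meta", "source", "track", "wbr"}


class TagBalanceChecker(HTMLParser):
    """Tracks open tags and records closing tags that do not match."""

    def __init__(self):
        super().__init__()
        self.stack = []
        self.problems = []

    def handle_starttag(self, tag, attrs):
        if tag not in VOID_TAGS:
            self.stack.append(tag)

    def handle_endtag(self, tag):
        if tag in VOID_TAGS:
            return
        if self.stack and self.stack[-1] == tag:
            self.stack.pop()
        else:
            self.problems.append(f"unexpected </{tag}>")


def find_html_problems(html: str) -> list:
    """Return a list of tag-balance problems; empty means balanced."""
    checker = TagBalanceChecker()
    checker.feed(html)
    checker.close()
    return checker.problems + [f"unclosed <{tag}>" for tag in checker.stack]


print(find_html_problems("<div><p>Taped seams</p>"))  # ['unclosed <div>']
```

Any SKU that returns a non-empty list goes to a human for a source fix before it is queued for translation.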

You catch this by adding an error-handling route in Make. If DeepL returns an error for a request, such as a 400, Make routes that specific SKU to a Slack channel for human review, while the rest of the catalogue continues translating.
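The same routing logic, sketched in plain Python so you can see the shape of it. The `translate` and `notify` callables are stand-ins for the DeepL call and the Slack webhook post, and `TranslationError` is a hypothetical exception your own wrapper would raise on an error response.

```python
class TranslationError(Exception):
    """Raised by the translate callable when the API rejects a request."""


def slack_message(sku: str, reason: str) -> dict:
    """Build the JSON body for a Slack incoming webhook."""
    return {"text": f"Translation failed for SKU {sku}: {reason}"}


def translate_catalogue(products, translate, notify) -> dict:
    """Translate each (sku, html) pair; send failures to review and continue.

    Returns a dict of sku -> translated HTML for the successes only.
    """
    translated = {}
    for sku, html in products:
        try:
            translated[sku] = translate(html)
        except TranslationError as exc:
            # One bad SKU must not stop the rest of the batch.
            notify(slack_message(sku, str(exc)))
    return translated
```

The design choice that matters is catching the failure per SKU. A single try block around the whole batch would halt 4,000 products for one broken description.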

Where this breaks down

This DeepL pipeline is incredibly effective for expanding into France, Germany, Spain, and Italy. But it has limits.

If you are expanding into the Middle East or Asia, DeepL is not the right tool. DeepL focuses its training data heavily on European languages.

For Arabic, Japanese, or Korean, Google Cloud Translation API Advanced is the better engine. Google charges $20 per million characters and handles right-to-left formatting natively. It also supports over a hundred languages, whereas DeepL is much more selective.

This approach also breaks down on user-generated content. If you try to run thousands of customer reviews through a strict glossary and HTML parser, it fails.

Reviews are full of typos, slang, and emojis. DeepL tries to make them sound professional, which ruins the authentic tone of a review. Google Cloud Translation API is far better at handling raw, messy text without over-correcting it.
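In practice this becomes a small routing rule at the front of the pipeline. Here is one way to sketch it, following the split described above. The language list and the choice of Google as the fallback for every other language are assumptions you should adjust to your own testing.

```python
# Target languages this article recommends sending to DeepL.
DEEPL_TARGETS = {"FR", "DE", "ES", "IT"}


def choose_engine(target_lang: str, user_generated: bool = False) -> str:
    """Pick a translation engine for one piece of content."""
    if user_generated:
        # Reviews and other messy text go to Google regardless of language.
        return "google"
    if target_lang.upper() in DEEPL_TARGETS:
        return "deepl"
    # Assumed fallback: Google for anything outside the DeepL list.
    return "google"


print(choose_engine("de"))                       # deepl
print(choose_engine("ja"))                       # google
print(choose_engine("fr", user_generated=True))  # google
```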

Finally, if your source product data lives in a legacy ERP system that exports descriptions as flat text files with no structure, the API cannot save you. You have to structure your English data before you can automate your German data.

The question isn't whether you should translate your website. It is whether you are building a system that scales or a system that requires a human to check every single paragraph.

Pushing buttons in a free web tool feels like progress until you have to update your entire winter product line in three languages by tomorrow morning.

A properly configured API pipeline removes the friction from European expansion. It turns translation from a massive operational headache into a quiet background process. You stop worrying about broken bold tags and mistranslated brand names. You start focusing on the actual logistics of getting your products across the border.
