The Context Translation Gap and Why AI Coding Agents Fail

You are sitting in a Tuesday morning product meeting. Your ops manager is explaining that the new Shopify integration needs to handle split shipments, but only for EU customers, and only if the backorder delay is over three days.

She draws a flowchart on the whiteboard that makes perfect sense to the humans in the room. You watch your lead developer nod, already translating that messy, conditional business logic into strict database schemas and API payloads in his head.

Right now, your LinkedIn feed is flooded with videos of autonomous agents building Pong in ten minutes. The hype is deafening. But building a self-contained game in a vacuum is a parlour trick. Integrating a new shipping rule into a five-year-old custom ERP without breaking the finance team's month-end reporting is actual software engineering.

The context translation gap

The context translation gap is the invisible layer of work where messy business reality is converted into strict technical constraints. It's the hardest part of software engineering, and it's entirely invisible to anyone who doesn't write code for a living.

When a founder asks for a new feature, they are speaking in shorthand. They say they want a simple dashboard to track supplier delays. They don't specify how the system should handle a supplier who changes their name mid-month. They don't mention what happens to the historical data when a product SKU is retired. They assume the system will just know.

A human developer instinctively knows to ask those questions. They look at the existing PostgreSQL database, see the legacy constraints, and realise the simple dashboard requires a fundamental rewrite of the inventory table. They bridge the gap between human ambiguity and machine precision.

This translation process happens in every single sprint planning meeting across the UK. A sales rep asks for a button in Pipedrive to automatically generate a custom PDF proposal. The developer has to figure out what happens if the client has no registered address, or if the pricing tier includes a grandfathered discount code from 2022.

AI tools don't bridge this gap. They assume the prompt is complete. If you ask an AI to build a dashboard, it will write the code for exactly what you asked, ignoring the unstated edge cases that will inevitably crash the system on day three. The gap stays open, and nobody can see why until something breaks.
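To make that concrete, here is a minimal sketch of the split-shipment rule from the Tuesday meeting, written twice. The `Order` fields, the country list, and both function names are hypothetical; the point is only the difference between coding the sentence as spoken and coding for the records that sentence never mentioned.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical, deliberately incomplete list; a real one would live in config.
EU_COUNTRIES = {"DE", "FR", "IE", "NL", "ES", "IT"}


@dataclass
class Order:
    country: Optional[str]         # ISO code; may be missing on old records
    backorder_days: Optional[int]  # None when the supplier gave no date


def should_split_literal(order: Order) -> bool:
    """The rule exactly as stated: EU customer and delay over three days."""
    # A missing delay is quietly treated as zero, so the order is never split.
    return order.country in EU_COUNTRIES and (order.backorder_days or 0) > 3


def should_split_defensive(order: Order) -> str:
    """Same rule, but unknown inputs are surfaced instead of guessed."""
    if order.country is None or order.backorder_days is None:
        return "needs_review"
    if order.country in EU_COUNTRIES and order.backorder_days > 3:
        return "split"
    return "ship_together"
```

Both versions satisfy the prompt. Only the second one forces somebody to decide what happens when the supplier has not given a date.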

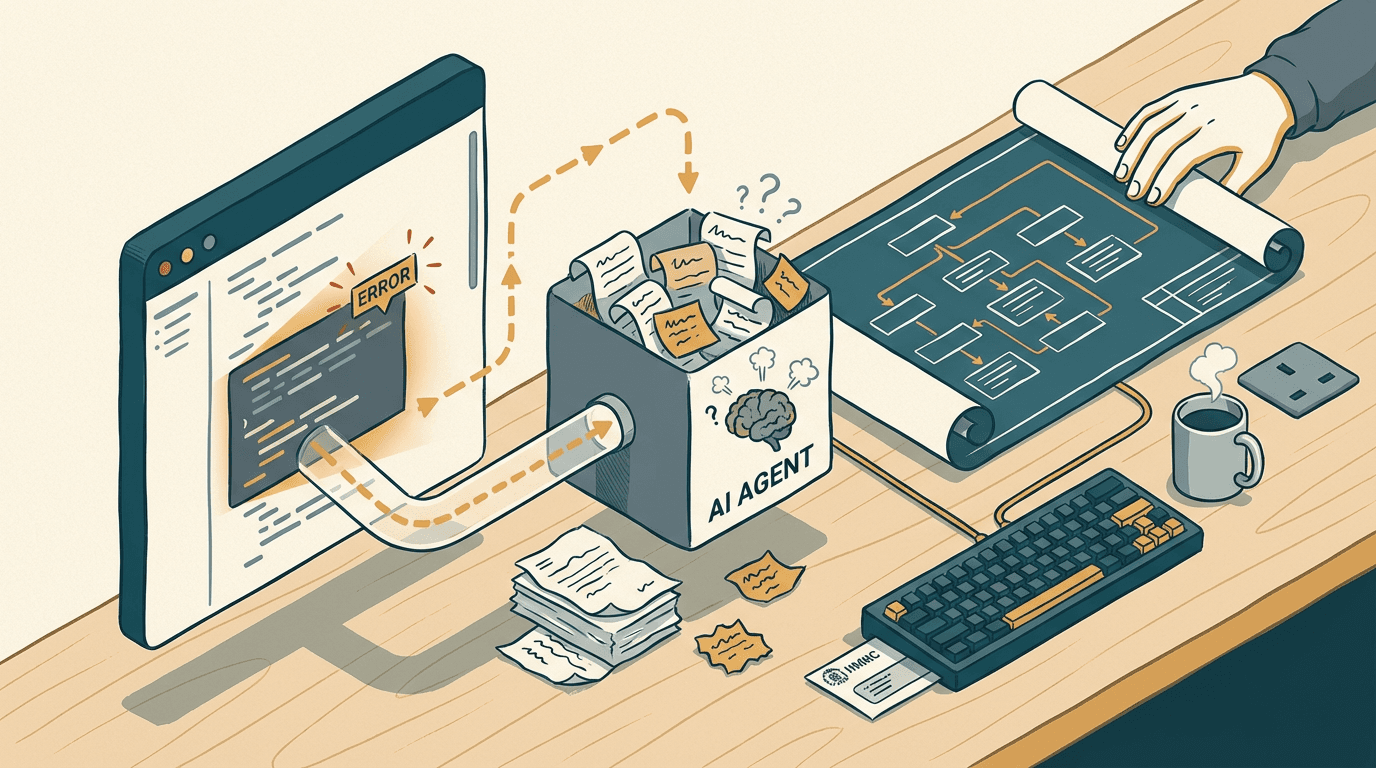

Why autonomous coding agents hit a wall

Autonomous coding agents hit a wall because they write exactly the code you ask for, ignoring the unstated business context that keeps your system running. The obvious fix most SMEs try is handing a raw product roadmap directly to an off-the-shelf SaaS agent, expecting it to behave like a senior engineer.

You've probably seen the headlines. A recent piece in PCMag highlighted how Cognition Labs' Devin AI can code websites by itself, train other AIs, and complete jobs on Upwork without human intervention [source](https://www.pcmag.com/news/this-software-engineer-ai-can-train-other-ais-code-websites-by-itself). The immediate reaction from founders is to assume they can fire their agency, cancel their junior developer hires, and just pay a monthly subscription for an AI worker.

Here is the contrarian truth: these autonomous agents don't fail because they write bad code. They fail because they write exactly the code you ask for.

When you point an agent at a codebase and ask it to add a new Stripe subscription tier, it will write the Python functions perfectly. But it lacks the context to know that your finance team relies on a custom webhook that triggers off the old tier names. The agent ships the code, the tests pass, and your month-end reconciliation silently breaks. And yes, that's annoying.
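A rough sketch of how that kind of silent break can look. Nothing here is Stripe's API: the event shape, the tier names, and the ledger codes are all invented for illustration. The first handler is the sort of legacy code an agent would never think to check; the second shows one way a human might make the failure loud.

```python
from typing import Optional

# Hypothetical mapping owned by finance, keyed on the ORIGINAL tier names.
LEDGER_CODES = {"starter": "4000", "pro": "4010"}


def finance_webhook(event: dict) -> Optional[str]:
    """Legacy handler: returns a ledger code, or None for unknown tiers."""
    # An unmapped tier falls through without raising, so nothing alerts anyone.
    return LEDGER_CODES.get(event["tier"])


def finance_webhook_strict(event: dict) -> str:
    """Defensive variant: an unmapped tier stops the pipeline loudly."""
    tier = event["tier"]
    if tier not in LEDGER_CODES:
        raise KeyError(f"No ledger code mapped for tier {tier!r}")
    return LEDGER_CODES[tier]
```

Add a `pro_plus` tier and every test for the new subscription code can still pass, because the handler that drops those events lives somewhere the agent was never told about.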

In my experience reviewing dozens of SME codebases, writing the syntax is only about 20% of the job. The other 80% is defensive architecture. It's knowing which parts of the legacy system are fragile. It's understanding why a specific API call was written a certain way three years ago to bypass a bug in a third-party logistics provider's system.

Because of the context translation gap, an AI agent treats every task as a greenfield project. It lacks the institutional memory required to navigate a mature, messy codebase. It will blindly overwrite a critical workaround because the workaround looks like inefficient code.

You save £35k on a junior salary, but you introduce a silent, structural risk that takes a senior developer three weeks to untangle when it finally snaps. The agent doesn't push back on bad ideas. It just executes them flawlessly, driving your architecture straight off a cliff.

The hybrid architecture approach

The hybrid architecture approach treats AI as a high-speed logic engine constrained by human-designed safety nets. You don't let the AI make structural decisions. You let it execute specific, tightly bounded tasks within a system designed by a human engineer.

Take a worked example: processing complex supplier invoices.

Instead of asking an AI agent to build the whole system, your human developer designs the pipeline. They set up an n8n webhook to catch incoming emails from Outlook. The webhook strips the PDF attachment and sends it to a Claude API endpoint.

Pay attention to this part. The developer doesn't just ask Claude to extract the data. They pass a strict JSON schema in the API call, forcing Claude to return exactly the fields Xero needs: supplier name, line item descriptions, tax codes, and amounts.
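As a sketch, the schema might look something like the following. The field names, the tool name `record_invoice`, and the choice to allow a null tax code are assumptions for illustration, not a prescribed format. The `name` / `description` / `input_schema` shape follows Anthropic's tool-definition format as I understand it; check the current API documentation before relying on it.

```python
import json

# Hypothetical schema covering the four fields named above.
INVOICE_SCHEMA = {
    "type": "object",
    "properties": {
        "supplier_name": {"type": "string"},
        "line_items": {
            "type": "array",
            "minItems": 1,
            "items": {
                "type": "object",
                "properties": {
                    "description": {"type": "string"},
                    # Null is allowed so the model can say "not found"
                    # instead of inventing a plausible-looking code.
                    "tax_code": {"type": ["string", "null"]},
                    "amount": {"type": "number"},
                },
                "required": ["description", "tax_code", "amount"],
                "additionalProperties": False,
            },
        },
    },
    "required": ["supplier_name", "line_items"],
    "additionalProperties": False,
}

# One way to pass the schema: as the input schema of a single tool.
EXTRACTION_TOOL = {
    "name": "record_invoice",
    "description": "Record the fields extracted from one supplier invoice.",
    "input_schema": INVOICE_SCHEMA,
}

if __name__ == "__main__":
    print(json.dumps(EXTRACTION_TOOL, indent=2))
```

The schema narrows what the model is asked to return. It is not a guarantee, which is why the output still needs checking before anything is written.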

The human developer writes the logic that says: if the JSON payload is missing a tax code, don't guess. Route it to a Slack channel for human review. If the payload is perfect, the n8n workflow writes the invoice line items to Xero via the Xero API.
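In n8n this would normally be an IF node, but the decision itself is easier to read as code. A minimal sketch, assuming the payload is a dict with a `line_items` list; `write_to_xero` and `send_to_review` are hypothetical callables standing in for the Xero and Slack steps.

```python
from typing import Callable, List


def _has_tax_code(item: dict) -> bool:
    """True only for a non-blank string; None, "" and wrong types all fail."""
    code = item.get("tax_code")
    return isinstance(code, str) and bool(code.strip())


def route_invoice(
    payload: dict,
    write_to_xero: Callable[[dict], None],
    send_to_review: Callable[[dict, str], None],
) -> str:
    """Send a parsed invoice to the ledger or to a human, never guessing."""
    items = payload.get("line_items") or []
    if not items:
        send_to_review(payload, "no line items extracted")
        return "review"

    # 1-based line numbers where the tax code is absent, null, or blank.
    missing: List[int] = [
        n for n, item in enumerate(items, start=1) if not _has_tax_code(item)
    ]
    if missing:
        send_to_review(payload, f"missing tax code on line(s) {missing}")
        return "review"

    write_to_xero(payload)
    return "written"
```

The design choice worth noticing is that the function has no branch that fills in a default tax code. The only two exits are a clean write or a human.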

The AI is doing the heavy lifting of unstructured data extraction, but the human engineer owns the routing, the error handling, and the database writes. They dictate the exact shape the data must take before it is allowed to touch the core financial system.

Building this hybrid pipeline usually takes 2-3 weeks of build time, costing roughly £6k-£12k depending on how messy your existing integrations are.

The known failure mode here is hallucinated schema changes. Sometimes, an LLM will decide to return a string instead of an integer for a price column. If you let that hit your database, the write fails, or worse, it succeeds and corrupts the column.

You catch this by putting a strict validation layer between the AI output and the database write. If the validation fails, the webhook skips the write and flags the error. The human developer builds the safety net; the AI just performs the trick.
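A minimal sketch of such a validation layer, using only the standard library. The field names and the `write` / `flag` callables are hypothetical; in practice many teams would reach for a schema-validation library instead, but the principle is the same: check types strictly and never coerce.

```python
from typing import Callable, List


def validate_line_item(item: dict) -> List[str]:
    """Return a list of problems with one line item; empty means valid."""
    errors = []
    for field in ("description", "tax_code"):
        value = item.get(field)
        if not isinstance(value, str) or not value.strip():
            errors.append(f"{field} must be a non-empty string")
    amount = item.get("amount")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(amount, bool) or not isinstance(amount, (int, float)):
        errors.append(f"amount must be a number, got {type(amount).__name__}")
    return errors


def guarded_write(
    payload: dict,
    write: Callable[[dict], None],
    flag: Callable[[dict, List[str]], None],
) -> bool:
    """Write only if every line item validates; otherwise skip and flag."""
    items = payload.get("line_items") or []
    errors = [] if items else ["no line items"]
    for n, item in enumerate(items, start=1):
        errors += [f"line {n}: {e}" for e in validate_line_item(item)]
    if errors:
        flag(payload, errors)  # nothing reaches the database
        return False
    write(payload)
    return True
```

Note that `"12.50"` is rejected rather than converted. Silent coercion is exactly how a model's formatting whim ends up in a finance report.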

Where the hybrid model breaks down

The hybrid model breaks down when you try to layer modern AI extraction over fundamentally broken legacy processes or dirty data. This approach is powerful, but it requires a baseline of digital maturity. You need to know exactly where the boundaries of your system lie before you start plumbing AI into it.

If your core business logic lives in a 15-year-old on-premise ERP that only accepts SOAP XML requests, adding an AI extraction layer is going to cause more pain than it solves. The modern API tools will constantly time out trying to talk to the legacy server. You will spend more time debugging network protocols than writing useful business logic.

Similarly, if your inputs are fundamentally broken, the AI will confidently generate broken outputs. If your invoices come in as scanned TIFFs from a legacy accounting system, you need a dedicated OCR layer first. If you skip that and feed blurry images directly to a vision model, the error rate jumps from 1% to around 12%.

You have to check your data hygiene before committing to a build. If your human team can't agree on the standard operating procedure for handling a split shipment, an AI model can't automate it. The AI doesn't fix bad processes. It just executes them at light speed. If the underlying logic is flawed, the automation will only scale the chaos.

The question isn't whether AI will eventually write better Python than your lead developer. It already does. The question is whether an autonomous agent knows that your operations manager relies on a weirdly formatted CSV export every afternoon, and that changing the database schema to be "more efficient" will completely break her workflow.

Software engineering isn't about typing code into a terminal. It's about mapping human intent onto rigid digital structures without breaking the things that already work. You still need human developers because somebody has to hold the context. Somebody has to know where the bodies are buried in the legacy codebase.

You can use AI to write the boilerplate, parse the messy inputs, and speed up the build cycle. But once you hand over the architectural keys to a bot, you aren't building a business system. You are just waiting for the inevitable silent failure.
