Fixing the synthetic content tax after Google's March core update

You open Google Analytics and the traffic chart looks like a cliff edge. Google's March 2024 core update rolled out, and suddenly your top-performing pages vanished from the search results. For the last six months, your marketing team had been feeding ChatGPT a list of keywords, generating endless articles, and publishing them directly to your company blog. It felt like a cheat code. You were shipping content at ten times the speed for a fraction of the cost.

Now, organic leads have dried up. The phone isn't ringing. You are staring at the dashboard wondering if Google has blacklisted your domain forever. The quick SEO wins you celebrated in January are actively destroying your business in April. Here is what actually happens when you try to automate your way to the top of the search results, and how to fix the damage.

The synthetic content tax

The synthetic content tax is the lasting algorithmic penalty your domain suffers when you publish mass-produced, unedited AI articles. Google's March 2024 core update arrived alongside new spam policies aimed squarely at scaled content abuse: publishing large volumes of unoriginal pages primarily to manipulate search rankings (https://developers.google.com/search/blog/2024/03/core-update-spam-policies). The policy applies however the content is made. AI simply made that kind of spam cheaper than ever to produce.

SMEs thought they were outsmarting the system. They used off-the-shelf tools to spin up hundreds of blog posts targeting niche long-tail keywords. The logic made sense on paper. More pages equal more chances to rank. You blanket the internet with your service pages and wait for the traffic to roll in.

But Google's algorithms adapted. They do not just look at keywords anymore. They try to judge whether a page is genuinely useful: how searchers respond to it, and how much new information it adds beyond the pages already ranking (often called information gain). When your article on commercial plumbing regulations reads exactly like the top three results but with more adjectives, it offers zero new value. It is digital pollution.

The penalty is severe. Some sites lost 80% to 100% of their organic traffic overnight (https://seo.ai/blog/google-march-2024-core-spam-update-is-heavily-affecting-some-websites). Once you trigger this algorithmic demotion, recovering your previous rankings takes months of painful cleanup. You cannot just delete the worst posts and expect your traffic to bounce back the next day. The trust in your domain is broken.

Why rewriting tools fail

Rewriting AI text with paraphrasing tools fails because search algorithms penalise the absence of original insight, not the choice of vocabulary. The immediate reaction to a traffic drop is panic. The second reaction is looking for a technical loophole.

In my experience reviewing Google Search Console data for B2B firms, the pattern is always the same. Marketing teams scramble to run their existing AI content through tools like Undetectable AI or QuillBot. They prompt ChatGPT to write with more burstiness and perplexity. They think the problem is that Google detected a specific robotic phrasing.

That is a fundamental misunderstanding of how the algorithm works. Google does not care whether you use the word "delve" or "tapestry". They care that your 2,000-word guide on R&D tax credits is a generic summary of the HMRC website with no unique data, no expert quotes, and no practical examples.

The standard SME marketing playbook breaks down here. You hire a junior marketing assistant. You give them a £20 ChatGPT Plus subscription. You tell them to write three blog posts a week. They prompt the AI, copy the output, maybe change a few headers, and hit publish.

This creates a negative feedback loop. The junior team member lacks the domain expertise to know when the AI is hallucinating or stating the obvious. The resulting content is structurally identical to every other lazy competitor in your space. You are polluting your own site architecture.

The contrarian truth is that AI cannot generate thought leadership on its own. A large language model predicts the most statistically probable next word based on its training data, so its unedited output gravitates towards the average of what already exists. If your SEO strategy relies on publishing average thoughts, you will lose.

Capturing raw internal knowledge

Capturing raw internal knowledge and structuring it with automation is the only reliable way to produce content that ranks. You cannot replace a £40k marketing manager with a £20 ChatGPT subscription. But you can use automation to scale the reach of your actual experts.

The goal is to extract the deep, hard-won knowledge from your MD, your lead engineer, or your head of sales, and turn that into content Google actually wants to rank. This is how you build a system that survives algorithm updates.

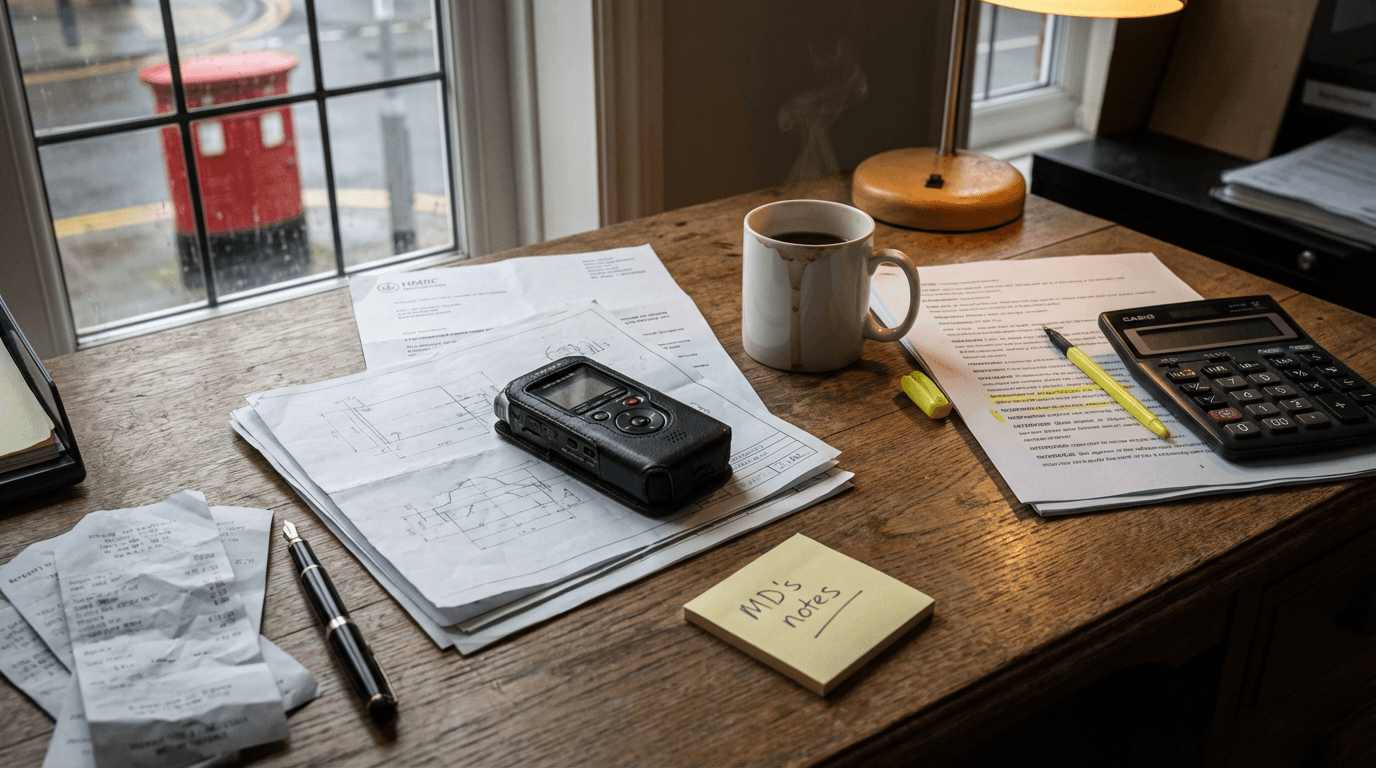

You start with the raw input. Take a standard IT managed service provider. When your lead engineer solves a complex Azure Active Directory (now Microsoft Entra ID) sync issue for a client, they record a three-minute voice note on their phone detailing the exact fix. They send that audio file to a dedicated Slack channel.

This is where the automation takes over. You use Make to watch that specific Slack channel. When a new audio file lands, Make downloads it and sends it to the OpenAI Whisper API for transcription.
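Make handles this step without any code. For readers who want to see roughly what the scenario does under the hood, here is a stdlib-only Python sketch. It assumes a Slack bot token that can read files, relies on the Slack file object's `mimetype` and `url_private_download` fields, and targets OpenAI's `/v1/audio/transcriptions` endpoint with the `whisper-1` model. The function names are illustrative, and the functions only build requests; nothing is sent until you pass one to `urllib.request.urlopen`.

```python
import urllib.request
import uuid

WHISPER_URL = "https://api.openai.com/v1/audio/transcriptions"
# OpenAI documents a 25 MB upload limit; approximated here as 25 MiB.
MAX_UPLOAD_BYTES = 25 * 1024 * 1024


def is_voice_note(slack_file: dict) -> bool:
    """True if a Slack file object (from a message's "files" list) is audio."""
    return slack_file.get("mimetype", "").startswith("audio/")


def build_slack_download(slack_file: dict, bot_token: str) -> urllib.request.Request:
    """Private Slack files need the bot token in the Authorization header."""
    return urllib.request.Request(
        slack_file["url_private_download"],
        headers={"Authorization": f"Bearer {bot_token}"},
    )


def build_whisper_request(audio: bytes, filename: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a multipart/form-data upload to the Whisper endpoint."""
    if len(audio) > MAX_UPLOAD_BYTES:
        raise ValueError("audio file is over the upload limit")
    boundary = uuid.uuid4().hex
    body = b"".join([
        f"--{boundary}\r\n".encode(),
        b'Content-Disposition: form-data; name="model"\r\n\r\n',
        b"whisper-1\r\n",
        f"--{boundary}\r\n".encode(),
        f'Content-Disposition: form-data; name="file"; filename="{filename}"\r\n'.encode(),
        b"Content-Type: application/octet-stream\r\n\r\n",
        audio,
        b"\r\n",
        f"--{boundary}--\r\n".encode(),
    ])
    return urllib.request.Request(
        WHISPER_URL,
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": f"multipart/form-data; boundary={boundary}",
        },
        method="POST",
    )
```

Sending the Whisper request returns JSON whose `text` field is the raw transcript that the next step works on.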

The raw transcript is a mess. It is full of jargon, pauses, and half-finished thoughts. Make then passes that text to the Claude API. You use a strict system prompt. You tell Claude to act as a technical editor. You instruct it to extract the core problem, the specific mechanism of the solution, and the business impact. You tell it to ignore the small talk and forbid it from adding anything the speaker did not say.
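A minimal sketch of that Claude call, again in Python with only the standard library. The system prompt wording, the `MODEL` ID and the `max_tokens` value are assumptions to adapt; the endpoint, the `x-api-key` and `anthropic-version` headers, and the `system`/`messages` body follow Anthropic's Messages API.

```python
import json
import urllib.request

ANTHROPIC_URL = "https://api.anthropic.com/v1/messages"
MODEL = "claude-3-5-sonnet-latest"  # assumption: replace with a model ID available to your account

# Hypothetical system prompt; tune the wording on real transcripts.
SYSTEM_PROMPT = (
    "You are a technical editor. The user message is a raw voice-note transcript "
    "from one of our engineers. Extract only what the speaker actually said: "
    "1) the core problem, 2) the specific mechanism of the fix, 3) the business impact. "
    "Ignore small talk and filler. Do not add facts, product names or figures that "
    "are not in the transcript. Reply with a single JSON object with the keys "
    '"title", "executive_summary" and "body", where "body" is a list of exactly '
    "three paragraphs. Output nothing except the JSON."
)


def build_claude_request(transcript: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a Messages API request for one transcript."""
    payload = {
        "model": MODEL,
        "max_tokens": 2000,
        "system": SYSTEM_PROMPT,
        "messages": [{"role": "user", "content": transcript}],
    }
    return urllib.request.Request(
        ANTHROPIC_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
        method="POST",
    )


def response_text(response: dict) -> str:
    """Join the text blocks of a parsed Messages API response."""
    return "".join(b["text"] for b in response["content"] if b.get("type") == "text")
```

Putting the editing rules in the system prompt, rather than in the user message, keeps the transcript itself untouched and makes the same instructions apply to every voice note.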

Crucially, you demand the output as a structured JSON object containing a title, an executive summary, and three detailed body paragraphs. Make receives that JSON payload and creates a draft post from it in your WordPress CMS. It then sends a Slack notification back to the marketing team with a link to the draft.
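The JSON contract is what makes the hand-off to WordPress safe. The sketch below (hypothetical function names, standard library only) rejects malformed model output, then builds a request to the WordPress REST API's `/wp-json/wp/v2/posts` endpoint with `status` set to `draft`, authenticated with a WordPress application password, plus a Slack incoming-webhook message linking to the draft's edit screen. It assumes your site exposes the REST API and that you have created an incoming webhook for the marketing channel.

```python
import base64
import html
import json
import urllib.request

REQUIRED_KEYS = {"title", "executive_summary", "body"}


def parse_article(model_text: str) -> dict:
    """Reject anything that is not the JSON shape the system prompt asked for."""
    article = json.loads(model_text)
    if not isinstance(article, dict):
        raise ValueError("expected a JSON object")
    missing = REQUIRED_KEYS - article.keys()
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    if not isinstance(article["body"], list) or len(article["body"]) != 3:
        raise ValueError("body must be a list of exactly three paragraphs")
    return article


def build_wordpress_draft(article: dict, site: str, user: str, app_password: str) -> urllib.request.Request:
    """Build a POST to the WordPress posts endpoint that creates a draft, never a live post."""
    content = "".join(f"<p>{html.escape(p)}</p>" for p in article["body"])
    payload = {
        "title": article["title"],
        "excerpt": article["executive_summary"],
        "content": content,
        "status": "draft",  # a human publishes after review
    }
    token = base64.b64encode(f"{user}:{app_password}".encode()).decode()
    return urllib.request.Request(
        f"{site.rstrip('/')}/wp-json/wp/v2/posts",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Authorization": f"Basic {token}", "Content-Type": "application/json"},
        method="POST",
    )


def build_slack_notification(webhook_url: str, site: str, post_id: int, title: str) -> urllib.request.Request:
    """Incoming-webhook message pointing the marketing team at the new draft."""
    edit_link = f"{site.rstrip('/')}/wp-admin/post.php?post={post_id}&action=edit"
    message = {"text": f"New expert draft ready for review: {title}\n{edit_link}"}
    return urllib.request.Request(
        webhook_url,
        data=json.dumps(message).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

The `post_id` for the Slack message comes from the `id` field in WordPress's response to the draft request. Validating before posting means a malformed reply from the model fails loudly instead of landing in the CMS as a broken draft.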

The marketing team now has a highly specific, expert-led article draft. It contains real-world examples. It has proprietary data. It just needs a human polish, formatting, and publishing.

Building this pipeline takes roughly two to three weeks. The software costs are negligible. Make is £15 a month, and API calls are pennies. The real cost is the setup time, usually around £4k to £8k if you hire a specialist to build the webhooks and prompt chains properly. The result is content that search engines reward because it is genuinely unique.
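To sanity-check the "pennies" claim, here is the arithmetic for one voice note. The Whisper rate is OpenAI's published $0.006 per minute for `whisper-1`; the Claude per-token rates and the token counts are assumptions for a Sonnet-class model and a three-minute note, so check current pricing before quoting these numbers to anyone.

```python
# Assumed list prices in USD; check current pricing pages before relying on them.
WHISPER_USD_PER_MINUTE = 0.006
CLAUDE_USD_PER_M_INPUT = 3.0    # assumption: a Sonnet-class model
CLAUDE_USD_PER_M_OUTPUT = 15.0  # assumption: a Sonnet-class model


def cost_per_note(audio_minutes: float, input_tokens: int, output_tokens: int) -> float:
    """API cost in USD for one voice note: transcription plus one Claude call."""
    transcription = audio_minutes * WHISPER_USD_PER_MINUTE
    drafting = (input_tokens * CLAUDE_USD_PER_M_INPUT
                + output_tokens * CLAUDE_USD_PER_M_OUTPUT) / 1_000_000
    return transcription + drafting


# Assumed: a three-minute note, ~1,000 tokens in (system prompt + transcript), ~800 out.
per_note = cost_per_note(3, 1_000, 800)
per_month = per_note * 12  # three notes a week, roughly twelve a month
print(f"per note: ${per_note:.3f}, per month: ${per_month:.2f}")
```

On those assumptions a note costs a few US cents and a month of notes costs well under a pound, so the Make subscription and the one-off setup dominate the budget.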

Where the capture process breaks

The knowledge capture process fails entirely if your senior staff experience any friction when recording their insights. You can build the most elegant automation in the world. It means nothing if your team refuses to use it.

If you ask an operations director to log into a Notion portal, fill out a brief, and upload a specific file type, the system dies on day one. They are too busy. The input mechanism has to live where they already work. A quick Slack voice note or an email forward is the absolute limit of acceptable friction.

Audio quality is another hard limit. If your field engineers are recording voice notes on a windy building site or a noisy factory floor, Whisper's accuracy collapses. The transcription error rate can jump from around 1% to over 15%. When Claude receives a garbled transcript, it fills the gaps by hallucinating technical details. You end up publishing factually incorrect engineering advice. You need a relatively clean audio environment for the transcription step to work.
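One practical guard is to stop garbled transcripts before they ever reach Claude. If you request `response_format="verbose_json"`, Whisper returns per-segment `avg_logprob` and `compression_ratio` values. The sketch below borrows the fallback thresholds used by the open-source `whisper` package (-1.0 and 2.4) as a rough proxy for "Whisper was guessing here". They are not a calibrated word error rate, and the 20% cut-off is an assumption to tune against your own recordings.

```python
# Thresholds borrowed from the open-source whisper package's transcribe() defaults,
# where they trigger a retry; here they are repurposed as a rough quality signal.
LOGPROB_FLOOR = -1.0
MAX_COMPRESSION_RATIO = 2.4


def weak_segments(segments: list[dict]) -> list[dict]:
    """Segments Whisper was unsure about, or that look like repetition loops."""
    return [
        s for s in segments
        if s["avg_logprob"] < LOGPROB_FLOOR or s["compression_ratio"] > MAX_COMPRESSION_RATIO
    ]


def safe_to_draft(transcription: dict, max_weak_share: float = 0.2) -> bool:
    """Send to Claude only if most of the transcript looks clean; otherwise ask for a re-record."""
    segments = transcription.get("segments", [])
    if not segments:
        return False  # no segment data, so no way to judge quality
    return len(weak_segments(segments)) / len(segments) <= max_weak_share
```

When `safe_to_draft` returns `False`, the cheapest fix is usually a Slack reply asking the engineer to re-record somewhere quieter, rather than letting the model guess.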

Finally, this fails if your business lacks actual differentiation. If you sell generic white-label products, your team does not have unique insights to share. You cannot extract expertise that does not exist.

What to do now

You need to clean up your site architecture and start extracting real insights. Stop running your existing spam through rewriting tools. It is a waste of time. Here is how you start fixing the damage today.

- Audit your indexed pages in Google Search Console. Open the Performance report, compare a date range before March 2024 with one after it, and export the Pages table. Flag every page that lost more than 50% of its clicks. These are the pages Google most likely now treats as low value.

- Consolidate or delete the weak content. If you have ten generic AI articles about different types of commercial boilers, merge them into one comprehensive, human-edited guide. Set up 301 redirects for the deleted URLs. You need to show search engines you are actively removing the clutter.

- Set up a dedicated knowledge capture channel. Open Slack or Microsoft Teams and create a channel called Raw Insights. Instruct your senior staff to drop a two-minute voice note in there every time they solve a difficult client problem. Do not ask them to write anything down. Just capture the audio.

- Manually test the extraction process. Before paying for a complex Make integration, download one of those voice notes. Run it through a free transcription tool. Paste the text into Claude with a prompt asking for a structured blog outline. If the output is better than what your junior marketer writes, you are ready to automate the pipeline.
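The first two steps in the list above can be partly scripted. This sketch assumes you used the Performance report's date comparison and exported the Pages table to CSV; the column names depend on the ranges you compare, so they are passed in rather than hard-coded. The redirect helper assumes the site sits behind nginx and emits exact-match `location =` rules; on Apache or a WordPress redirect plugin the same old-to-new mapping applies, just in a different syntax. Function names and thresholds are illustrative.

```python
import csv
import io


def pages_with_big_drops(csv_text: str, page_col: str, before_col: str, after_col: str,
                         min_drop: float = 0.5, min_before_clicks: int = 20) -> list[tuple]:
    """Pages whose clicks fell by more than min_drop between two exported date ranges."""
    flagged = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        before = int(row[before_col].replace(",", ""))
        after = int(row[after_col].replace(",", ""))
        if before < min_before_clicks:
            continue  # too little traffic to tell a real drop from noise
        drop = (before - after) / before
        if drop > min_drop:
            flagged.append((row[page_col], before, after, round(drop, 2)))
    return sorted(flagged, key=lambda r: r[3], reverse=True)  # worst losses first


def nginx_redirects(mapping: dict[str, str]) -> list[str]:
    """Exact-match 301 rules from each deleted URL path to the consolidated guide's path."""
    rules = []
    for old, new in sorted(mapping.items()):
        if old == new:
            raise ValueError(f"{old} redirects to itself")
        if new in mapping:
            raise ValueError(f"{old} -> {new} -> {mapping[new]} is a redirect chain")
        rules.append(f"location = {old} {{ return 301 {new}; }}")
    return rules
```

Refusing redirect chains keeps every old URL one hop away from its replacement, which is the cleanest signal you can send that the consolidation is deliberate.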

Stop paying the synthetic content tax. Build a system that scales your actual expertise.
