Eliminating the Unstructured Data Tax with Vision-First Extraction Pipelines

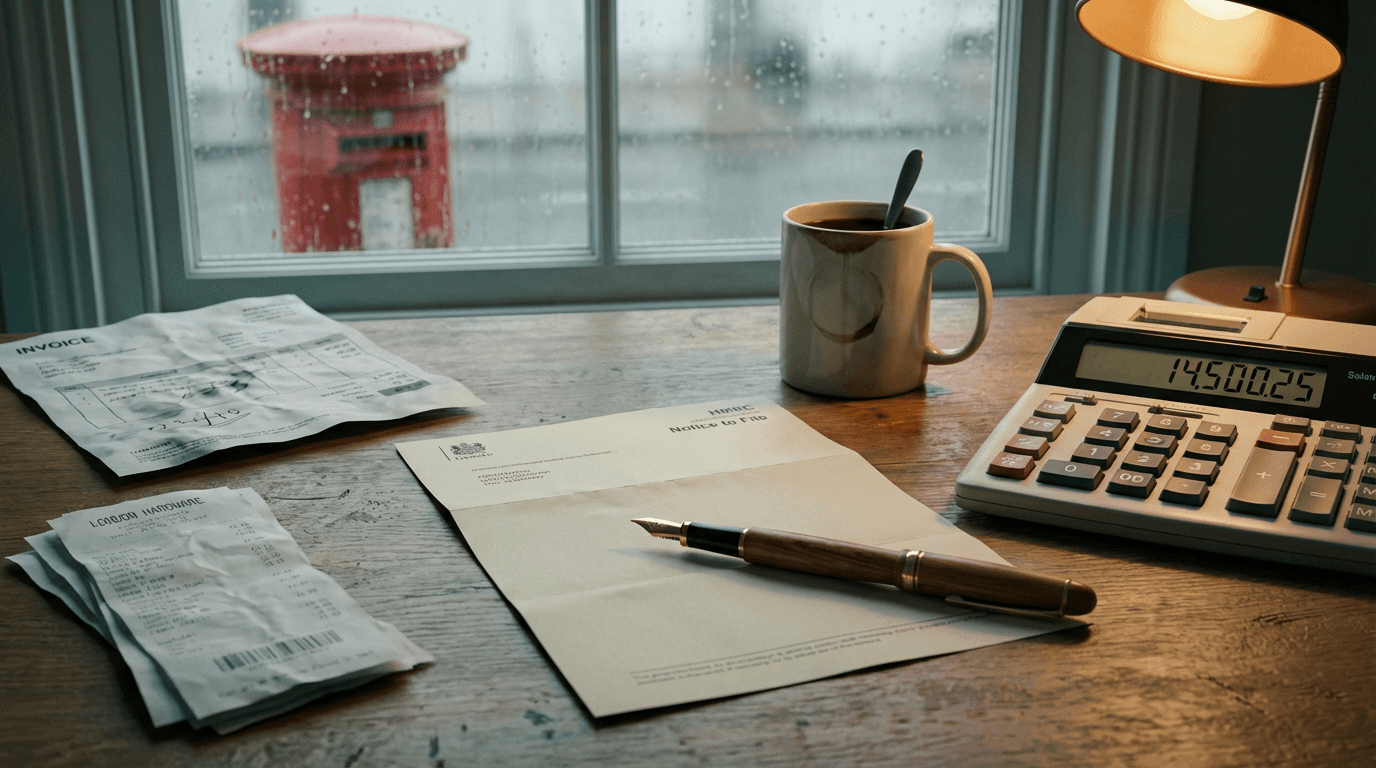

You walk into the finance office on a rainy afternoon. The accounts assistant has a 14-page PDF from a logistics supplier open on the left monitor. On the right, Xero. They are manually typing in 42 separate line items. The supplier changed their invoice layout last month. The tables now span across page breaks. The legacy OCR software, which costs £400 a month, just spits out a block of unformatted text. So a human being, paid £30,000 a year, is doing data entry. It is a slow, expensive leak of human potential.

You see this in almost every business moving physical goods or managing complex supply chains. Paperwork is the friction that slows everything down. It's a mess, and most teams have stopped asking why they accept it.

The unstructured data tax

The unstructured data tax is the hidden payroll cost of humans manually translating messy, unpredictable documents into structured database fields. It happens every time an invoice, a customs declaration, or a handwritten delivery note arrives in a format your software cannot read.

Most businesses accept this as a cost of doing business. They buy tools like Dext, Hubdoc, or AutoEntry. Those tools work perfectly for standard receipts and simple utility bills.

Once a supplier adds a custom column, or writes a lot number in pen across the margin, the system chokes. The software flags it for human review. The human opens the file, finds the missing text, and types it into Xero or QuickBooks.

You pay for software to automate the work, and then pay a human to finish it. And yes, that's annoying.

This tax scales linearly with your growth. Double your order volume, and you double the number of exceptions. You end up hiring junior staff just to feed the machine. It is a structural bottleneck that prevents a £5M business from scaling to £10M without bloating the back office.

The unstructured data tax forces your most capable people to act like human routers. They stop doing financial analysis. They stop chasing bad debt. They just move text from a PDF into a web form. It is the most common operational ceiling SMEs hit.

Why the chatbot approach fails

Replacing legacy OCR with basic ChatGPT subscriptions fails because consumer AI interfaces cannot reliably map complex document structures into rigid accounting software fields. The obvious fix is usually a knee-jerk reaction. A founder sees a demo of ChatGPT extracting text from an image and buys 10 seats for the team.

Or they try to build a Zapier flow. They route Gmail attachments to a basic text extractor, push the text to OpenAI, and try to create a Xero invoice.

I see this pattern constantly. It works beautifully on the first test with a clean, single-page invoice. It fails catastrophically in production when reality hits.

Here is the exact mechanism of failure. Zapier's native text extraction strips out spatial relationships. When you feed a complex PDF to a basic parser, it reads left to right, top to bottom. It loses the table structure entirely. It can't tell if a number belongs to the 'Unit Price' column or the 'Quantity' column.

The LLM receives a wall of jumbled text and guesses. Sometimes it guesses wrong: it might map a £500 unit price as a quantity of 500. Zapier's standard integration steps also often fail to handle nested JSON objects. So when Xero requires a line item assigned to a tracking category two levels deep, the automation silently writes a null value.
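Here is a minimal Python sketch of that failure. It assumes a simplified parser that emits each row's non-empty cells left to right. The row data and the `flatten_row` helper are invented for illustration and are not Zapier's actual extractor.

```python
# Two table rows that differ only in WHICH column holds the number.
COLUMNS = ["Description", "Quantity", "Unit Price"]

row_price = {"Description": "Pallet wrap", "Quantity": "", "Unit Price": "500"}
row_qty = {"Description": "Pallet wrap", "Quantity": "500", "Unit Price": ""}


def flatten_row(row: dict[str, str]) -> str:
    """Mimic a layout-blind text extractor: emit cell text left to right,
    skipping empty cells, with no record of column positions."""
    return " ".join(row[col] for col in COLUMNS if row[col])


flat_price = flatten_row(row_price)
flat_qty = flatten_row(row_qty)

# Both rows collapse to the same string, so the column information is gone.
print(flat_price)
print(flat_qty)
print(flat_price == flat_qty)
```

Once the two rows are identical text, no prompt can recover which column the 500 came from. The model can only guess.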

Zapier pushes the incorrect value into Xero. You only notice the error at month-end reconciliation. You have traded a slow manual process for a fast, automated disaster.
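The silent null is avoidable if every nested lookup is made to fail loudly. The sketch below shows the idea in Python rather than inside Zapier. The payload shape, the `lenient_get` and `require_path` helpers, and the field names are hypothetical, not Xero's or Zapier's real format.

```python
from typing import Any


def lenient_get(payload: dict, path: list[str]) -> Any:
    """What a forgiving mapper effectively does: return None on any miss."""
    current: Any = payload
    for key in path:
        if not isinstance(current, dict) or key not in current:
            return None
        current = current[key]
    return current


def require_path(payload: dict, path: list[str]) -> Any:
    """Strict version: raise and name the missing key instead of returning None."""
    current: Any = payload
    for depth, key in enumerate(path):
        if not isinstance(current, dict) or key not in current:
            missing = ".".join(path[: depth + 1])
            raise KeyError(f"missing field: {missing}")
        current = current[key]
    return current


# Hypothetical line item where the nested tracking option never arrived.
line_item = {"Description": "Freight", "Tracking": {"Category": {}}}
path = ["Tracking", "Category", "Option"]

print(lenient_get(line_item, path))  # the null that slips through unnoticed

try:
    require_path(line_item, path)
except KeyError as err:
    print(err)  # the same gap, surfaced before anything is written
```

The strict version turns a month-end surprise into an immediate, named error that a workflow can route to a person.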

A £25/month ChatGPT subscription cannot replace a £35k salary, and here's the mechanism: chatbots require human prompting for every single file. If your staff have to download a PDF from Outlook, upload it to a chat window, copy the output, and paste it into Xero, you haven't automated anything. You have just added more steps to the unstructured data tax.

The vision-first extraction pipeline

The reliable way to process messy paperwork is to bypass traditional text extraction entirely and use Anthropic's Claude 3 vision models to read documents directly as images, forcing the output into a strict JSON schema. This is not OCR. This is visual reasoning.

Anthropic's launch announcement for the Claude 3 family (Haiku, Sonnet and Opus) describes sophisticated vision capabilities across photos, charts, graphs and technical diagrams. In practice, the models don't just read text. They interpret layout, borders, and handwritten notes.

Here is what an actual production build looks like. An email lands in a shared Outlook inbox with the subject line 'Invoice 4092 - Freight Charges'. It contains a PDF attachment from a difficult logistics supplier. An n8n webhook triggers. It doesn't try to parse the PDF text. Instead, it converts the PDF pages into high-resolution images.

n8n sends those images to the Claude 3 API via a prompt that includes a strict JSON schema. You tell Claude exactly what fields you need: InvoiceNumber, Date, TotalAmount, and an array of LineItems containing Description, Quantity, and TaxRate. You instruct it to return only valid JSON.
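The request can be sketched in Python, outside n8n, as follows. The field names come from the list above, but the JSON types, the `build_request_body` helper, the prompt wording and the example model ID are assumptions. The body follows the general shape of Anthropic's Messages API (image blocks followed by a text block), and the sketch stops short of sending it.

```python
import base64
import json

# Field names are from the article; the types are assumed for illustration.
INVOICE_SCHEMA = {
    "type": "object",
    "required": ["InvoiceNumber", "Date", "TotalAmount", "LineItems"],
    "properties": {
        "InvoiceNumber": {"type": "string"},
        "Date": {"type": "string", "description": "ISO 8601, YYYY-MM-DD"},
        "TotalAmount": {"type": "number"},
        "LineItems": {
            "type": "array",
            "items": {
                "type": "object",
                "required": ["Description", "Quantity", "TaxRate"],
                "properties": {
                    "Description": {"type": "string"},
                    "Quantity": {"type": "number"},
                    "TaxRate": {"type": "number"},
                },
            },
        },
    },
}


def build_request_body(page_pngs: list[bytes], model: str) -> dict:
    """Build a request body: one base64 image block per page, then the prompt."""
    content = [
        {
            "type": "image",
            "source": {
                "type": "base64",
                "media_type": "image/png",
                "data": base64.b64encode(png).decode("ascii"),
            },
        }
        for png in page_pngs
    ]
    content.append(
        {
            "type": "text",
            "text": (
                "Extract this invoice. Return only valid JSON matching "
                "this schema, with no commentary:\n" + json.dumps(INVOICE_SCHEMA)
            ),
        }
    )
    return {
        "model": model,
        "max_tokens": 4096,
        "messages": [{"role": "user", "content": content}],
    }


# Placeholder bytes stand in for real rendered page images.
body = build_request_body([b"page-1", b"page-2"], model="claude-3-sonnet-20240229")
print(len(body["messages"][0]["content"]))
```

Putting the schema in the prompt asks for the structure but does not guarantee it. The reply still has to be validated before anything downstream trusts it.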

Claude looks at the image. It sees the table spanning two pages. It reads the handwritten 'discount applied' note. It maps everything into the JSON structure.

n8n catches that JSON. It parses the payload and loops through the line items to POST them directly to the Xero API. No human touches the file.
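The loop over line items might look like this in Python. Treat it as a sketch: the Xero field names (`Type`, `Contact`, `LineItems`, `UnitAmount`, `AccountCode`) are my recollection of Xero's Invoices endpoint and should be checked against the current API documentation. `UnitAmount` is an extra field assumed on the extraction side, the sample values are invented, and the mapping from a tax rate to a Xero tax type is organisation-specific, so it is left out.

```python
import json


def to_xero_bill(extracted: dict, contact_name: str, account_code: str) -> dict:
    """Map an extracted invoice onto a Xero-style bill payload (not sent here)."""
    line_items = [
        {
            "Description": item["Description"],
            "Quantity": item["Quantity"],
            # UnitAmount is NOT in the article's field list; assumed here.
            "UnitAmount": item["UnitAmount"],
            "AccountCode": account_code,
        }
        for item in extracted["LineItems"]
    ]
    return {
        "Type": "ACCPAY",  # a supplier bill (accounts payable)
        "Contact": {"Name": contact_name},
        "InvoiceNumber": extracted["InvoiceNumber"],
        "Date": extracted["Date"],
        "LineItems": line_items,
    }


# Invented sample data in the shape the extraction step is asked to return.
extracted = {
    "InvoiceNumber": "4092",
    "Date": "2024-03-01",
    "TotalAmount": 120.0,
    "LineItems": [
        {"Description": "Freight charges", "Quantity": 2, "UnitAmount": 50.0, "TaxRate": 20},
    ],
}

bill = to_xero_bill(extracted, contact_name="Example Logistics Ltd", account_code="310")
print(json.dumps(bill, indent=2))
```

Direct indexing (`item["UnitAmount"]`) is deliberate. A missing field raises an error instead of posting a half-empty bill.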

Pay attention to this part. The magic happens because you are not asking Claude to write text. You force it to populate a data structure, and you use a tool like Make or n8n to enforce that structure with a validation step before anything reaches Xero. If Claude returns a string where Xero expects an integer, that step catches it, fails gracefully, and alerts the ops manager.
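A type check of that kind can be very small. The Python sketch below uses only the standard library. The expected types and the `validate_reply` helper are assumptions for illustration, not a built-in n8n or Make feature.

```python
import json

EXPECTED_TOP = {"InvoiceNumber": str, "Date": str, "TotalAmount": (int, float)}
EXPECTED_LINE = {"Description": str, "Quantity": (int, float), "TaxRate": (int, float)}


def _check_fields(obj: dict, expected: dict, where: str) -> list[str]:
    """Compare each expected field's type; missing fields show up as NoneType."""
    problems = []
    for name, types in expected.items():
        value = obj.get(name)
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, types):
            problems.append(f"{where}.{name}: got {type(value).__name__}")
    return problems


def validate_reply(raw: str) -> list[str]:
    """Return a list of problems; an empty list means safe to pass on."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as err:
        return [f"not valid JSON: {err.msg}"]
    if not isinstance(data, dict):
        return ["top level is not an object"]
    problems = _check_fields(data, EXPECTED_TOP, "invoice")
    lines = data.get("LineItems")
    if not isinstance(lines, list) or not lines:
        return problems + ["invoice.LineItems: missing or empty"]
    for i, line in enumerate(lines):
        if not isinstance(line, dict):
            problems.append(f"LineItems[{i}]: not an object")
            continue
        problems += _check_fields(line, EXPECTED_LINE, f"LineItems[{i}]")
    return problems


# Quantity arrives as the string "2" instead of a number.
bad_reply = json.dumps({
    "InvoiceNumber": "4092", "Date": "2024-03-01", "TotalAmount": 120.0,
    "LineItems": [{"Description": "Freight", "Quantity": "2", "TaxRate": 20}],
})
print(validate_reply(bad_reply))
```

Any non-empty result is the signal to stop the flow and alert someone. Nothing is coerced or quietly fixed.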

This pipeline handles multi-page documents well. Claude 3 accepts multiple page images in a single API call (check the current per-request image limit in Anthropic's documentation). It maintains the context across pages. When an invoice table cuts off on page one and resumes on page two, the model understands it is the same table. Legacy OCR typically fails at this exact hurdle.

You also build in a safety net. The API does not return a confidence score, so you ask Claude to flag any field it is unsure of as part of the JSON. If a field is flagged, or if the total amount doesn't match the sum of the line items, n8n routes the JSON to a Slack channel for human approval. The human clicks a button in Slack, and the flow continues. You handle exceptions, not data entry.
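The totals check is plain arithmetic and fits in a few lines. This Python sketch assumes each extracted line item carries a `LineAmount` (a field not in the list earlier in the article) and allows a one-penny rounding tolerance. Both are illustrative choices, as are the route names.

```python
from decimal import Decimal

TOLERANCE = Decimal("0.01")  # assumed rounding allowance of one penny


def totals_agree(invoice: dict) -> bool:
    """True when the stated total matches the summed line amounts."""
    line_sum = sum(
        (Decimal(str(item["LineAmount"])) for item in invoice["LineItems"]),
        Decimal("0"),
    )
    stated = Decimal(str(invoice["TotalAmount"]))
    return abs(stated - line_sum) <= TOLERANCE


def route(invoice: dict) -> str:
    """Decide where the payload goes next (route names are hypothetical)."""
    return "post_to_xero" if totals_agree(invoice) else "slack_review"


# Invented sample payloads: one consistent, one with a 5.00 discrepancy.
clean = {"TotalAmount": 120.00, "LineItems": [{"LineAmount": 100.00}, {"LineAmount": 20.00}]}
suspect = {"TotalAmount": 125.00, "LineItems": [{"LineAmount": 100.00}, {"LineAmount": 20.00}]}

print(route(clean))
print(route(suspect))
```

`Decimal` is used instead of floats so that penny-level comparisons are not thrown off by binary rounding.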

A system like this takes about two to three weeks to build and test. You should expect to spend between £6,000 and £12,000 depending on the complexity of your existing integrations. The running cost is just API calls. A few pence per document. It eliminates the routine data entry behind the unstructured data tax and leaves only the exceptions for a human.

Where vision models break down

Vision-based extraction breaks down when you feed it severely degraded scans or rely on it for complex accounting logic that requires external context. This is not a magic wand. You need to know the limits before you rip out your existing systems.

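If your invoices come in as low-resolution, black-and-white TIFF files from a 15-year-old warehouse scanner, you need a pre-processing pass first. That means format conversion at minimum, since the Claude API takes JPEG, PNG, GIF and WebP images rather than TIFF, and possibly conventional OCR as a cross-check. Even then, the error rate jumps from 1% to ~12%. Claude 3 is excellent, but it can't read pixels that aren't there. If the human eye can't read the blurry text, the AI will hallucinate a guess.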

It also fails if the document lacks explicit data. Suppose your supplier uses internal part numbers. Your finance team usually cross-references those numbers against a master spreadsheet to find the correct Xero nominal code. Claude can't do that from the image alone.

You have to build that lookup step into your n8n workflow. Don't ask the LLM to guess nominal codes. Ask it to extract the part number, then use a database node in n8n to query Airtable or Supabase for the correct code.
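The lookup itself should be boring. The Python sketch below uses an in-memory SQLite table as a stand-in for Airtable or Supabase. The table name, part numbers and nominal codes are all invented.

```python
import sqlite3
from typing import Optional

# In-memory stand-in for the master spreadsheet or database.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE part_codes (part_number TEXT PRIMARY KEY, nominal_code TEXT NOT NULL)"
)
conn.executemany(
    "INSERT INTO part_codes VALUES (?, ?)",
    [("FRT-001", "310"), ("PKG-204", "325")],
)


def nominal_code_for(part_number: str) -> Optional[str]:
    """Deterministic lookup: return the mapped code, or None if unmapped."""
    row = conn.execute(
        "SELECT nominal_code FROM part_codes WHERE part_number = ?",
        (part_number,),
    ).fetchone()
    return row[0] if row else None


# The model only extracts the part number; the database supplies the code.
print(nominal_code_for("FRT-001"))
print(nominal_code_for("UNKNOWN-9"))  # unmapped parts go to a human, not a guess
```

An unmapped part number returns nothing, which is the cue to send the line to review. It never falls back to asking the model.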

Keep the AI doing visual extraction. Keep your deterministic logic in your database. Mixing the two is how you build fragile systems.

Three questions to sit with

It is easy to look at the current crop of AI tools and assume they are just toys for writing marketing copy. But the underlying models have crossed a threshold. They can now do the heavy lifting of back-office operations. You just have to wire them up correctly.

Before you renew your legacy OCR contract, or hire another junior assistant to type data into Xero, take a hard look at your current processes.

- Are your highly paid finance staff spending more than five hours a week manually translating data from complex PDFs into your accounting software, simply because your current tools can't handle custom table layouts?

- When a key supplier changes their invoice format, does your automation silently break, push null values into your database, and require a manual reconciliation effort at month-end to fix the mess?

- Do you have a clear, enforced boundary in your tech stack between where probabilistic AI data extraction ends and where your deterministic, rule-based business logic begins?
