Eliminating the Dead-Data Tax in B2B Sales Automation

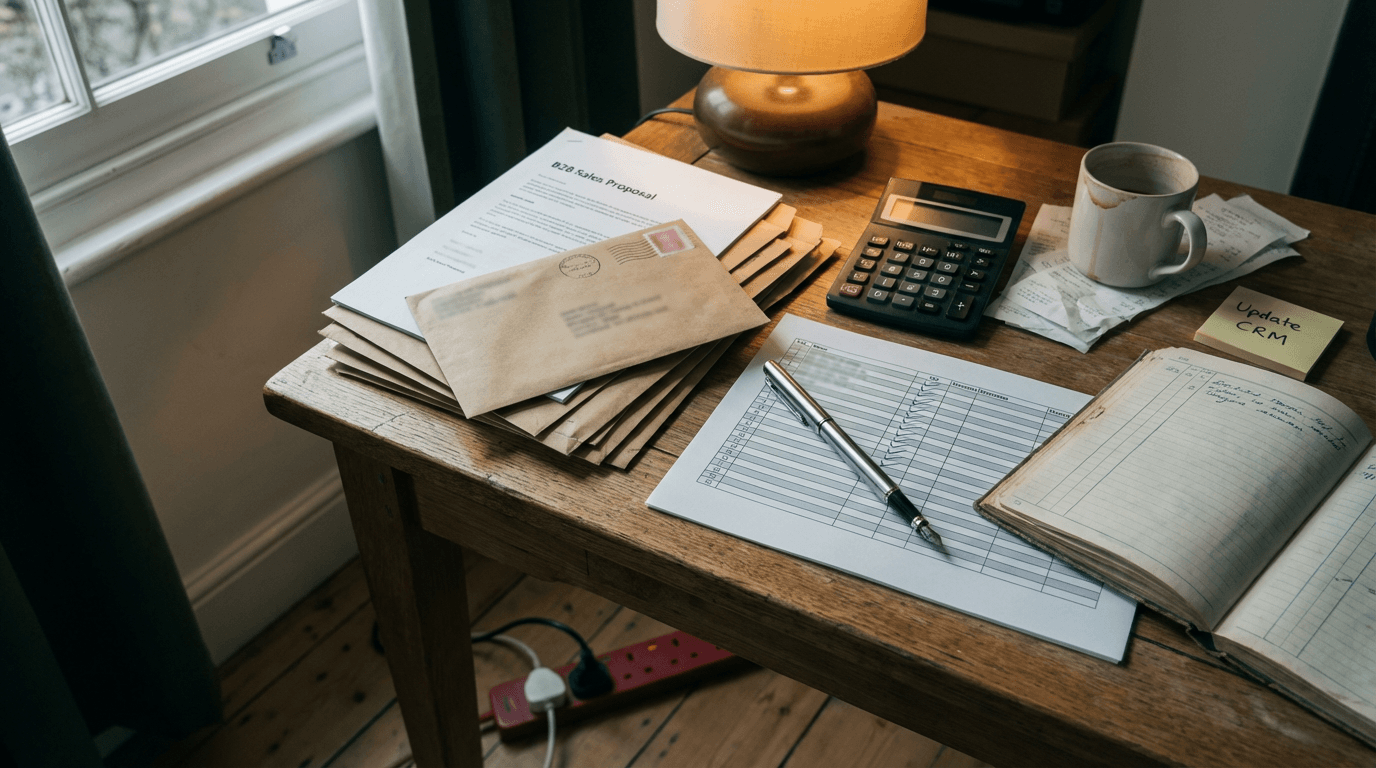

Your senior sales rep is currently staring at a thread of 14 emails. She's downloading a PDF specification sheet, opening HubSpot in another tab, and manually typing line items from one window into the other.

She earns £45k a year. You're paying her to be a human API. It's a terrible use of her brain. You know this, she knows this, and you've probably tried to automate it twice already.

The pattern I keep seeing is that SMEs throw a patchwork of basic AI subscriptions at their sales admin and expect magic. The magic never arrives.

Instead, you get duplicated CRM records, hallucinated quotes sent to clients, and a sales team that quietly returns to manual data entry because nobody trusts the bot. It's a mess. Nobody can say why it failed, so the technology takes the blame.

The dead-data tax

The dead-data tax is the hidden cost of paying your sales team to read unstructured messages and copy the details into structured CRM fields. It happens every time a buyer replies to a quote with a messy, multi-part request.

B2B sales don't happen in neat web forms. Buyers send weird PDFs. They reply to old email chains with new product requirements. They use vague language.

Because the input is chaotic, standard software can't parse it. So you put a human in the middle. The human reads the chaos, translates it into a rigid format, and pastes it into Pipedrive or HubSpot.

This tax scales linearly with your growth. If you double your inbound volume, you double the hours spent on admin. It caps your revenue per employee.

You end up hiring junior analysts just to read inboxes and update deal stages. Your best closers spend half their week doing the exact same data entry as your newest hires.

Most founders ignore this tax because they think it's just the cost of doing business. They assume that if a task requires reading comprehension, a human must do it. That assumption was true three years ago. It's no longer true today.

But trying to eliminate this tax with the wrong tools will just create a different, much louder problem. The friction is structural, and you can't fix it by just buying another software license. You have to change how the data moves.

Why the obvious fix fails

The obvious fix is bolting ChatGPT Plus onto Zapier or switching on Google Workspace Studio agents. It fails for two reasons: basic automation tools can't hold nested conditional logic without breaking, and Google's agent builder hides API errors instead of recovering from them.

Look at Zapier first. Zapier relies on strict key-value pairs. When a buyer emails, "We need 50 of the blue widgets, but only if they ship by the 12th, otherwise just send 30 of the red", a basic Zapier parser chokes.

It can't hold the conditional logic. Zapier's Find steps can't nest deeply enough to cross-reference the order against your inventory, so the parser maps a null value into your CRM. You only notice at the end of the month, when the pipeline data is entirely wrong.
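To make the failure concrete, here is a minimal Python sketch of what the buyer's sentence actually contains: two alternative line items and a condition that picks between them. The `LineItem` and `ConditionalOrder` types, the product names and the March 2026 deadline are all hypothetical (the email only says "the 12th"). A flat key-value mapping has one slot for product and one for quantity, so there is nowhere to put the second branch.

```python
from dataclasses import dataclass
from datetime import date


# Hypothetical structures for illustration; not a Zapier or CRM format.
@dataclass
class LineItem:
    product: str
    quantity: int


@dataclass
class ConditionalOrder:
    preferred: LineItem  # wanted only if it ships by the deadline
    ship_by: date        # the buyer's deadline
    fallback: LineItem   # what to send if the deadline can't be met

    def resolve(self, earliest_ship_date: date) -> LineItem:
        """Pick the line item once the real ship date is known."""
        if earliest_ship_date <= self.ship_by:
            return self.preferred
        return self.fallback


# "50 of the blue widgets, but only if they ship by the 12th, otherwise 30 of the red"
order = ConditionalOrder(
    preferred=LineItem("blue widget", 50),
    ship_by=date(2026, 3, 12),  # assumed month and year
    fallback=LineItem("red widget", 30),
)

print(order.resolve(date(2026, 3, 10)))  # LineItem(product='blue widget', quantity=50)
print(order.resolve(date(2026, 3, 15)))  # LineItem(product='red widget', quantity=30)
```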

Then there's Google Workspace Studio. In December 2025, Google rolled out massive updates, making Gemini 3 Flash the default engine across their products (https://blog.google/technology/ai/google-ai-updates-december-2025/). The native agent builder inside Workspace looks incredible on a demo screen.

It's fast and deeply integrated into Gmail and Google Docs. But here's the exact mechanism where it falls over. Workspace Studio agents are built for the Google walled garden.

They read a Gmail thread perfectly. But when you need that agent to send a PATCH request to a custom field in Pipedrive, the agent builder abstracts away the API error handling.

If Pipedrive rejects the payload because a date format is wrong, the Google agent doesn't know how to fix it. It just stops. It silently fails.

Your sales rep thinks the CRM is updated, the proposal never gets drafted, and the client goes cold. You can't build a reliable sales machine on a tool that gives up the second an API throws a 400 Bad Request.

Across my recent system audits, this silent failure mode is the number one reason sales teams abandon AI tools.

The approach that actually works

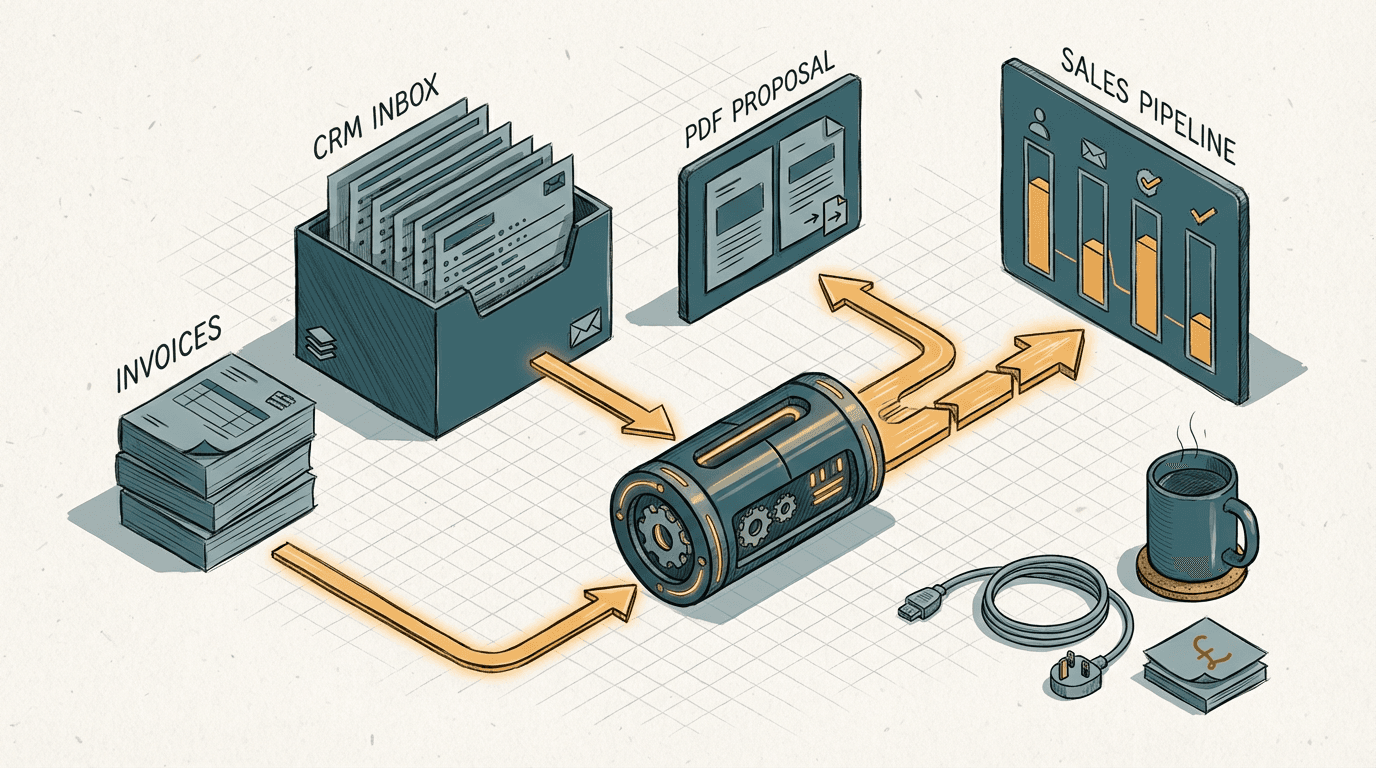

A self-correcting n8n workflow using GPT-5.2 to cross-reference CRM data and autonomously resolve API formatting errors without human intervention.

The approach that actually works uses GPT-5.2's native tool-calling via n8n to parse the email, validate the CRM schema, and draft the proposal in one self-correcting loop.

OpenAI launched GPT-5.2 specifically to handle long-running, agentic workflows (https://openai.com/index/introducing-gpt-5-2). The difference between this model and its predecessors isn't just better writing. It's the ability to execute complex tool calls and recover from its own mistakes.

Here's what a real system looks like. A buyer emails your main sales inbox: "Can we get the usual order, but add 5 extra licenses for the new London office?"

An n8n webhook catches that email. It triggers an API call to GPT-5.2, passing along the email body and a strict JSON schema for your CRM.
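A minimal sketch of the "strict JSON schema" half of that call, assuming Python and the function-tool shape used by OpenAI's Chat Completions API (`"type": "function"` with a JSON Schema under `parameters`, plus `"strict": True`). The tool names `get_usual_order` and `update_deal` and their fields are hypothetical, and the model call itself is omitted; these definitions would be passed as the `tools` argument.

```python
# Hypothetical tool definitions in the shape OpenAI's function calling uses.
# Tool names and fields are illustrative, not a HubSpot or n8n API.
TOOLS = [
    {
        "type": "function",
        "function": {
            "name": "get_usual_order",
            "description": "Look up a client's standard recurring order in the CRM.",
            "strict": True,
            "parameters": {
                "type": "object",
                "properties": {"company_id": {"type": "string"}},
                "required": ["company_id"],
                "additionalProperties": False,  # strict mode: no extra keys
            },
        },
    },
    {
        "type": "function",
        "function": {
            "name": "update_deal",
            "description": "Update the license quantity and office location on a deal.",
            "strict": True,
            "parameters": {
                "type": "object",
                "properties": {
                    "deal_id": {"type": "string"},
                    "license_quantity": {"type": "integer"},
                    "office_location_id": {"type": "string"},
                },
                # strict mode: every property must be listed as required
                "required": ["deal_id", "license_quantity", "office_location_id"],
                "additionalProperties": False,
            },
        },
    },
]


def build_messages(email_body: str) -> list[dict]:
    """Package the inbound email for the model; the system prompt is a placeholder."""
    return [
        {"role": "system", "content": "Extract the order and update the CRM using the tools provided."},
        {"role": "user", "content": email_body},
    ]
```

The schema is what stops the model from inventing free-form fields: anything it sends to `update_deal` has to fit those three keys.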

GPT-5.2 doesn't just guess the answer. It uses a tool call to query HubSpot for the client's "usual order". It retrieves the JSON response, sees that the usual order is 20 licenses, and calculates the new total as 25.
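A minimal sketch of that lookup-then-add step, assuming a hypothetical in-memory stand-in for the HubSpot query (`FAKE_CRM`, `get_usual_order` and the `acme-ltd` ID are illustrative, not HubSpot's API).

```python
# Hypothetical stand-in for the CRM lookup the model triggers via a tool call.
FAKE_CRM = {"acme-ltd": {"usual_order": {"product": "license", "quantity": 20}}}


def get_usual_order(company_id: str) -> dict:
    """Return the client's recurring order as the tool result."""
    return FAKE_CRM[company_id]["usual_order"]


def apply_request(company_id: str, extra: int) -> int:
    """'The usual order, plus N extra' -> new total quantity."""
    usual = get_usual_order(company_id)
    return usual["quantity"] + extra


print(apply_request("acme-ltd", 5))  # 25
```

This sketch does the addition in plain code rather than inside the model. That is one possible design choice: the total then can't drift even if the model paraphrases the order.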

Then, GPT-5.2 attempts to update the HubSpot deal. Pay attention to this part.

If HubSpot rejects the update because the "Office Location" field requires a specific dropdown ID instead of the word "London", GPT-5.2 reads the error message.

It queries the CRM for the valid dropdown IDs, finds the one for London, rewrites its own JSON payload, and submits it again. It loops until the system accepts the data.
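That retry loop can be sketched in a few lines of Python. Everything here is a hypothetical stand-in: `fake_update_deal` imitates a CRM rejecting free text in a dropdown field, the `opt_102`-style IDs are invented, and `fix_payload` is a rule-based placeholder for the step where GPT-5.2 reads the error and queries the valid options. The cap of three attempts matches the escalation threshold the article uses.

```python
from dataclasses import dataclass

# Hypothetical dropdown option IDs; a real CRM would return these from a query.
VALID_OFFICE_IDS = {"London": "opt_102", "Leeds": "opt_103"}


@dataclass
class ApiResult:
    status: int
    message: str = ""


def fake_update_deal(payload: dict) -> ApiResult:
    """Reject free-text office names, like a dropdown field expecting an option ID."""
    if payload["office_location"] not in VALID_OFFICE_IDS.values():
        return ApiResult(400, f"Invalid option for office_location: {payload['office_location']!r}")
    return ApiResult(200)


def fix_payload(payload: dict, error: str) -> dict:
    """Placeholder for the model: read the error, look up the valid ID, rewrite."""
    fixed = dict(payload)
    if "office_location" in error:
        name = payload["office_location"]
        fixed["office_location"] = VALID_OFFICE_IDS.get(name, name)
    return fixed


def update_with_retries(payload: dict, max_attempts: int = 3) -> tuple[bool, dict, list[str]]:
    """Submit, read any error, rewrite and resubmit, up to max_attempts times."""
    errors: list[str] = []
    for attempt in range(1, max_attempts + 1):
        result = fake_update_deal(payload)
        if result.status == 200:
            return True, payload, errors
        errors.append(result.message)
        if attempt < max_attempts:
            payload = fix_payload(payload, result.message)
    return False, payload, errors  # unresolved: hand off to a human


ok, final, errs = update_with_retries({"deal_id": "d-1", "quantity": 25, "office_location": "London"})
print(ok, final["office_location"], len(errs))  # True opt_102 1
```

If the fixer can't find a valid ID (say, "Paris"), the loop gives up after three rejections and returns the errors instead of pretending the update worked.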

Once the CRM is updated, the same n8n workflow triggers a document generator. It pulls the approved pricing, drafts a PDF proposal, and drops it into the sales rep's drafts folder in Outlook.

The rep doesn't type anything. They just review the draft and click send.

Building this takes about 2 to 3 weeks of dedicated work. You should expect to spend between £6k and £12k depending on how messy your existing HubSpot or Pipedrive architecture is.

The cost is entirely in the custom API mapping and the error-handling logic inside n8n.

You catch failures by piping every API error that GPT-5.2 can't resolve after three attempts directly into a dedicated Slack channel.

Your ops manager sees exactly which payload failed and why. The system tells you when it's confused, instead of quietly corrupting your database.
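In n8n you would most likely use the built-in Slack node for this, but a plain-Python sketch shows what the message needs to carry: the deal, the last payload and every error. It assumes Slack's incoming-webhook format (a JSON `POST` with a `text` field); the webhook URL is a placeholder and `build_escalation` is a hypothetical helper. The request is built but not sent.

```python
import json
import urllib.request

# Placeholder: Slack issues a unique incoming-webhook URL per channel.
SLACK_WEBHOOK_URL = "https://hooks.slack.com/services/XXX/YYY/ZZZ"


def build_escalation(deal_id: str, payload: dict, errors: list[str]) -> urllib.request.Request:
    """Build (but don't send) a Slack incoming-webhook request for an unresolved failure."""
    text = (
        f":warning: CRM update failed after {len(errors)} attempts for deal {deal_id}\n"
        f"Last payload: {json.dumps(payload)}\n"
        "Errors:\n" + "\n".join(f"- {e}" for e in errors)
    )
    body = json.dumps({"text": text}).encode("utf-8")
    return urllib.request.Request(
        SLACK_WEBHOOK_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_escalation(
    "d-1",
    {"office_location": "Paris"},
    ["Invalid option for office_location: 'Paris'"] * 3,
)
# urllib.request.urlopen(req) would post it to the channel.
```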

Where this breaks down

This breaks down when your inbound sales data relies on scanned legacy documents or handwritten purchase orders.

Before you start building self-correcting API loops, you need to audit how your buyers actually send you data.

If your clients are construction firms who send photographed, coffee-stained purchase orders as TIFF files, GPT-5.2's vision capabilities will struggle to extract the line items perfectly.

Once optical character recognition enters the flow, the error rate jumps from a manageable 1% to something closer to 12%. Worse, the self-correcting loop can't catch a misread quantity, because a wrong number in a valid format gives the CRM nothing to reject.

If the model reads a smudged "50" as an "80", it will confidently query your CRM, update the deal to 80 units, and draft a flawless proposal for the wrong amount.
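A tiny sketch of why the loop stays silent here, assuming a hypothetical validator of the kind a CRM applies to a quantity field: it checks format, not truth, so the misread value passes just as easily as the real one.

```python
# Hypothetical validator: checks that a quantity is a positive integer.
def crm_accepts(quantity: object) -> bool:
    return isinstance(quantity, int) and quantity > 0


true_value = 50  # what the buyer wrote
ocr_value = 80   # what the smudged scan was read as

# Both pass, so no error is returned and nothing triggers a retry.
print(crm_accepts(true_value), crm_accepts(ocr_value))  # True True
```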

You also can't run this approach if your CRM lacks a clean, well-documented API. If you run your sales pipeline on niche, industry-specific desktop software from 2014, n8n can't talk to it.

You need accessible endpoints. If you lack those, you have to fix your foundational software stack before you even think about AI. Don't try to build smart agents on top of dead software. It always ends in tears.

The question isn't whether AI replaces your sales team. It's whether you know exactly how many hours your best closers waste acting as human routers for messy data.

The dead-data tax is entirely optional now, provided you stop buying generic tools and start building resilient systems. A self-correcting loop in n8n powered by GPT-5.2 will handle the chaos of B2B communication, but only if you design it to expect failure and recover from it.

Stop accepting silent errors in your pipeline. Stop paying £45k salaries for copy-pasting. Build the error handling, map your API endpoints properly, and give your team their brains back. The companies that ship this architecture first will simply outpace everyone else. End of.
