Breaking the API Leakage Ceiling with Local Mac AI Clusters

You are sitting with your ops manager. She has a folder of 400 unredacted supplier contracts, complete with pricing tiers and penalty clauses. You want to extract the renewal dates and SLA terms into a Notion database.

You both know ChatGPT could do it in ten minutes.

You also know that pasting those PDFs into a web browser sends your exact margin structure to a server in California. So she does it by hand. It takes three weeks.

This is the reality of AI in UK SMEs right now. You are either fast and exposed, or secure and slow. You want the reasoning power of frontier models, but your compliance requirements demand the security of an offline spreadsheet. It feels like an impossible trade-off.

The API leakage ceiling

The API leakage ceiling is the hard limit on what you can automate when your most valuable business data is too sensitive to send to third-party cloud models. You automate the easy stuff first. You build Zapier flows to draft marketing copy. You use Claude to summarise generic sales calls. You let AI touch the edges of your business.

But you stop there.

Once you hit core operations, the automation stops. You can't send patient records to OpenAI. You can't pipe unredacted M&A term sheets through a random SaaS wrapper. You can't feed raw payroll data into a public API. The risk is too high. You are legally bound by GDPR, or you have strict client confidentiality agreements, or you simply refuse to hand over your proprietary data to train someone else's model.

Why does this persist? Because most founders assume local AI is a toy. They think running a private model means either settling for a stupid, hallucinating bot on a laptop, or buying a £400,000 Nvidia server rack that requires a dedicated cooling system and three Linux engineers to maintain. They look at the enterprise AI market and see massive data centres. They assume that scale is mandatory.

So you accept the ceiling. You pay humans to do the most tedious, repetitive work simply because humans can sign an NDA. You trap your most expensive talent in data entry roles because the data is too valuable to leave your local network. Your business scales linearly with headcount, while your competitors who play fast and loose with data privacy pull ahead on margin.

Why the obvious fix fails

The obvious fixes fail for two different reasons: "secure" SaaS tools still send your data off-site, and single-machine local setups lack the memory to run capable models. When founders try to break through the API leakage ceiling, they make one of these two mistakes.

First, they buy an enterprise AI SaaS tool. You pay £50 a seat for a dashboard that promises bank-grade security. But look at the network requests. Under the hood, the tool just wraps your prompt in a JSON payload and fires it off to OpenAI or Anthropic. Yes, the vendors claim they skip training on API data. But your raw data still leaves your network. It crosses the public internet. It sits on a third-party server. If you operate in healthcare, finance, or legal services, that is a failed compliance audit waiting to happen. You are just outsourcing your data breach risk.

Second, they try running a local model on a single machine. You download Ollama on an M2 MacBook Pro. You load a tiny 8-billion parameter model. You ask it to parse a 50-page legal PDF.

In my experience, founders burn 40 to 50 hours trying to force an 8-billion parameter model to do the job of a 120-billion parameter model. It spits out absolute gibberish. It hallucinates clauses that don't exist. It forgets the first half of the document by the time it reads the second half.

Here is the mechanical reality most people miss. Large language models aren't bottlenecked by raw compute. They are bottlenecked by memory: how much of it you have, and how fast you can read it. To get GPT-4 level reasoning, you need models with over 100 billion parameters. Even quantised, a model that size needs about 80GB of RAM just to load into memory, and massive bandwidth to generate text at reading speed.
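As a rough sanity check on that memory figure, the weights alone take approximately parameters × bits per weight ÷ 8 bytes. The sketch below assumes a 120-billion parameter model and three common precision levels; both are illustrative choices. It ignores the context cache and runtime overhead, so a real load needs more than these numbers.

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Illustrative assumption: a 120B model at 16-bit, 8-bit and 4-bit precision.
for bits in (16, 8, 4):
    print(f"120B parameters at {bits}-bit: ~{weights_gb(120, bits):.0f} GB of weights")
```

At 4-bit the weights come to roughly 60GB before any overhead, which is why even a heavily quantised model of this size will not fit on a typical laptop.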

A single high-end machine hits a hardware wall. Zapier can't help you here. A standard Mac Studio has incredible bandwidth, but it maxes out at 192GB of unified memory. That isn't enough headroom for the biggest models and a massive context window. The model either refuses to load, or it spills over into swap memory and slows down to one word per minute. The system breaks because it starves for memory.

The approach that actually works

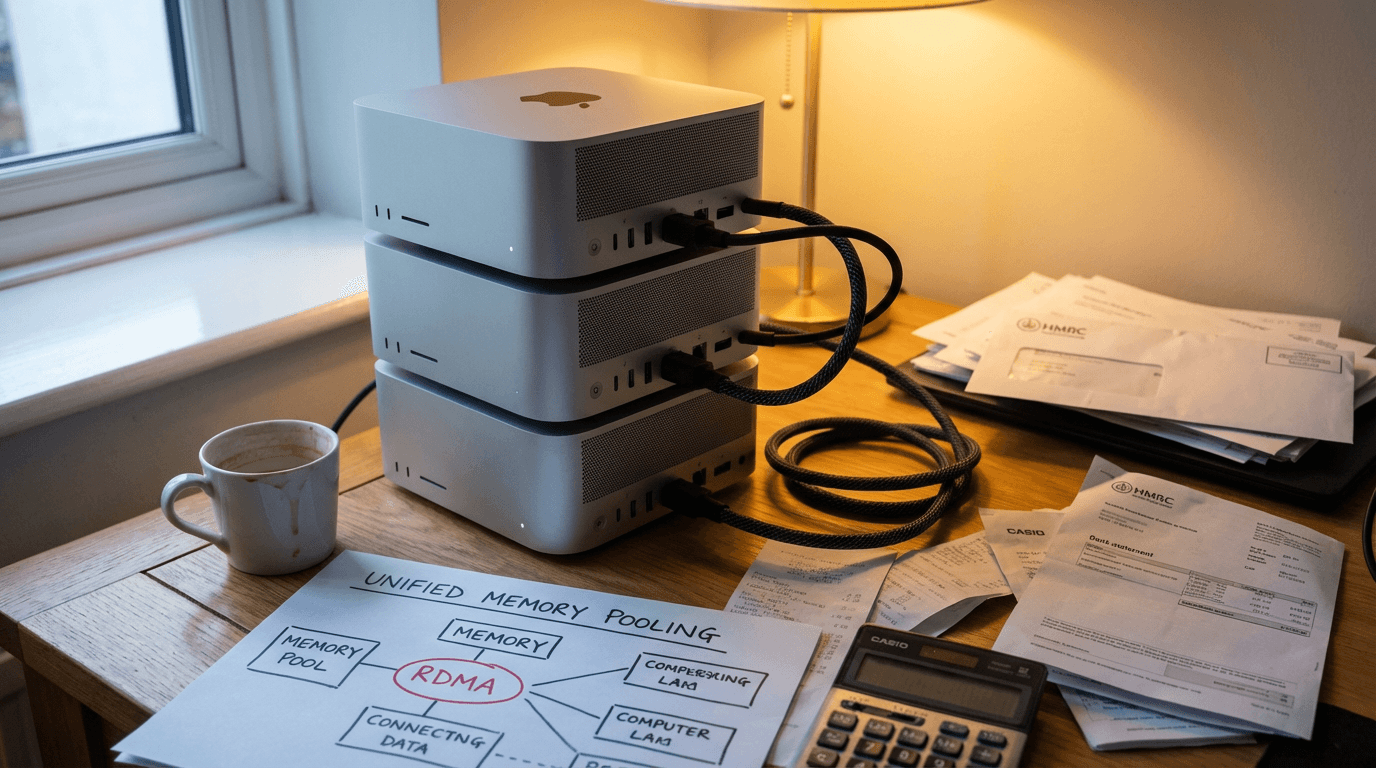

Hardware configuration showing four Mac Studios connected via Thunderbolt 5 cables to pool memory for running massive 100B+ parameter models locally.

You build a private AI server by clustering Mac Studios using RDMA over Thunderbolt 5 to pool unified memory.

In December 2025, Apple released macOS Tahoe 26.2. It quietly introduced Remote Direct Memory Access over Thunderbolt 5. This changed the physics of local AI.

Standard networking uses TCP/IP. The CPU has to package, send, receive, and unpack every byte. That adds latency. RDMA allows one Mac to read the memory of another Mac directly, bypassing the CPU entirely. Latency drops to single-digit microseconds.

Here is the exact build. You buy four M3 Ultra Mac Studios. You connect them in a ring topology using standard 2-metre Thunderbolt 5 cables. You install Apple's MLX framework and the open-source EXO 1.0 orchestration layer.

You now have a unified AI supercomputer. It has 768GB of unified memory and 80 Gbps of bandwidth between nodes. It behaves exactly like a massive enterprise server, but it sits quietly on a desk.

Here is a real operational flow. Your accounts alias receives an email with a complex, 10-page supplier invoice. An n8n webhook catches the email. n8n triggers a local API call to your Mac cluster, which is running a massive 120-billion parameter model like Devstral 123B.

The EXO orchestration layer instantly shares the workload across all four machines. The model reads the PDF, extracts the line items, and matches them against your Xero purchase orders. It formats a strict JSON response. n8n receives the JSON and PATCHes the Xero invoice line items.
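To make the local API call concrete, here is a minimal Python sketch of the request that n8n's HTTP Request node would send. It assumes the cluster exposes an OpenAI-style chat completions endpoint. The URL, port, model name, prompt, and JSON shape are all placeholders rather than details from this build.

```python
import json
import urllib.request

# Assumptions (hypothetical, adjust to your own cluster): endpoint URL, port, model name.
CLUSTER_URL = "http://localhost:52415/v1/chat/completions"
MODEL_NAME = "local-120b-model"

SYSTEM_PROMPT = (
    "Extract the invoice line items. Reply with JSON only, shaped as "
    '{"line_items": [{"description": "...", "quantity": 0, "unit_price": 0}]}'
)


def build_payload(invoice_text: str) -> dict:
    """Build the chat request body for one invoice."""
    return {
        "model": MODEL_NAME,
        "temperature": 0,  # keep extraction output as repeatable as possible
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": invoice_text},
        ],
    }


def parse_reply(response_body: str) -> dict:
    """Pull the model's message out of the response and decode it as JSON."""
    data = json.loads(response_body)
    content = data["choices"][0]["message"]["content"]
    return json.loads(content)


def extract_invoice(invoice_text: str, timeout_s: float = 300.0) -> dict:
    """POST the invoice text to the local cluster and return the parsed line items."""
    request = urllib.request.Request(
        CLUSTER_URL,
        data=json.dumps(build_payload(invoice_text)).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout_s) as response:
        return parse_reply(response.read().decode("utf-8"))
```

Nothing in this request touches a public hostname, which is the whole point: the only network hop is from the automation server to the cluster.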

Your data never left the building. You could unplug your office router from the internet, and the system would still process invoices perfectly.

The cost makes sense. You spend roughly £40,000 on the hardware. Under heavy load, the entire cluster draws about 500W of power. That is less than a commercial coffee machine. You amortise that £40,000 over three years. You are running sovereign, GPT-4 class AI locally for less than the cost of a junior accounts assistant.
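The arithmetic behind that claim can be sketched in a few lines. The hardware price, three-year period, and 500W draw come from the figures above. The electricity price and the round-the-clock usage are assumptions, so substitute your own tariff and duty cycle.

```python
HARDWARE_GBP = 40_000   # from the build above
YEARS = 3               # amortisation period from the text
POWER_WATTS = 500       # cluster draw under heavy load, from the text

# Assumptions, not from the article: unit price and 24/7 heavy load.
PRICE_PER_KWH_GBP = 0.25
HOURS_PER_YEAR = 24 * 365


def annual_cost_gbp(hardware: float, years: int, watts: float,
                    price_per_kwh: float, hours: float = HOURS_PER_YEAR) -> float:
    """Yearly cost: straight-line hardware amortisation plus electricity."""
    amortised = hardware / years
    energy_kwh = watts / 1000 * hours
    return amortised + energy_kwh * price_per_kwh


cost = annual_cost_gbp(HARDWARE_GBP, YEARS, POWER_WATTS, PRICE_PER_KWH_GBP)
print(f"Approximate annual cost: £{cost:,.0f}")
```

Under these assumptions the total lands at about £14,400 a year, and the hardware accounts for most of it. Electricity is a rounding error by comparison.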

The main failure mode here is orchestration timeout. If your n8n instance sits on a slow local server, the webhook might drop the connection before the cluster finishes generating the JSON. You catch this by setting explicit timeout extensions in your n8n HTTP Request nodes and logging every failed run to a dedicated Slack channel. You also need to pin your model versions locally, so an accidental update doesn't break your JSON schemas.
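The same guardrails can be sketched outside n8n. This Python example retries a failed or timed-out call, checks the returned JSON against a required shape, and reports every failure through a logging callback. The schema keys, the retry count, and the `log_failure` hook (a stand-in for posting to your Slack channel) are all hypothetical.

```python
# Hypothetical schema: the keys each extracted line item must contain.
REQUIRED_ITEM_KEYS = {"description", "quantity", "unit_price"}


def schema_errors(result) -> list[str]:
    """Return a list of problems with the model's JSON; empty means it is usable."""
    if not isinstance(result, dict) or not isinstance(result.get("line_items"), list):
        return ["line_items missing or not a list"]
    errors = []
    for i, item in enumerate(result["line_items"]):
        if not isinstance(item, dict):
            errors.append(f"item {i}: not an object")
            continue
        missing = REQUIRED_ITEM_KEYS - item.keys()
        if missing:
            errors.append(f"item {i}: missing {sorted(missing)}")
    return errors


def run_with_retries(call, attempts: int = 3, log_failure=print):
    """Run call() up to `attempts` times; log each failure; return None if all fail."""
    for attempt in range(1, attempts + 1):
        try:
            result = call()
        except OSError as exc:  # covers timeouts and connection errors
            log_failure(f"attempt {attempt}: request failed: {exc}")
            continue
        errors = schema_errors(result)
        if not errors:
            return result
        log_failure(f"attempt {attempt}: bad schema: {errors}")
    return None
```

Returning `None` instead of raising lets the calling workflow route the invoice to a human queue, so a bad run never writes half-valid data into Xero.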

Where this breaks down

This local cluster approach breaks down completely if your input data is unstructured or your internal network lacks basic hygiene.

Local LLMs are text prediction engines. They aren't magic. If your invoices come in as scanned TIFFs from a legacy accounting system, you need OCR first. If you feed a blurry scan directly into a local vision model, the error rate jumps from 1% to around 12%. You'll spend more time fixing the errors than you would have spent typing the data manually.

It also breaks down if you lack basic network hygiene. The Macs need a stable Ethernet connection for management access, entirely separate from the Thunderbolt mesh. If your office network drops packets constantly, your internal API calls will fail. The cluster will sit idle while n8n throws timeout errors.

Check your existing processes before buying hardware. If your ops manager can't currently map out the exact decision tree for approving a contract, an AI model can't do it either. A £40,000 Mac cluster will just automate the generation of mistakes at 25 tokens per second. You need strict, documented workflows before you introduce local compute. Don't buy the hardware hoping it will magically organise your messy back office.

The question isn't whether AI replaces your ops manager. It's whether you know which hours of her week actually go on reconciling Xero against Stripe, because that is the only part a bot can touch this year. If you keep relying on cloud APIs, you will forever be limited by what you are legally allowed to send to California. Building a local Mac cluster changes the physics of your business. You get the reasoning power of frontier models with the data security of a locked filing cabinet. It takes a few weeks to build and costs less than a single bad hire. Stop waiting for Microsoft to build a secure enough wrapper. Buy the hardware, plug in the cables, and run the models yourself.
