Surviving the Zero-Citation Visibility Collapse in AI-Driven Search

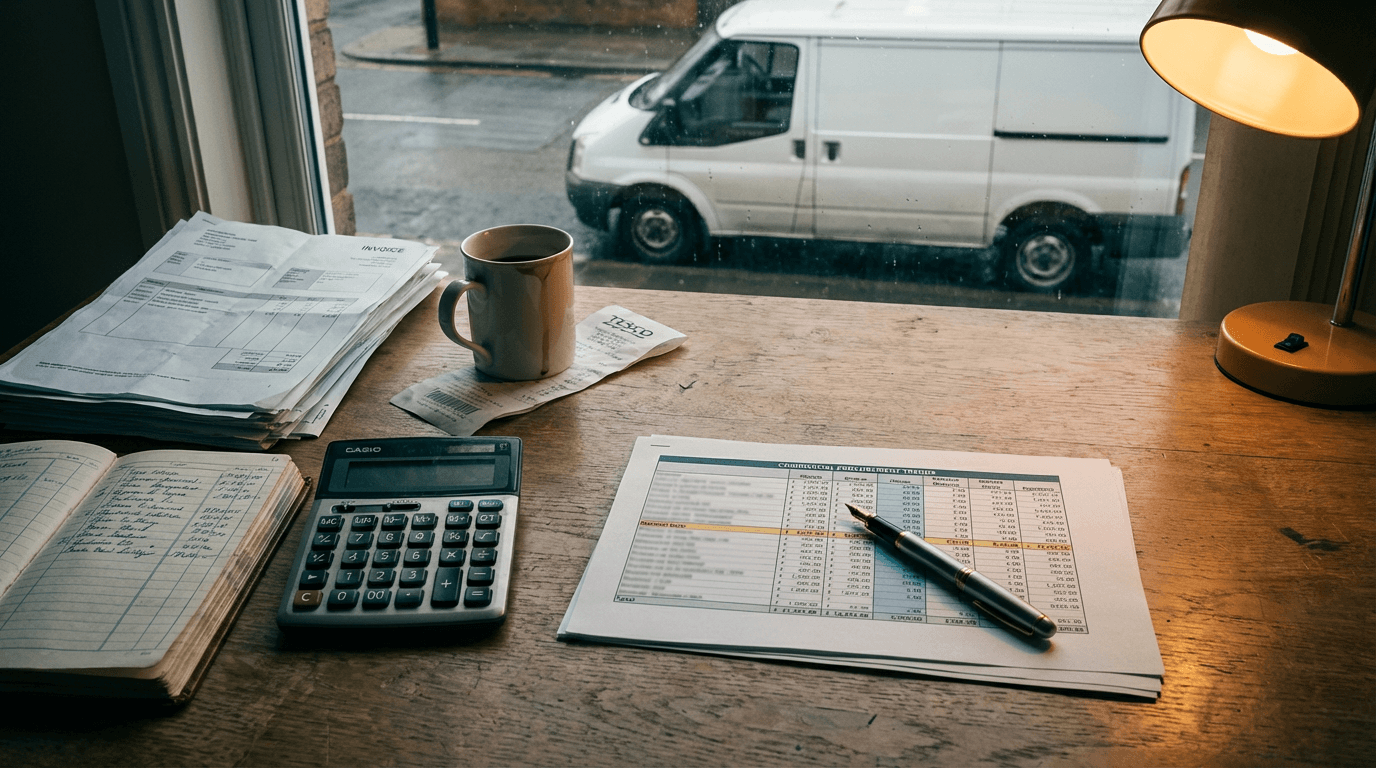

You open Google Analytics on a Tuesday morning. The organic traffic line from October 8th looks like a cliff edge. You haven't changed your content. Your site speed is fine. Your agency is sending you reports about algorithm volatility. But the phone has stopped ringing. The £4,000 a month you spend on SEO is suddenly buying you nothing.

Google rolled out AI Mode to 40+ countries in October 2025 [source](https://www.superprompt.com). The search engine stopped being a library of links and became an answer engine powered by Gemini 2.5. It synthesises answers directly on the page. If you are still playing the traditional keyword game, you are already dead. You need a new mechanism to survive.

The zero-citation visibility collapse

The zero-citation visibility collapse is the sudden loss of search traffic that happens when your business only exists on its own website, causing AI search engines to treat you as an unverified entity.

Before October 2025, you could write a 2,000-word blog post about commercial plumbing, stuff it with keywords, and rank. Google read your site and took your word for it. You controlled the narrative entirely on your own domain.

Now, Gemini 2.5 reads the user's query, understands the intent, and synthesises an answer from multiple sources. It does not trust your domain in isolation. It looks for consensus across the web.

If your plumbing firm claims to be the best, but you aren't mentioned on local forums, supplier directories, or industry news sites, the AI model drops you. You lack multi-source authority. The algorithm treats your self-published claims as low-confidence data.

This collapse hits established SMEs the hardest. You have a 15-year-old business, but your digital footprint is entirely self-published. You pay an agency to write blogs that nobody reads, just to feed the algorithm.

Your marketing manager is panicking. Your sales reps are complaining about lead quality. The CFO is questioning the marketing budget. They all think the website is broken. It isn't. The website is fine. The digital world around it is empty.

AI Mode ignores self-published claims that have no external validation. Most businesses cannot tell why their traffic died, but this is the mechanism. The days of ranking purely on your own domain authority are over. You are either cited by others, or you are invisible.

Why the obvious fix fails

The obvious fix is throwing more money at the same broken system. Your SEO agency tells you to optimise for AI. They sell you an AI content strategy and bump their retainer to £6k. They use ChatGPT subscriptions to pump out more FAQs, hoping to capture long-tail conversational queries.

That is the wrong move. Pumping out more content on your own domain actively hurts you now.

Here's what actually happens: Gemini 2.5 doesn't need your FAQ page. It already knows the answer to how often a boiler needs servicing. When you publish generic AI content, you dilute your site's information density. You become noise.

The failure mode is structural. AI Mode evaluates entities based on Experience, Expertise, Authoritativeness, and Trustworthiness across the entire web, and the models cross-reference every claim. A £25/month ChatGPT subscription cannot replace a £35k salary's worth of actual industry relationships.

If your site says you use the latest thermal imaging tech, but there is no external proof anywhere else, the model flags your content. No supplier case studies. No forum mentions. No PR. Just your own words. The model skips you.

In my experience reviewing post-October traffic drops, businesses spending £5,000 a month on traditional SEO are seeing zero return. They are feeding a machine that no longer exists.

They try Zapier flows to auto-publish WordPress posts. The webhook fires, the server parses the JSON, the post goes live. And traffic stays flat. Because the problem isn't volume. The problem is validation.

You cannot automate your way out of a trust deficit by talking louder in an empty room. AI search engines are looking for signals from the outside world pointing back at you. If your obvious fix is just writing more words on your own blog, you are accelerating your own irrelevance.

The approach that actually works

The approach that works is an automated data PR pipeline: Xero exports flow through Claude to generate citeable industry reports.

How do you build multi-source authority without a massive PR team? You turn your internal operations into original data that other sites want to cite. You stop writing opinions and start publishing facts.

Take a £5M commercial landscaping firm. Instead of writing a generic blog post about landscaping costs, you use your actual procurement data to generate industry insights.

The input is simple. You take a CSV export from Xero showing your monthly fertiliser and turf costs over the last 24 months.

Here is the operational stack. You drop that CSV into a designated Google Drive folder. A Make scenario watches that folder. It triggers a Claude 3.5 Sonnet API call with a strict JSON schema. The prompt instructs the model to calculate the quarter-over-quarter percentage increase in raw material costs and output a structured summary.
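The quarter-over-quarter figure is simple arithmetic, so it is worth being able to reproduce it outside the model and check what Claude returns. Here is a minimal Python sketch of that calculation. The column names `Date` and `Amount` are assumptions for illustration, not the real Xero export layout, and the sketch compares total spend per quarter, which only tracks price if your purchase volumes are roughly stable.

```python
import csv
import io
from collections import defaultdict
from datetime import date


def quarterly_totals(csv_text, date_col="Date", amount_col="Amount"):
    """Sum spend per (year, quarter) from CSV text.

    Column names are hypothetical; adjust them to match your export.
    Dates are assumed to be ISO formatted (YYYY-MM-DD).
    """
    totals = defaultdict(float)
    for row in csv.DictReader(io.StringIO(csv_text)):
        d = date.fromisoformat(row[date_col])
        quarter = (d.year, (d.month - 1) // 3 + 1)
        totals[quarter] += float(row[amount_col])
    return dict(sorted(totals.items()))


def qoq_changes(totals):
    """Percentage change of each quarter against the previous one in the data.

    Assumes every quarter has at least one row, so neighbouring keys
    are consecutive quarters. Quarters following a zero total are skipped.
    """
    quarters = list(totals)
    changes = {}
    for prev, curr in zip(quarters, quarters[1:]):
        if totals[prev] == 0:
            continue
        pct = (totals[curr] - totals[prev]) / totals[prev] * 100
        changes[curr] = round(pct, 1)
    return changes
```

If the numbers from this check and the numbers in the model's summary disagree, treat that as a reason to stop the run rather than publish.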

Claude returns the JSON. Make routes this data into Webflow's CMS, automatically drafting a UK Landscaping Material Cost Index page. Simultaneously, Make pushes the key stats into a Slack channel for your sales rep, and drafts a pitch email in Gmail for industry journalists.
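Before anything is routed onward, it helps to confirm the returned JSON has the shape you asked for. The sketch below shows one way to do that and to fan the result out into three payloads. The field names (`period`, `category`, `qoq_change_pct`, `summary`) and the payload keys are hypothetical. They stand in for whatever schema you define, and the real Make, Webflow, Slack, and Gmail modules have their own formats.

```python
import json

# Hypothetical schema: field name -> accepted Python type(s).
REQUIRED_FIELDS = {
    "period": str,
    "category": str,
    "qoq_change_pct": (int, float),
    "summary": str,
}


def parse_summary(raw):
    """Parse the model's JSON text and reject anything off-schema."""
    data = json.loads(raw)  # raises ValueError on malformed JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for field, expected in REQUIRED_FIELDS.items():
        if field not in data:
            raise ValueError(f"missing field: {field}")
        value = data[field]
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected):
            raise ValueError(f"wrong type for field: {field}")
    return data


def build_payloads(summary):
    """Build one draft per destination from a validated summary."""
    headline = (
        f"{summary['category']} costs changed "
        f"{summary['qoq_change_pct']:+.1f}% in {summary['period']}"
    )
    return {
        "cms_draft": {"title": headline, "body": summary["summary"]},
        "slack_message": headline,
        "email_draft": {"subject": headline, "body": summary["summary"]},
    }
```

Building all three drafts from one validated object keeps the index page, the Slack message, and the pitch email quoting the same number.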

You are looking at 2-3 weeks of build time. It costs £4k-£8k depending on how clean your Xero data is and how many endpoints you need to connect.

The result is tangible. Trade magazines and local news sites write about the rising costs of green spaces. They link to your Index. They cite your MD.

When a user asks Google AI Mode why commercial landscaping quotes are so high right now, Gemini synthesises the answer using the trade magazine's article. That article cites your data. Your brand is positioned as the authoritative source in the AI overview. You have beaten the zero-citation visibility collapse.

This isn't a marketing gimmick. It is an operational pivot. You are taking data you already own, processing it with an LLM, and distributing it to platforms that Google trusts. You bypass the SEO agency entirely and become the primary source.

You have to watch for failure modes. The biggest risk is dirty input data. If your accounts assistant miscategorises a £10,000 machinery purchase as fertiliser in Xero, Claude will calculate a 500% price spike.

You catch this by adding a human-in-the-loop step. Make sends an interactive Slack message with the calculated stats. You click approve before the webhook continues to Webflow and Gmail. The system does the heavy lifting, but you own the final validation.
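The approval step is easier to trust if the system also flags suspicious numbers for you. Here is a rough Python sketch of that gate. The 50% threshold, the function names, and the `publish` callback are all assumptions for illustration, and the figures in the comment are invented to show how a single miscategorised purchase produces a spike of the size described above.

```python
def pct_change(previous, current):
    """Percentage change from previous to current."""
    return (current - previous) / previous * 100


def needs_review(changes, threshold_pct=50.0):
    """Return the periods whose change looks implausibly large.

    The threshold is a hypothetical default; tune it to your own data.
    """
    return {
        period: pct
        for period, pct in changes.items()
        if abs(pct) > threshold_pct
    }


def publish_if_approved(stats, approved, publish):
    """Call publish(stats) only after a human has approved the numbers."""
    if not approved:
        return False
    publish(stats)
    return True


# Illustrative figures only: a normal £2,000 fertiliser quarter, then a
# quarter where a £10,000 machinery purchase lands in the same category.
spike = pct_change(2000, 2000 + 10000)  # 500.0
flagged = needs_review({"Q2": spike, "Q3": 4.2})  # only Q2 is flagged
```

A flagged period does not block the run by itself. It tells the person clicking approve in Slack which number to check against the ledger first.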

Where this breaks down

This approach is powerful, but it isn't universal. You need to check your operational reality before committing to a data-driven PR engine.

If your business is highly visual and hyper-local, like an emergency locksmith or a bespoke joiner, multi-source data PR isn't the priority. Google Local Services Ads and Google Business Profile reviews still dominate those specific AI Overviews. A cost index won't help you rank for a broken lock at 2 AM.

Also, consider your data maturity. If your core operations are still on paper, this dies on day one. If your invoices come in as scanned TIFFs from legacy suppliers, you need OCR first, and the error rate jumps from 1% to ~12%. You can't run an automated PR engine if your accounts team is manually typing numbers into a spreadsheet every Friday afternoon.

Finally, look at your actual reputation. Generative engine optimisation relies on external sentiment. If your product is terrible and your Trustpilot is a graveyard of 1-star reviews, getting cited more often just feeds the AI model more negative context. The LLM will summarise exactly how bad you are. Fix your service delivery before you try to amplify your brand.

The shift to AI search isn't a temporary glitch you can wait out. The days of treating your website as an isolated island of self-proclaimed expertise are over. The question isn't whether you need to optimise for AI. It's whether you have the operational discipline to extract the hard data hidden in your accounting software, because that is the only currency these new answer engines actually value. Stop paying for generic blog posts that nobody reads. Start publishing the truth about your market. Build systems that push your insights onto the platforms the algorithm already trusts. When the machine looks for an authority to cite, make sure you are the one holding the data.
