The structural failure of departmental AI and the archipelago tax

Walk through your office right now. Look at the screens.

Your ops manager is paying £25 a month for a ChatGPT subscription to clean up messy supplier lists. Your accounts assistant is running a cheap auto-categoriser plug-in for Xero. Your sales rep is using a Chrome extension to draft follow-up emails in Gmail.

Everyone is busy doing AI. Everyone feels highly productive. The Slack channels are full of screenshots showing how fast a tool wrote a proposal or summarised a meeting.

But look at your P&L. Look at your actual operational output.

The headcount is exactly the same. The time taken to close month-end is exactly the same. The error rate on dispatch is exactly the same.

You are buying software subscriptions, but you aren't buying growth. You are treating AI as a personal productivity tool for individual staff members, not a structural upgrade to your business engine.

It's a mess. Nobody knows why the promised efficiency hasn't arrived. End of.

Here's what actually happens when you let departments buy their own AI tools.

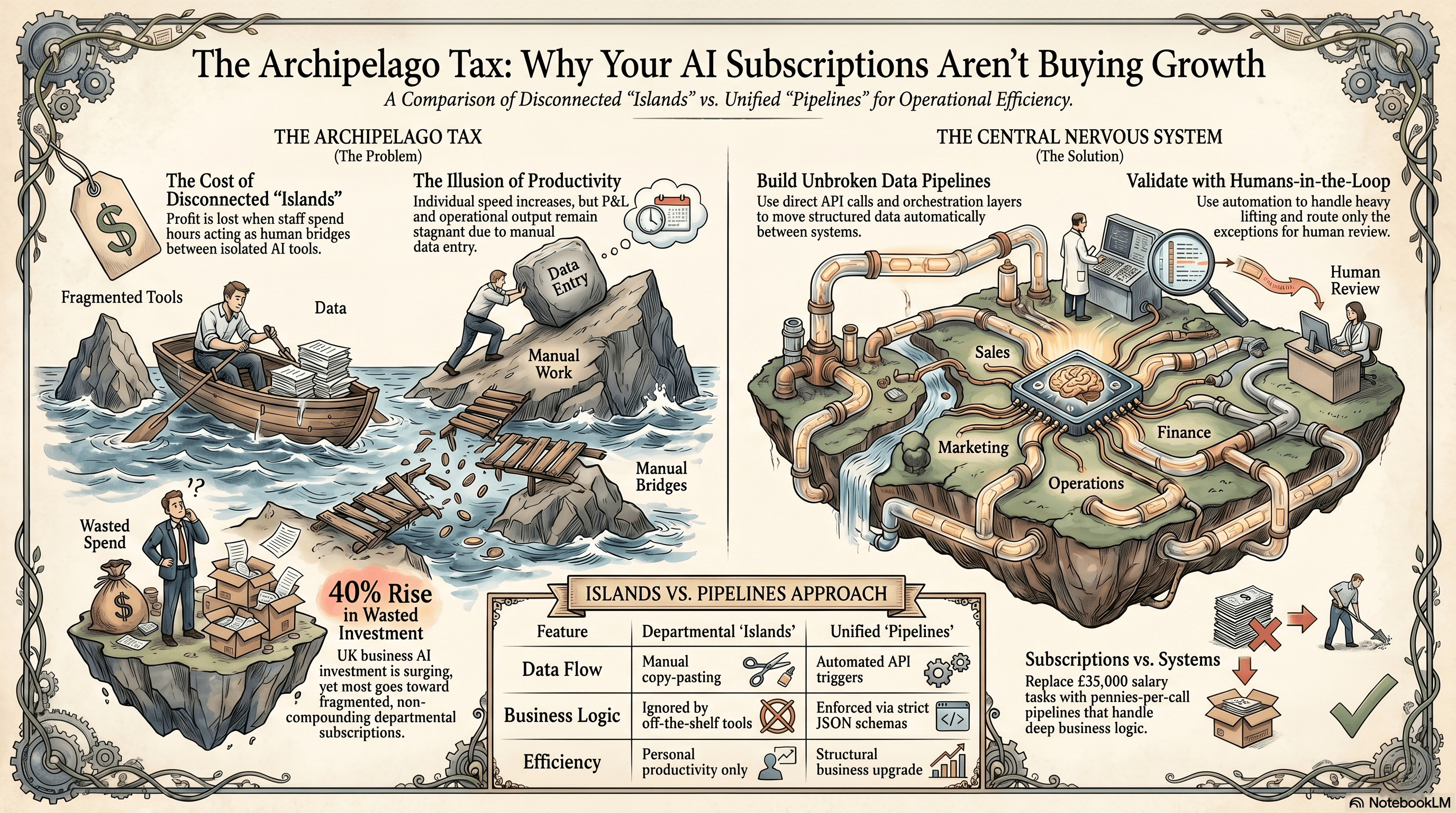

The archipelago tax

The archipelago tax occurs when departments buy isolated AI tools, forcing staff to waste hours acting as human bridges between disconnected software islands.

The archipelago tax is the structural loss of profit you suffer when you buy separate AI tools for different departments instead of building a single connected system. You pay for the software, but you lose the compounding value of shared data.

SAP data shows UK business investment in AI is set to rise by 40 percent next year [source](https://news.sap.com/uk/2025/10/uk-business-investment-in-ai-to-rise-by-40-percent/). I look at that number and wince. Most of that money will be wasted. Founders sign off on a dozen fragmented subscriptions because a department head asked for them. You want to support your team. You want to be a modern business. So you hand over the company card.

The problem is that business value doesn't live inside a single department. A sales win in HubSpot means nothing until it becomes an active project in Notion. That project means nothing until it becomes a paid invoice in Xero.

When your AI tools sit on isolated islands, they can't talk to each other. The ops team cleans data in Claude, then manually copies it into a spreadsheet. The finance team downloads that spreadsheet and feeds it into their own isolated tool.

You haven't removed the manual work. You've just changed the shape of it. The archipelago tax is paid in the hours your staff spend acting as human bridges between disconnected AI tools.

You end up with a faster sales team generating proposals that the delivery team can't read. You get a delivery team generating project data that the finance team has to manually re-type. The speed of the individual islands doesn't matter. The speed of the whole system is dictated by the manual bridges connecting them.
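
Here's a rough sketch of that arithmetic. The stage names and minute values are invented for illustration. They aren't measurements from any real business.

```python
# Hypothetical minutes per order at each step. All figures are illustrative.
BEFORE_AI = {
    "draft proposal": 45,
    "re-key proposal into project tool": 20,
    "deliver project admin": 60,
    "re-type project data into accounts": 25,
}

# Departmental AI speeds up the islands but leaves the manual bridges alone.
AFTER_AI = {**BEFORE_AI, "draft proposal": 5, "deliver project admin": 30}

# The steps where a human carries data from one tool to the next.
BRIDGES = {"re-key proposal into project tool", "re-type project data into accounts"}


def total_minutes(stages: dict[str, int]) -> int:
    """End-to-end handling time for one order."""
    return sum(stages.values())


def bridge_share(stages: dict[str, int]) -> float:
    """Fraction of the end-to-end time spent on manual bridges."""
    bridge_time = sum(minutes for name, minutes in stages.items() if name in BRIDGES)
    return bridge_time / total_minutes(stages)


print(total_minutes(BEFORE_AI), round(bridge_share(BEFORE_AI), 2))  # 150 0.3
print(total_minutes(AFTER_AI), round(bridge_share(AFTER_AI), 2))    # 80 0.56
```

In this made-up case the islands got much faster, yet the bridges went from 30 percent of the cycle to 56 percent. The 45 minutes of copying and re-typing did not move at all.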

Why the obvious fix fails

The obvious fix fails because stitching disconnected AI tools together with Zapier collapses under the weight of real business logic. People assume they can just connect their fragmented apps and the data will flow. It doesn't.

The standard advice is to use Zapier to glue your new AI subscriptions together. A new lead comes into Pipedrive, Zapier pings ChatGPT to draft an email, and then sends it via Outlook. It sounds great on a whiteboard.

Here's what actually happens. Zapier is built for simple, linear triggers. It struggles with deep, nested data structures. When your Xero supplier has a custom contact field two levels deep, the automation can silently write a null value.

The search steps are just as brittle. If a supplier name has a typo, Zapier's Find step fails to match, the zap skips the AI processing, and the field is left blank. You only notice at month-end when the reconciliation fails.
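
The silent-null pattern is easy to reproduce outside any particular tool. This is a minimal Python sketch of it, not a model of Zapier's internals. The supplier record and its field names are invented for illustration.

```python
from typing import Any

# Hypothetical supplier record; the field names are invented for illustration.
supplier = {
    "name": "Acme Corp",
    "contact": {"custom_fields": {"payment_terms": "30 days"}},
}


def lenient_get(record: dict[str, Any], *path: str) -> Any:
    """Walk a nested path and return None on any miss. This is the silent failure."""
    current: Any = record
    for key in path:
        if not isinstance(current, dict) or key not in current:
            return None
        current = current[key]
    return current


def strict_get(record: dict[str, Any], *path: str) -> Any:
    """Walk the same path but raise, so a bad mapping stops the run."""
    current: Any = record
    for depth, key in enumerate(path):
        if not isinstance(current, dict) or key not in current:
            missing = ".".join(path[: depth + 1])
            raise KeyError(f"missing field: {missing}")
        current = current[key]
    return current


# A one-word mapping mistake ("custom" instead of "custom_fields"):
print(lenient_get(supplier, "contact", "custom", "payment_terms"))  # None
```

The lenient version hands a blank downstream and carries on. The strict version fails on the spot, which is what you want when the next step writes to your accounts.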

Now you have a broken system. You're paying for the AI subscriptions. You're paying for the Zapier premium plan. You're paying an accounts assistant to manually check the AI's work. The automation has created more work than it saved. And yes, that's annoying.

The other popular fix is buying a massive, off-the-shelf AI suite that promises to do everything. You buy an enterprise licence for Microsoft 365 Copilot. You hope it'll magically read your messy SharePoint folders and sort your operations.

It fails for the exact same reason. Off-the-shelf AI doesn't know your specific operational rules. It doesn't know that a supplier invoice from Acme Corp needs to be split across three different cost centres based on the project code.
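
A rule like that is a few lines of code once someone writes it down. The sketch below is an assumption about what such a rule might look like. The project code, cost centre names, and percentages are all hypothetical.

```python
from decimal import Decimal, ROUND_HALF_UP

# Hypothetical rule table: project code -> (cost centre, share) pairs.
SPLIT_RULES = {
    "PRJ-100": [
        ("OPS", Decimal("0.50")),
        ("SALES", Decimal("0.30")),
        ("ADMIN", Decimal("0.20")),
    ],
}


def split_invoice(total: Decimal, project_code: str) -> dict[str, Decimal]:
    """Split an invoice total across cost centres, keeping the pennies exact."""
    rules = SPLIT_RULES[project_code]  # KeyError on an unknown code is deliberate
    penny = Decimal("0.01")
    shares: dict[str, Decimal] = {}
    allocated = Decimal("0")
    for centre, fraction in rules[:-1]:
        amount = (total * fraction).quantize(penny, rounding=ROUND_HALF_UP)
        shares[centre] = amount
        allocated += amount
    # The last centre takes the remainder so the parts always sum to the total.
    shares[rules[-1][0]] = total - allocated
    return shares


print(split_invoice(Decimal("1000.01"), "PRJ-100"))
```

No off-the-shelf assistant ships with this table. It lives in your finance lead's head until you put it in the pipeline.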

You end up with a very expensive chatbot that gives generic answers about your company data. It can't execute actions. It can't update your databases. It just sits there, costing you money while the underlying manual work remains untouched. A £25-a-month ChatGPT subscription can't replace a £35,000 salary, and the reason is simple: consumer AI lacks operational context.

The approach that actually works

A high-efficiency n8n pipeline uses webhooks, strict JSON schemas, and direct API write requests to automate complex data entry without manual typing.

A working AI system bypasses consumer subscriptions entirely and uses direct API calls to move data through a single, unbroken pipeline. You stop buying isolated tools and start building a central nervous system.

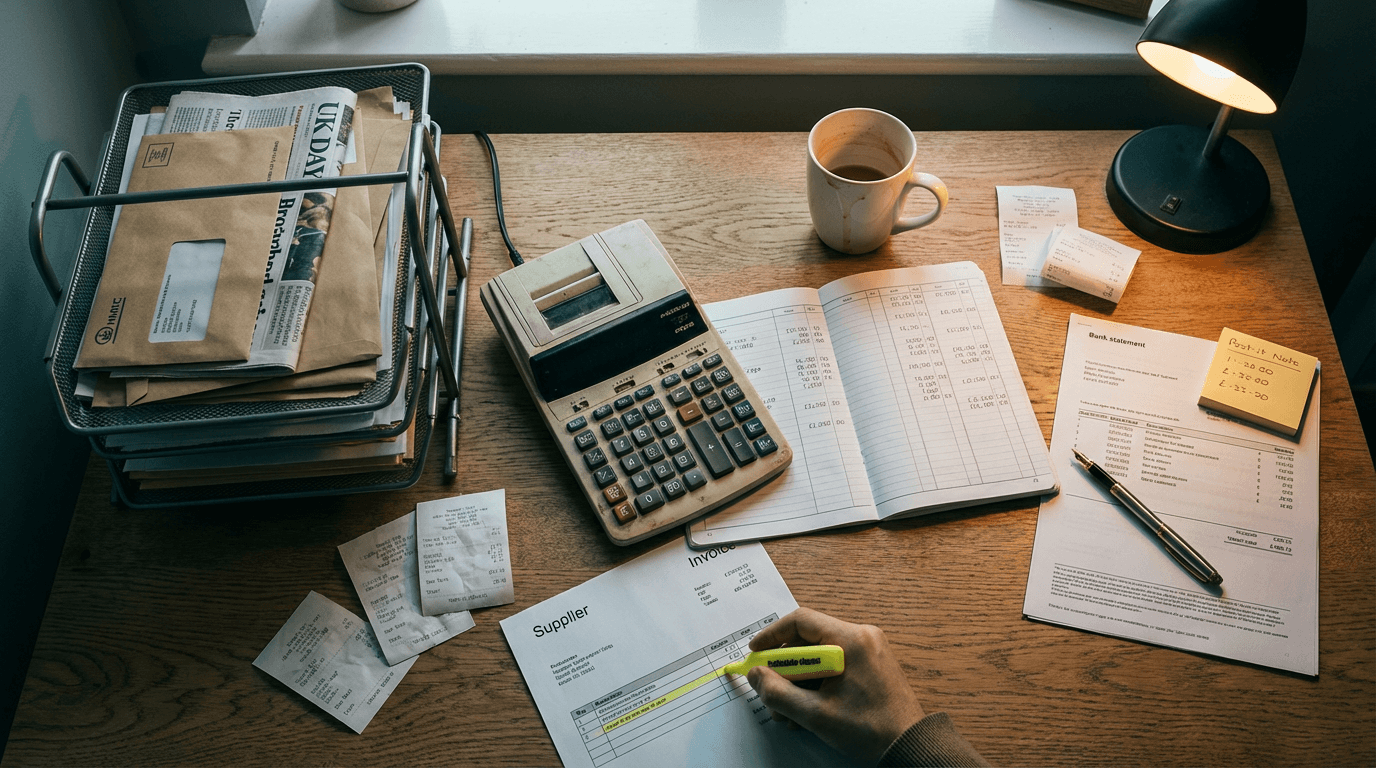

Let's look at a real example of supplier invoice processing. Most SMEs receive a PDF from a supplier via email. A junior analyst manually reads the line items and types them into Xero. The piecemeal approach uses a basic OCR tool that struggles with varied layouts, requiring constant human correction.

The correct approach uses n8n as the orchestration layer. An n8n trigger node watches a specific Gmail inbox and starts the workflow when an email with a PDF attachment lands.

The n8n workflow extracts the PDF and makes a direct API call to Claude. It doesn't ask Claude to just read the document. It passes a strict JSON schema, forcing Claude to return the exact fields you need. You get the supplier name, invoice date, line items, and tax codes in the same shape every time.
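
One way to pass that schema is the tool-use route in Anthropic's Messages API: define a single tool whose `input_schema` is your invoice schema, then force the model to call it. The sketch below only builds the request body and sends nothing. The property names, the tool name `record_invoice`, and the model id are assumptions, so swap in your own.

```python
import base64
import json

# The fields named in the text. The exact property names are assumptions.
INVOICE_SCHEMA = {
    "type": "object",
    "properties": {
        "supplier_name": {"type": "string"},
        "invoice_date": {"type": "string", "description": "ISO 8601 date, YYYY-MM-DD"},
        "line_items": {
            "type": "array",
            "items": {
                "type": "object",
                "properties": {
                    "description": {"type": "string"},
                    "quantity": {"type": "number"},
                    "unit_amount": {"type": "number"},
                    "tax_code": {"type": "string"},
                },
                "required": ["description", "quantity", "unit_amount", "tax_code"],
            },
        },
    },
    "required": ["supplier_name", "invoice_date", "line_items"],
}


def build_extraction_request(pdf_bytes: bytes, model: str) -> dict:
    """Build a Messages API body that forces the reply through one tool."""
    return {
        "model": model,
        "max_tokens": 2048,
        "tools": [{
            "name": "record_invoice",  # hypothetical tool name
            "description": "Record the fields extracted from a supplier invoice.",
            "input_schema": INVOICE_SCHEMA,
        }],
        # Forcing this tool means the reply arrives as structured tool input.
        "tool_choice": {"type": "tool", "name": "record_invoice"},
        "messages": [{
            "role": "user",
            "content": [
                {"type": "document", "source": {
                    "type": "base64",
                    "media_type": "application/pdf",
                    "data": base64.b64encode(pdf_bytes).decode("ascii"),
                }},
                {"type": "text", "text": "Extract the invoice fields."},
            ],
        }],
    }


# Placeholder bytes and model id, purely to show the shape of the body.
body = build_extraction_request(b"%PDF-1.4 placeholder", model="your-model-id")
print(json.dumps(body["tool_choice"]))
```

The schema fixes the shape of the answer, not the truth of the values inside it.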

Because you enforce a JSON schema, the output is consistently structured. n8n takes that structured data and sends an update request to the Xero API (a POST to the Invoices endpoint in Xero's Accounting API) to write the invoice line items directly. No human typing is required. The workflow parses the JSON and updates Xero in seconds.
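
Here is a sketch of that write step in plain Python, outside n8n. It builds the request without sending it. As far as I know, Xero's Accounting API takes invoice updates as a POST to the Invoices endpoint, so that is the default here, but treat the method, the header names, and the field mapping as assumptions to check against the current API reference. The invoice ID, token, and tenant ID are placeholders.

```python
import json
import urllib.request

XERO_INVOICES_URL = "https://api.xero.com/api.xro/2.0/Invoices"


def build_xero_update(invoice_id, line_items, access_token, tenant_id, method="POST"):
    """Build (but do not send) a request that writes an invoice's line items."""
    payload = {
        "InvoiceID": invoice_id,
        "LineItems": [
            {
                # Map the extracted fields onto Xero-style line item names.
                "Description": item["description"],
                "Quantity": item["quantity"],
                "UnitAmount": item["unit_amount"],
                "TaxType": item["tax_code"],
            }
            for item in line_items
        ],
    }
    return urllib.request.Request(
        url=f"{XERO_INVOICES_URL}/{invoice_id}",
        data=json.dumps(payload).encode("utf-8"),
        method=method,
        headers={
            "Authorization": f"Bearer {access_token}",
            "Xero-tenant-id": tenant_id,
            "Content-Type": "application/json",
            "Accept": "application/json",
        },
    )


# Placeholder values, for illustration only.
request = build_xero_update(
    invoice_id="00000000-0000-0000-0000-000000000000",
    line_items=[{"description": "Widgets", "quantity": 2, "unit_amount": 9.5, "tax_code": "INPUT2"}],
    access_token="ACCESS_TOKEN",
    tenant_id="TENANT_ID",
)
print(request.get_method(), request.full_url)
```

In n8n the same thing is an HTTP Request node or the built-in Xero node. The point is that the write is one well-defined request, not a person with two browser tabs open.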

This isn't magic. It's just basic data engineering applied to small business operations.

This is a reliable system. It takes about two to three weeks of build time. Depending on your existing integrations, it costs between £6,000 and £12,000 to set up.

It replaces a £35,000 salary task with a few pence per API call. But you have to handle the failure modes. The most common issue is the AI hallucinating a tax code that doesn't exist in your accounting software.

You catch this by adding a validation step in n8n. Before the workflow sends the update request to Xero, it checks the extracted tax code against a hardcoded list of your actual Xero tax rates.

If it fails the check, it routes the invoice to a Slack channel for human review. The AI does the heavy lifting. The automation handles the routing. The human only steps in for the exceptions.
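
The check and the routing fit in a handful of lines. This is a minimal sketch, assuming the extracted invoice is a dict with a `line_items` list. The allow-list below is a hypothetical example, so copy the real tax types from your own Xero organisation.

```python
# Hypothetical allow-list; in practice, copy the tax types from your own Xero org.
VALID_TAX_CODES = frozenset({"INPUT2", "ZERORATEDINPUT", "EXEMPTINPUT", "NONE"})


def unknown_tax_codes(invoice: dict) -> list[str]:
    """Return the tax codes on this invoice that are not in the allow-list."""
    seen = {item.get("tax_code", "<missing>") for item in invoice["line_items"]}
    return sorted(seen - VALID_TAX_CODES)


def route(invoice: dict) -> tuple[str, str]:
    """Return (destination, reason). Only clean invoices go on to Xero."""
    bad = unknown_tax_codes(invoice)
    if bad:
        return "slack_review", f"unknown tax code(s): {', '.join(bad)}"
    return "xero", "all tax codes recognised"


clean = {"line_items": [{"tax_code": "INPUT2"}, {"tax_code": "NONE"}]}
suspect = {"line_items": [{"tax_code": "INPUT2"}, {"tax_code": "VAT20"}]}

print(route(clean))    # ('xero', 'all tax codes recognised')
print(route(suspect))  # ('slack_review', 'unknown tax code(s): VAT20')
```

A missing tax code is treated the same as an invented one. Both go to a human.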

You can apply this exact same pipeline logic to your sales operations. A customer submits a complex technical query via your website. Instead of a sales rep spending forty minutes researching the answer, n8n queries your internal Supabase database. It passes the technical specs to Claude, which drafts a response grounded in those specs and saves it in Pipedrive.

The human still clicks send, but the research phase drops from forty minutes to four seconds. That's how you buy actual growth. You build pipelines, not islands.
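
The research step is mostly a lookup plus a tightly scoped prompt. In the sketch below, `sqlite3` stands in for the Supabase (Postgres) database so the code runs anywhere. The table, column names, and product data are hypothetical, and the model call itself is left out.

```python
import sqlite3


def load_specs(conn: sqlite3.Connection, product_code: str) -> dict[str, str]:
    """Fetch the spec sheet for one product as a name -> value mapping."""
    rows = conn.execute(
        "SELECT spec_name, spec_value FROM product_specs "
        "WHERE product_code = ? ORDER BY spec_name",
        (product_code,),
    ).fetchall()
    return dict(rows)


def build_draft_prompt(question: str, specs: dict[str, str]) -> str:
    """Give the model only the retrieved specs, and tell it to stay inside them."""
    spec_lines = "\n".join(f"- {name}: {value}" for name, value in specs.items())
    return (
        "Draft a reply to the customer question below.\n"
        "Use only these specifications. If they do not cover the question, say so.\n\n"
        f"Specifications:\n{spec_lines}\n\n"
        f"Customer question:\n{question}"
    )


# Hypothetical data, loaded into an in-memory database for the demo.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE product_specs (product_code TEXT, spec_name TEXT, spec_value TEXT)")
conn.executemany(
    "INSERT INTO product_specs VALUES (?, ?, ?)",
    [("PUMP-20", "max_pressure", "16 bar"), ("PUMP-20", "inlet_size", "25 mm")],
)

prompt = build_draft_prompt("Will it run at 12 bar?", load_specs(conn, "PUMP-20"))
print(prompt)
```

The draft is only as good as the rows you retrieve, which is why the rep still reads it before clicking send.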

Where this breaks down

This continuous data pipeline breaks down completely if your underlying inputs are locked in legacy formats that require physical human checks. API calls and JSON schemas can't fix paper.

If your invoices come in as scanned TIFFs from a legacy accounting system, you have a problem. The AI can't read a blurry, low-resolution scan with the same accuracy as a digital PDF.

You need to run an OCR layer first to extract the raw text. Once you do this, the error rate jumps from 1 percent to around 12 percent. You suddenly need a human in the loop just to verify the text extraction. This defeats the entire purpose of the pipeline.
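
To see what that jump means in practice, run the two error rates from above against a monthly volume. The 500 invoices a month is a hypothetical figure, so plug in your own.

```python
def expected_corrections(invoices_per_month: int, error_percent: int) -> float:
    """Expected number of invoices per month that contain an extraction error."""
    return invoices_per_month * error_percent / 100


VOLUME = 500  # hypothetical monthly invoice count

digital = expected_corrections(VOLUME, 1)   # clean digital PDFs
scanned = expected_corrections(VOLUME, 12)  # scans run through OCR first

print(digital, scanned)  # 5.0 60.0
```

Five exceptions a month is a Slack channel. Sixty is a job, and you don't know in advance which sixty, so someone ends up checking all of them.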

You also hit a wall if your process requires physical verification. If your warehouse team needs to open a box, count the physical widgets, and sign a piece of paper, an API call to Claude won't help you.

Don't try to automate a broken physical process. Fix the physical data capture first. Get your suppliers sending digital PDFs. Get your warehouse using tablets to log inventory in Airtable.

Only then should you start building the pipeline. AI is an amplifier. If you feed it a broken, manual process, it'll just break faster.

Three questions to sit with

- How many separate AI subscriptions is your team currently paying for across different departments without a shared data structure?

- When a piece of data is processed by one of these AI tools, does a human have to manually copy the result into your core database?

- If you cancelled every departmental AI subscription tomorrow, would your core operational metrics actually change, or just the feelings of your staff?
