Navigating the Shadow Processor Liability in UK-EU Cross-Border Trade

Your sales director forwards an email from your biggest Italian distributor. They are asking for your AI processing addendum. You don't have one. You use ChatGPT to draft quotes and Zapier to push inbound leads into Pipedrive. Nobody in your business thought a local law passed in Rome last October would touch a 40-person engineering firm in Leeds.

But it does. You're now scrambling to map exactly which cloud servers read your European clients' data. You check OpenAI's terms. You check Zapier's terms. You realise you have no idea where the data actually goes once it leaves your inbox. It's a mess, and nobody in the business can explain how it got that way.

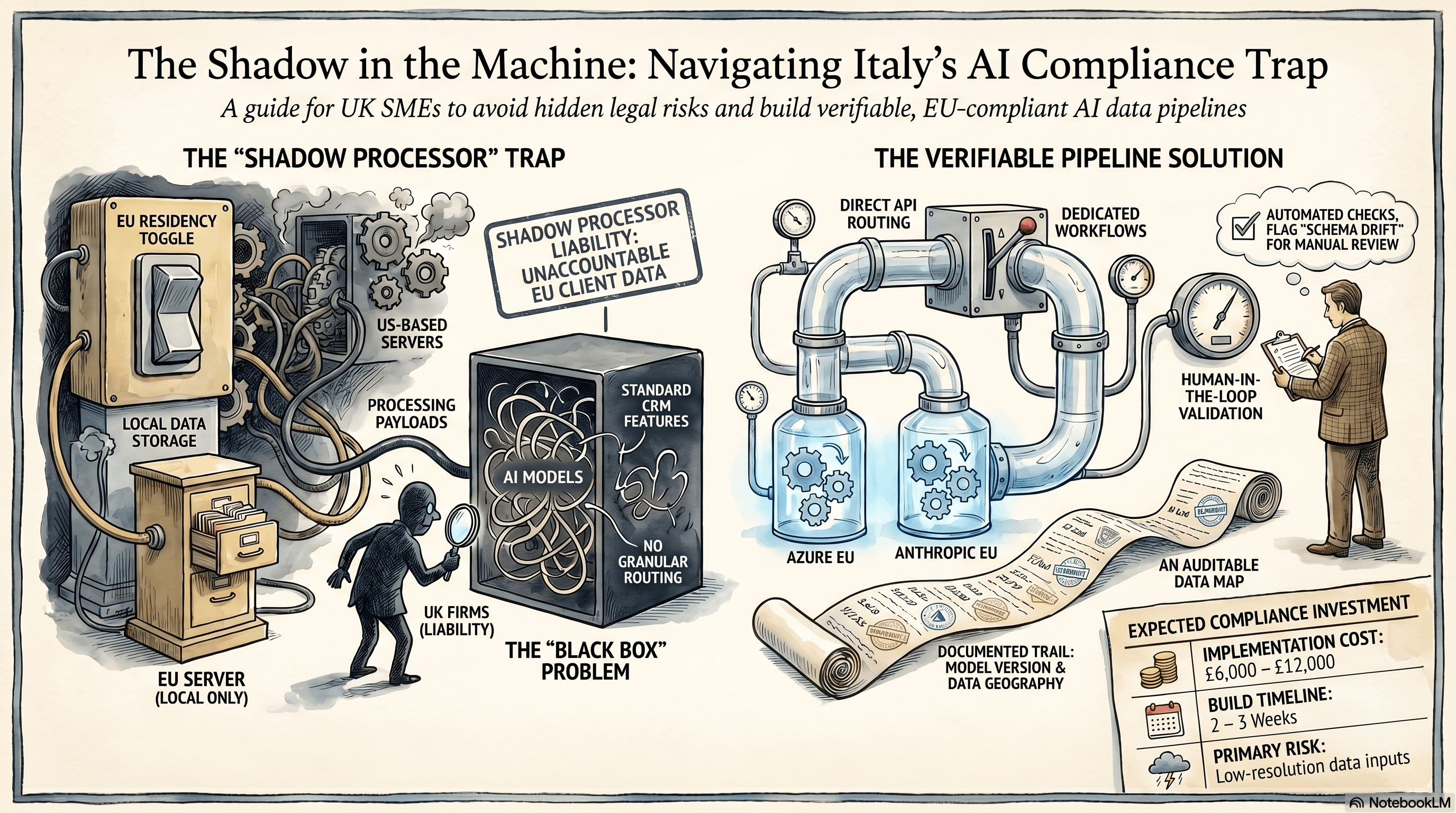

The shadow processor liability

The legal pathway where UK firms become liable for third-party AI processing when handling Italian customer data through unmapped US-based SaaS integrations.

The shadow processor liability is the legal and financial exposure UK businesses carry when their everyday SaaS tools run European client data through unmapped AI models.

You pay for a software subscription. That software uses a language model under the hood. You process a standard purchase order from a client in Milan. You're now legally responsible for how that hidden model handled their commercial data.

Italy's October 2025 AI Governance Framework made this exact scenario a compliance issue [source](https://www.italy-ai-regulation.gov.it). It forces any supplier dealing with Italian companies to document exactly which AI systems touch their operations. It removes the excuse of ignorance.

This hits UK SMEs hard because of cross-border trade. You might think you're just a domestic supplier. But if your components end up in Italian supply chains, or you sell digital services to Italian users, the framework's documentation duties reach you too.

The problem persists because the tools make the processing invisible. When your ops manager clicks "summarise" in Notion, or lets HubSpot draft an email reply, no compliance warning flashes up. The data just leaves your controlled environment.

You are the data controller. The Italian regulators don't care that Zapier or Microsoft built the feature. They care that you used it on their citizens' data without a mapped governance trail. The fines are real, and the loss of client trust is immediate.

Why the vendor compliance toggle is a trap

Relying on your SaaS vendor's data residency toggle is a trap because it only protects data at rest, not the API payloads sent to US servers for processing.

The immediate reaction to the Italy AI law is to check your software vendors' settings. You log into Make or Zapier, find the "EU Data Residency" toggle, and flick it on. You assume this keeps your cross-border trade compliant.

It doesn't. Here's what actually happens.

That toggle only controls where your data sits in a database. It means your Zapier run history is stored on a server in Frankfurt. But when that Zapier flow triggers an OpenAI step, the API payload still fires across the Atlantic to an inference server in California.

The data is processed outside the EU. The shadow processor liability remains entirely yours.

I see this pattern constantly. UK founders assume that because they buy enterprise software, the compliance is handled for them. But vendor terms of service typically push the liability for API data transfers back onto you, so read the clause before you rely on the toggle.

If an Italian auditor asks for your AI processing map, a screenshot of a Zapier settings page will fail the check. They want to know exactly which model version processed the data, where the inference happened, and whether the data was used for model training.
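To make those three questions concrete, here is a minimal sketch of a processing map kept as plain records. The class name, field names, and the two example entries are illustrative assumptions, not a format the Italian framework prescribes. The point is that any field you can't fill in is a gap an auditor will find.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class ProcessingEntry:
    """One AI touchpoint in the business (hypothetical structure)."""
    tool: str                           # SaaS feature or workflow step
    model_version: Optional[str]        # exact model identifier, if known
    inference_region: Optional[str]     # where inference ran, if known
    used_for_training: Optional[bool]   # None means the vendor has not confirmed


def unanswered(entry: ProcessingEntry) -> List[str]:
    """Return the names of the fields that are still unknown (None)."""
    return [f.name for f in fields(entry) if getattr(entry, f.name) is None]


# Illustrative entries only; the values are placeholders.
entries = [
    ProcessingEntry("CRM email drafting", None, None, None),
    ProcessingEntry("invoice extraction pipeline", "example-model-v1", "eu-central", False),
]

for entry in entries:
    gaps = unanswered(entry)
    print(entry.tool, "->", gaps if gaps else "fully mapped")
```

A built-in CRM feature usually ends up looking like the first entry: three unknowns and no way to fill them in.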

Standard off-the-shelf automation can't answer those questions. The built-in AI steps in your CRM or helpdesk are black boxes. You can't control the API headers. You can't force a zero-retention policy. You just send the data and hope.

Upgrading to a ChatGPT Team subscription doesn't fix this either. It stops OpenAI training on your data, but it doesn't give you the granular, per-region routing control that the new regulations demand.

Building a compliant, verifiable pipeline

A technical architecture showing n8n routing data through EU-specific API endpoints to maintain full jurisdictional control and a verifiable audit trail.

The only way to guarantee compliance is to replace black-box SaaS features with a dedicated pipeline where you control the API headers and data routing.

You need to prove exactly what happens to an Italian counterparty's data from the second it hits your inbox. Let's walk through a real example: processing inbound PDF invoices from an Italian supplier into Xero.

Instead of using a generic Zapier email parser, you use n8n hosted on an EU server. An n8n trigger picks up the email and the workflow extracts the PDF attachment.

n8n then makes a direct API call to Claude 3.5 Sonnet. This is the critical part. Because you are using the raw API, you can enforce strict parameters. You send the PDF with a specific JSON schema to extract the invoice number, line items, and VAT amount.
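As a rough sketch of what "a specific JSON schema" could look like, the block below defines one in JSON Schema style and wraps it in an extraction instruction. The field names (`invoice_number`, `line_items`, `vat_amount`, and the line-item keys) and the `build_prompt` helper are assumptions for illustration. The actual request format depends on the model provider's API, which is not shown here.

```python
import json

# Hypothetical schema for the three fields named in the text.
INVOICE_SCHEMA = {
    "type": "object",
    "additionalProperties": False,
    "required": ["invoice_number", "line_items", "vat_amount"],
    "properties": {
        "invoice_number": {"type": "string"},
        "line_items": {
            "type": "array",
            "items": {
                "type": "object",
                "additionalProperties": False,
                "required": ["description", "quantity", "unit_price"],
                "properties": {
                    "description": {"type": "string"},
                    "quantity": {"type": "number"},
                    "unit_price": {"type": "number"},
                },
            },
        },
        "vat_amount": {"type": "number"},
    },
}


def build_prompt(schema: dict) -> str:
    """Build the instruction text sent alongside the PDF (illustrative wording)."""
    return (
        "Extract the invoice fields from the attached PDF. "
        "Reply with a single JSON object that matches this schema and nothing else:\n"
        + json.dumps(schema, indent=2)
    )


print(build_prompt(INVOICE_SCHEMA))
```

Setting `additionalProperties` to false is a deliberate choice: it gives a downstream validator grounds to reject a reply that contains an invented field.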

More importantly, you route this API call through Anthropic's EU endpoints, or you use Microsoft Azure's EU-hosted OpenAI models. You control the geography of the inference. The data never leaves the European jurisdiction.
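One way to enforce that geography in code is an allow-list check that runs before any request leaves your workflow. This is a sketch under assumptions: the hostname below is a placeholder, not a real provider endpoint, and you would need to confirm the actual EU regional hostnames with your model provider.

```python
from urllib.parse import urlparse

# Placeholder hostname; replace with the EU endpoint your provider documents.
ALLOWED_EU_HOSTS = {"eu-inference.example.com"}


def assert_eu_endpoint(url: str) -> str:
    """Return the URL unchanged if it targets an approved EU host, else raise."""
    parsed = urlparse(url)
    if parsed.scheme != "https":
        raise ValueError(f"refusing non-HTTPS endpoint: {url}")
    if parsed.hostname not in ALLOWED_EU_HOSTS:
        raise ValueError(f"endpoint host is not on the EU allow-list: {parsed.hostname}")
    return url


# Passes: the host is on the allow-list.
print(assert_eu_endpoint("https://eu-inference.example.com/v1/messages"))

# Fails loudly instead of sending data to an unmapped host.
try:
    assert_eu_endpoint("https://us-inference.example.com/v1/messages")
except ValueError as exc:
    print("blocked:", exc)
```

The check fails closed. A misconfigured URL stops the workflow rather than quietly routing a payload outside the region.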

The n8n workflow receives the JSON response, validates it against your schema, and pushes the clean data into Xero via a standard API POST request.

This approach gives you a complete, auditable trail. If an Italian regulator or a cautious enterprise client asks for your compliance map, you can show them the exact n8n node, the API endpoint, and the zero-retention data agreement with the model provider.
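A trail like that could be as simple as one log record per model call. The sketch below is an assumption about what such a record might hold, not a format any regulator mandates. It stores a SHA-256 hash of the payload rather than the document itself, so the log proves which file was processed without becoming a second copy of the client's data.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(node: str, endpoint: str, model: str,
                 payload: bytes, zero_retention: bool) -> dict:
    """Build one audit entry for a single model call (hypothetical field names)."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "workflow_node": node,
        "endpoint": endpoint,
        "model": model,
        "payload_sha256": hashlib.sha256(payload).hexdigest(),
        "zero_retention_agreed": zero_retention,
    }


# Illustrative values only.
record = audit_record(
    node="Extract invoice fields",
    endpoint="https://eu-inference.example.com/v1/messages",
    model="example-model-v1",
    payload=b"%PDF-1.7 example bytes",
    zero_retention=True,
)
print(json.dumps(record, indent=2))
```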

Building this takes about two to three weeks. Expect to spend £6k to £12k depending on how messy your current inbox rules are and whether you need custom Xero tracking categories.

The main failure mode here is schema drift. The AI model might hallucinate a field name, or the supplier might change their invoice layout so drastically that the vision model gets confused.

You catch this by building a validation step in n8n. If the JSON output doesn't perfectly match your required Xero fields, the workflow stops. It routes the invoice to a Slack channel for manual review. It never silently writes bad data to your ledger.
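The logic of that validation step can be sketched in plain Python. In n8n it would live in a code node or a set of conditional nodes, and the field names here are assumptions carried over for illustration. The `route` function only returns a destination label. The actual Xero and Slack calls are left out.

```python
import json
from typing import List, Tuple

# Hypothetical required fields and their expected Python types.
REQUIRED_FIELDS = {
    "invoice_number": str,
    "line_items": list,
    "vat_amount": (int, float),
}


def validate_invoice(raw: str) -> List[str]:
    """Return a list of problems; an empty list means the reply is usable."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value is not an object"]
    errors = []
    for name, expected in REQUIRED_FIELDS.items():
        if name not in data:
            errors.append(f"missing field: {name}")
        elif isinstance(data[name], bool) or not isinstance(data[name], expected):
            # bool is excluded explicitly because it is a subclass of int.
            errors.append(f"wrong type for field: {name}")
    for name in sorted(data.keys() - REQUIRED_FIELDS.keys()):
        errors.append(f"unexpected field: {name}")
    return errors


def route(raw: str) -> Tuple[str, List[str]]:
    """Send clean output towards Xero and anything else to manual review."""
    errors = validate_invoice(raw)
    return ("manual_review", errors) if errors else ("xero", [])


# A hallucinated field name ("vat" instead of "vat_amount") is caught.
print(route('{"invoice_number": "INV-001", "line_items": [], "vat": 22.0}'))
print(route('{"invoice_number": "INV-001", "line_items": [], "vat_amount": 22.0}'))
```

Rejecting unexpected fields as well as missing ones is what catches a renamed key: the model's reply can look complete and still fail the check.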

The limits of local processing

This pipeline approach fails completely if your incoming data relies on low-resolution scans or legacy on-premise databases without modern APIs.

The dedicated pipeline is highly effective, but you need to check your raw data quality before you start building.

If your Italian clients communicate entirely via unstructured WhatsApp voice notes, or if they send handwritten delivery dockets scanned as low-resolution TIFF files, this system breaks down.

Language models are good, but they can't perform miracles on terrible inputs. If you feed a blurry, heavily compressed scan into an EU-hosted vision model, your error rate jumps from 1% to something closer to 12%.

At that point, the manual review channel in Slack becomes a bottleneck. Your team spends more time correcting the AI than they would have spent doing the data entry from scratch. And yes, that's annoying.
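To see how quickly that bottleneck builds, you can run the two error rates against your own volume. The 500 invoices a month below is a purely hypothetical figure for illustration. Only the 1% and 12% rates come from the text above.

```python
def monthly_reviews(invoices_per_month: int, error_rate: float) -> int:
    """Estimate how many invoices land in the manual review channel each month."""
    return round(invoices_per_month * error_rate)


VOLUME = 500  # hypothetical monthly invoice count; substitute your own

clean_scans = monthly_reviews(VOLUME, 0.01)
blurry_scans = monthly_reviews(VOLUME, 0.12)

print(f"Clean inputs:  {clean_scans} manual reviews a month")
print(f"Blurry inputs: {blurry_scans} manual reviews a month")
```

At that assumed volume, the review queue grows from 5 invoices a month to 60.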

You also need to check your internal tech stack. If you run a legacy, on-premise ERP system that doesn't have a modern REST API, pushing the extracted data back into your database requires custom middleware.

That adds thousands of pounds to the build cost and months to the timeline. Fix your core data storage and integration points before you worry about AI compliance. Don't build a pristine AI pipeline that dumps data into a broken database.

Three questions to sit with

The regulatory landscape across Europe is tightening rapidly. The casual SaaS tools your team adopted last year will face serious audit scrutiny by the end of this year. Take a hard look at your current operational setup.

- If your largest European client asked for a full, documented map of every AI system that touches their commercial data, could you produce it accurately within 48 hours?

- Are you relying on a software vendor's generic "EU Data Residency" toggle while silently sending raw text payloads to US-based inference servers for processing?

- When an automated data extraction inevitably fails on a weird edge case, does your system silently push empty fields into your accounting software, or does it flag the specific error for human review?

Data compliance isn't about avoiding AI entirely. It's about controlling the underlying plumbing. Get the architecture right, understand exactly where the data flows, and these new regulations become a structural moat against your slower competitors.
