Beyond the Klarna Headline: Solving the SME Support Crisis with System Architecture

Read the Klarna headline and your first instinct is probably a mix of envy and panic. Two-thirds of customer service chats handled by AI. Two point three million conversations in a single month. A resolution time cut from eleven minutes to two. They built a system that does the work of seven hundred full-time agents, and it actually maintains their customer satisfaction scores [source](https://www.klarna.com/international/press/klarna-ai-assistant-handles-two-thirds-of-customer-service-chats-in-its-first-month/).

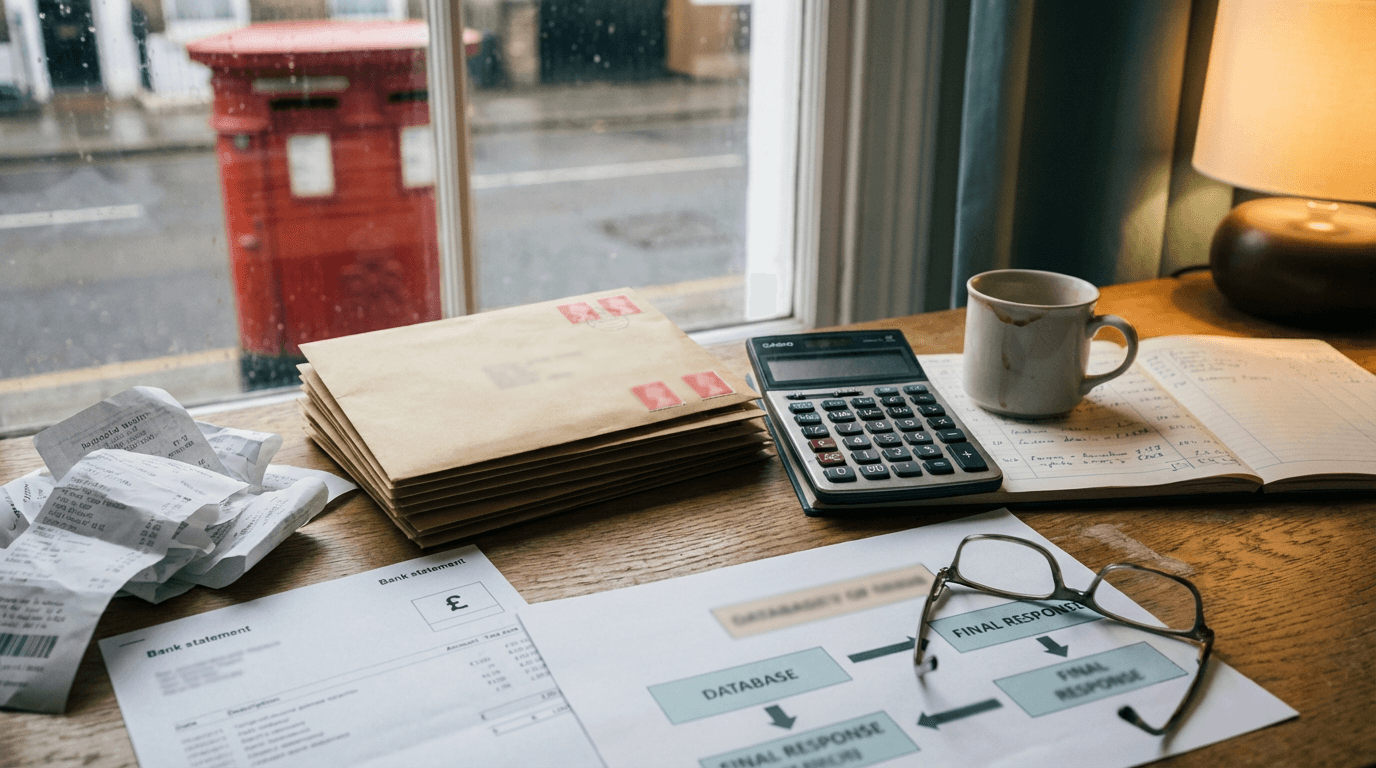

You look at your own support inbox. It's drowning in "Where is my order?" and "Can I change my shipping address?" tickets. Your customer ops lead is exhausted. You pay a team of three to manually cross-reference Zendesk tickets with Shopify order IDs and Stripe payment statuses. The gap between Klarna's automated machine and your manual grind feels unbridgeable.

But the lesson from Klarna isn't about scale. It's about how they structured the data before the AI ever saw a customer message.

The context-free deflection trap

The context-free deflection trap is what happens when a business deploys a chatbot to answer customer queries without giving it direct read-and-write access to the underlying operational databases. This is the exact mistake most UK SMEs make when they try to replicate enterprise AI success. They buy an off-the-shelf AI add-on for their helpdesk, point it at a few PDF FAQs, and expect magic.

It doesn't work. The AI can tell a customer your return policy is thirty days. It can't tell them if their specific return has been processed, because it can't see into your warehouse management system.

Klarna didn't just train a large language model on their help centre articles. They integrated the AI deeply into their proprietary systems. When a customer asks about a refund, the assistant checks the actual ledger. It performs the exact same lookups a human agent would perform.

When an SME owner reads that Klarna expects a forty-million-dollar profit improvement this year from this system [source](https://www.klarna.com/international/press/klarna-ai-assistant-handles-two-thirds-of-customer-service-chats-in-its-first-month/), they assume the secret is a better language model. It isn't. The secret is system architecture.

Klarna is operating in twenty-three markets and thirty-five languages [source](https://www.klarna.com/international/press/klarna-ai-assistant-handles-two-thirds-of-customer-service-chats-in-its-first-month/). They didn't achieve that by writing thirty-five different versions of a prompt. They achieved it by separating the language translation from the data retrieval. The AI translates the customer's intent into a system query, runs the query, and then translates the raw data back into the customer's native language.
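That separation can be sketched in a few lines. This is a minimal illustration, not Klarna's implementation: `ParsedRequest`, `handle_message`, and the three injected functions are hypothetical names, and in a real build `parse` and `render` would be model calls while `run_query` would hit your own systems.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class ParsedRequest:
    intent: str              # e.g. "order_status"
    params: Dict[str, str]   # entities pulled from the message
    language: str            # language the customer wrote in, e.g. "de"


def handle_message(
    message: str,
    parse: Callable[[str], ParsedRequest],
    run_query: Callable[[str, Dict[str, str]], dict],
    render: Callable[[dict, str], str],
) -> str:
    """Language work happens at the edges; the lookup in the middle is plain code."""
    request = parse(message)                               # language -> structured intent
    data = run_query(request.intent, request.params)       # deterministic lookup
    return render(data, request.language)                  # raw data -> customer's language
```

The point of the shape is that `run_query` never changes when you add a language. Only the two outer steps deal with wording.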

You can't deflect a ticket if the bot doesn't know the answer. If the bot only knows generic policies, the customer just gets frustrated and demands a human. The repeat inquiry rate goes up, not down.

Klarna saw a twenty-five percent drop in repeat inquiries [source](https://www.klarna.com/international/press/klarna-ai-assistant-handles-two-thirds-of-customer-service-chats-in-its-first-month/). That only happens when the machine actually solves the problem on the first try. To do that, the machine needs to know exactly who the customer is, what they bought, and where that item is in the physical world. If you skip the integration layer, you're just building an expensive barrier between your customers and your staff.

Why native helpdesk AI add-ons fail at transactional support

Native helpdesk AI add-ons fail at transactional support because they rely on semantic search across static documents rather than executing API calls to your operational tools. The obvious fix for an SME is to turn on the AI feature inside Zendesk or Intercom. You pay an extra twenty pounds per seat, upload your shipping policy, and assume the tier-one triage is sorted.

The pattern I keep seeing is that these native tools excel at answering "How do I reset my password?" but completely fail at "Why was I charged twice?".

Here's the exact mechanism of that failure. A customer emails to say their subscription box is missing. The native helpdesk AI reads the intent. It searches your knowledge base for "missing box". It replies with a polite, grammatically perfect summary of your lost-in-transit policy.

The customer doesn't care about your policy. They want to know where their specific box is.

To answer that, the system needs to take the customer's email address, query Stripe to confirm the subscription is active, query Shopify to get the latest fulfillment ID, and query the Royal Mail API to get the tracking status. A text-generation tool can't do this on its own. Something has to run that sequence of API calls on its behalf.
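As a rough sketch of that chain, assuming you wrap each provider behind your own small function: `get_subscription`, `get_latest_fulfillment`, and `get_tracking_status` are hypothetical stand-ins, not real Stripe, Shopify, or Royal Mail client calls, and the dictionary keys are illustrative.

```python
from typing import Callable, Optional


def locate_box(
    email: str,
    get_subscription: Callable[[str], Optional[dict]],
    get_latest_fulfillment: Callable[[str], Optional[dict]],
    get_tracking_status: Callable[[str], str],
) -> dict:
    """Run the same three lookups a human agent would, stopping at the first gap."""
    subscription = get_subscription(email)            # payments lookup
    if subscription is None or subscription.get("status") != "active":
        return {"resolved": False, "reason": "no_active_subscription"}

    fulfillment = get_latest_fulfillment(email)        # shop lookup
    if fulfillment is None:
        return {"resolved": False, "reason": "no_fulfillment"}

    tracking_number = fulfillment["tracking_number"]
    status = get_tracking_status(tracking_number)      # carrier lookup
    return {"resolved": True, "tracking_number": tracking_number, "status": status}
```

Each early return names the reason the chain stopped, so whatever drafts the reply knows which question to ask the customer instead of guessing.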

Standard Zapier flows break down here too. Zapier is built for linear triggers and actions. Customer support isn't linear. A customer might ask two questions in one email, or reply to an old thread with a new problem.

If you try to build a Zapier flow that parses an inbound email, extracts an order number, and looks it up, you hit a wall the moment the customer makes a typo. The Zapier 'Find' step fails, the automation silently stops, and the customer sits in the dark.
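One way to soften the typo problem is to normalise whatever the customer typed before you look anything up, and to return an explicit "nothing found" rather than failing silently. The sketch below assumes order numbers are two letters followed by at least four digits (for example UK-88492); that format is an assumption, so adjust the pattern to your own scheme.

```python
import re
from typing import Optional

# Assumed format: two letters, an optional separator, then four or more digits.
ORDER_PATTERN = re.compile(r"\b([A-Za-z]{2})[\s\-_#]*(\d{4,})\b")


def extract_order_number(text: str) -> Optional[str]:
    """Return a canonical order number like 'UK-88492', or None if none is found."""
    match = ORDER_PATTERN.search(text)
    if match is None:
        return None  # caller should branch to a "please confirm your order number" reply
    prefix, digits = match.groups()
    return f"{prefix.upper()}-{digits}"


print(extract_order_number("where is my order uk 88492?"))
print(extract_order_number("Order #UK88492 still hasn't arrived"))
print(extract_order_number("my parcel is late"))
```

A loose pattern like this will sometimes pick up the wrong string, which is fine as long as the next step verifies the number against the shop and has a branch for "not found".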

You end up paying for an AI tool that just acts as an expensive, conversational search bar for your FAQs. The human agents still have to step in to do the actual database lookups. The context-free deflection trap strikes again.

Building a deterministic retrieval system with n8n and Claude

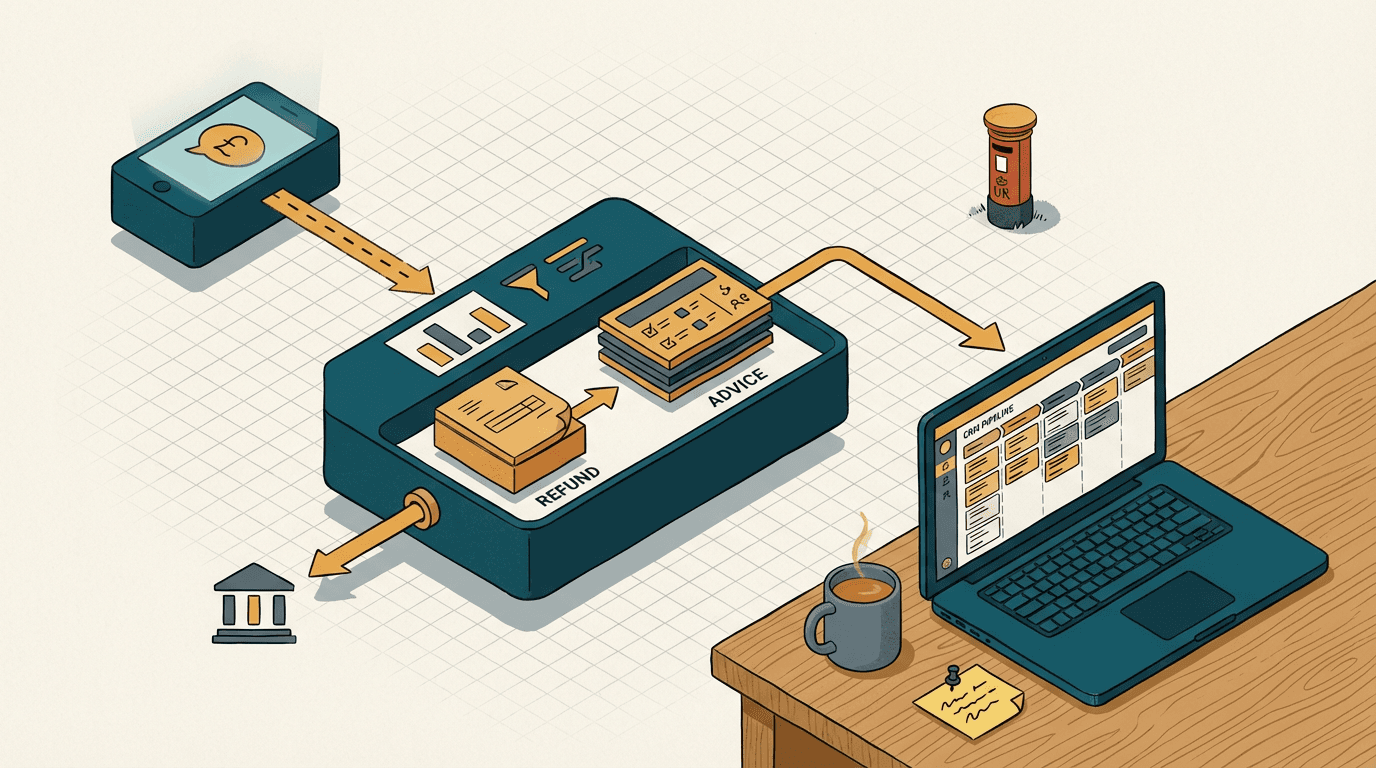

A technical workflow using n8n to verify orders and generate labels, ensuring the AI only communicates based on verified live data.

Building a deterministic retrieval system requires decoupling the language model from the data fetching process, using an orchestration layer like n8n to strictly control what the AI can see and say. You don't let the AI guess the answer. You use the AI to figure out what data to fetch, you fetch it deterministically, and then you use the AI to format the response.

Here's how you actually build a system that resolves tier-one tickets like Klarna does.

First, you route inbound customer emails from Google Workspace or Microsoft 365 into a webhook in n8n.

The first node in n8n sends the raw email text to the Claude 3.5 Sonnet API. You don't ask Claude to reply to the customer. You give Claude a strict JSON schema and ask it to classify the intent and extract key entities. For example, it returns {"intent": "order_status", "order_number": "UK-88492"}.
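Whatever the model returns, treat it as untrusted input until it has been checked. Here is a minimal validation sketch: the `order_status` and `return_request` intents come from this walkthrough, while the `other` fallback and the function name are assumptions added for illustration.

```python
import json

# "other" is an assumed catch-all that sends the ticket to a human.
ALLOWED_INTENTS = {"order_status", "return_request", "other"}


def parse_classification(raw: str) -> dict:
    """Validate the model's JSON reply; fall back to 'other' on anything unexpected."""
    fallback = {"intent": "other", "order_number": None}
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return fallback  # the model replied with prose instead of JSON

    if not isinstance(data, dict):
        return fallback
    intent = data.get("intent")
    if not isinstance(intent, str) or intent not in ALLOWED_INTENTS:
        return fallback

    order_number = data.get("order_number")
    if not isinstance(order_number, str) or not order_number.strip():
        order_number = None
    return {"intent": intent, "order_number": order_number}
```

Anything that fails validation drops into the same bucket as a ticket the model could not classify, so a malformed reply never reaches the lookup steps.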

If the intent is order_status, n8n uses an HTTP Request node to call the Shopify API. It searches for that specific order number.

This is where you catch the errors. If the Shopify API returns no matching order, n8n doesn't just give up. It routes to a different branch and prompts Claude to draft an email saying, "I can't find order UK-88492. Did you use a different email address at checkout?"

If the Shopify API finds the order, n8n pulls the fulfillment status and the tracking URL. It then queries your logistics provider's API.
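The two branches can be expressed as one small function. This is a sketch of the branching logic only: in n8n it would be an IF node, `find_order` is a hypothetical wrapper around your shop lookup, and the field names are illustrative rather than actual Shopify response fields.

```python
from typing import Callable, Optional


def route_order_lookup(
    order_number: str,
    find_order: Callable[[str], Optional[dict]],
) -> dict:
    """Decide the next branch from the lookup result instead of stopping on a miss."""
    order = find_order(order_number)
    if order is None:
        # Not found: ask the customer a clarifying question.
        return {"branch": "ask_customer", "order_number": order_number}
    # Found: carry the verified fields forward to the carrier lookup.
    return {
        "branch": "fetch_tracking",
        "order_number": order_number,
        "fulfillment_status": order.get("fulfillment_status"),
        "tracking_url": order.get("tracking_url"),
    }
```

Both outcomes produce a structured result, so there is no path where the flow ends without telling the customer something.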

If you want to handle returns, you add another branch. When Claude identifies a return_request intent, n8n queries your Stripe account to verify the original transaction date. If it falls within your thirty-day window, n8n triggers a webhook to your logistics platform to generate a return shipping label, downloads the PDF, and attaches it to the draft email.
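The window check itself should be plain date arithmetic, not something you ask the model to judge. A minimal sketch, assuming the window is measured in calendar days from the transaction date and that day thirty still counts (both are assumptions to confirm against your actual policy):

```python
from datetime import date, timedelta

RETURN_WINDOW = timedelta(days=30)  # the thirty-day policy


def within_return_window(purchased_on: date, requested_on: date) -> bool:
    """True if the request falls on or before day 30 after purchase."""
    elapsed = requested_on - purchased_on
    return timedelta(0) <= elapsed <= RETURN_WINDOW


print(within_return_window(date(2024, 1, 1), date(2024, 1, 31)))  # day 30
print(within_return_window(date(2024, 1, 1), date(2024, 2, 1)))   # day 31
```

Only when this returns true should the flow go on to request a label. Everything else gets a reply explaining the policy or a handoff to a human.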

Now, n8n has a neat packet of JSON data containing the exact status of the physical item. Only at this point do you send the data back to Claude. The prompt is simple. "You are a customer support agent. The customer asked X. The database says Y. Draft a polite response giving them the exact status. Do not invent any information."
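Assembling that final prompt is simple string building. The sketch below uses the wording from the prompt above; the function name and the choice to pass the data as formatted JSON are assumptions, not a requirement of any API.

```python
import json


def build_reply_prompt(customer_message: str, verified_data: dict) -> str:
    """Combine the customer's question with verified data into one drafting prompt."""
    facts = json.dumps(verified_data, indent=2, sort_keys=True)
    return (
        "You are a customer support agent.\n"
        f"The customer asked:\n{customer_message}\n\n"
        f"The database says:\n{facts}\n\n"
        "Draft a polite response giving them the exact status. "
        "Do not invent any information."
    )


prompt = build_reply_prompt(
    "Where is my order UK-88492?",
    {"order_number": "UK-88492", "fulfillment_status": "shipped"},
)
print(prompt)
```

Because the model only ever sees data that a lookup has already returned, a wrong answer traces back to a wrong record, which is far easier to debug than a hallucination.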

Claude drafts the response. n8n pushes it back into your helpdesk or CRM, like HubSpot or Pipedrive, as a draft for a human to approve, or sends it directly via Gmail if you're fully confident.

This setup typically takes two to three weeks to build and test. You should expect to spend between six thousand and twelve thousand pounds on the build, depending on how messy your existing APIs are. The running costs are negligible. You're paying fractions of a penny per API call.

This is how you cut resolution times from eleven minutes to two. You give the language model the exact same tools your human ops manager uses.

The limits of automated operational lookups

Automated operational lookups break down entirely when your underlying data is unstructured, siloed in legacy on-premise software, or reliant on human interpretation. You need to audit your data hygiene before you spend a single pound on orchestration tools.

If your warehouse team updates inventory by scanning items into a modern WMS that has a clean REST API, the n8n flow works beautifully.

If your warehouse team writes tracking numbers on a printed packing slip, scans that slip into a TIFF file, and uploads it to a shared Dropbox folder, the automation dies immediately. You'd need to introduce an OCR step to read the TIFF, which introduces a transcription error rate. A one percent error rate in a database lookup is manageable. A twelve percent error rate from messy handwriting means you're sending incorrect tracking links to angry customers.
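To put those two rates side by side, here is the arithmetic for a hypothetical volume of 1,000 tracking lookups a month. The volume is an assumption for illustration only.

```python
def expected_bad_links(lookups: int, error_rate: float) -> int:
    """Expected number of lookups that return a wrong tracking link."""
    return round(lookups * error_rate)


MONTHLY_LOOKUPS = 1000  # hypothetical volume

print(expected_bad_links(MONTHLY_LOOKUPS, 0.01))  # 10
print(expected_bad_links(MONTHLY_LOOKUPS, 0.12))  # 120
```

Ten wrong links a month is a handful of apology emails. A hundred and twenty is a second support queue created by the automation itself.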

The system also fails on complex, multi-variable disputes. If a customer is claiming a partial refund because a product arrived damaged, but they also want to apply a retroactive discount code they forgot to use at checkout, an LLM will struggle to balance the commercial goodwill against your strict financial policies.

You must build routing rules that instantly flag these edge cases and pass them to a human. The goal is to automate the sixty percent of queries that are purely transactional, freeing your human team to handle the nuanced, high-stakes negotiations.
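A routing rule like that can start out very blunt. The sketch below assumes the classification step returns a list of intents per ticket and sends anything that is not a single, known transactional intent to a human. The intent names and the `route_ticket` function are illustrative.

```python
from typing import Iterable

# Intents the automation is allowed to resolve on its own.
AUTOMATABLE_INTENTS = {"order_status", "return_request"}


def route_ticket(intents: Iterable[str]) -> str:
    """Return 'automation' only for a single, purely transactional intent."""
    unique = set(intents)
    if len(unique) != 1:
        return "human"  # nothing detected, or several issues in one message
    if not unique <= AUTOMATABLE_INTENTS:
        return "human"  # disputes, goodwill requests, anything unrecognised
    return "automation"


print(route_ticket(["order_status"]))
print(route_ticket(["partial_refund", "discount_code"]))
```

Start strict and loosen the rules only once the drafts a human approves stop needing edits.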

The question isn't whether AI can handle customer service. Klarna has already proved the math on that. The real question is whether your business operations are structured cleanly enough for a machine to navigate them. You can't automate a mess. If your ops manager spends half her day logging into three different portals just to figure out why a shipment bounced, an AI agent will hit the exact same roadblocks, just faster. Fix the data layer first. Expose your core systems via clean APIs. Stop buying generic chatbot wrappers that only know how to read your refund policy. Build a system that can actually read your ledger. That is how you turn a support inbox from a cost centre into a machine that runs itself.
